Christian Puhrsch [Wed, 27 Feb 2019 21:48:34 +0000 (13:48 -0800)]

Use name for output variables instead of out in JIT (#17386)

Summary:

This adds 88 matches.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17386

Differential Revision:

D14179139

Pulled By: cpuhrsch

fbshipit-source-id:

2c3263b8e4d084db84791e53290e8c8b1b7aecd5

Jesse Hellemn [Wed, 27 Feb 2019 21:17:16 +0000 (13:17 -0800)]

Forcing UTC on Mac circleci jobs (#17516)

Summary:

And adding timestamps to linux build jobs

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17516

Differential Revision:

D14244533

Pulled By: pjh5

fbshipit-source-id:

26c38f59e0284c99f987d69ce6a2c2af9116c3c2

Xiaomeng Yang [Wed, 27 Feb 2019 20:18:52 +0000 (12:18 -0800)]

Fix math::Set for large tensor (#17539)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17539

Fix math::Set for large tensor

i-am-not-moving-c2-to-c10

Reviewed By: dzhulgakov, houseroad

Differential Revision:

D14240756

fbshipit-source-id:

0ade26790be41fb26d2cc193bfa3082c7bd4e69d

Natalia Gimelshein [Wed, 27 Feb 2019 19:39:37 +0000 (11:39 -0800)]

Add sparse gradient option to `gather` operation (#17182)

Summary:

This PR allows `gather` to optionally return sparse gradients, as requested in #16329. It also allows the autograd engine to accumulate sparse gradients in place when it is safe to do so.

I've commented out the size.size() check in `SparseTensor.cpp` that also caused #17152. It does not seem to me that the check serves a useful purpose, but please correct me if I'm wrong and a better fix is required.

Motivating example:

For this commonly used label smoothing loss function

```
import torch

def label_smoothing_opt(x, target):
    padding_idx = 0
    smoothing = 0.1
    logprobs = torch.nn.functional.log_softmax(x, dim=-1, dtype=torch.float32)
    pad_mask = (target == padding_idx)
    ll_loss = logprobs.gather(dim=-1, index=target.unsqueeze(1), sparse=True).squeeze(1)
    smooth_loss = logprobs.mean(dim=-1)
    loss = (smoothing - 1.0) * ll_loss - smoothing * smooth_loss
    loss.masked_fill_(pad_mask, 0)
    return loss.sum()
```

Backward goes from 12.6 ms with dense gather gradients to 7.3 ms with sparse gradients for 9K tokens x 30K vocab, which is a single-digit percent end-to-end improvement and also reduces the peak memory required.
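To illustrate why a sparse gradient helps here: the backward of `gather` only touches the positions that were gathered, so with one index per row the gradient has one nonzero entry per row. The following is a minimal pure-Python sketch, not the PR's implementation; `gather_last_dim`, `dense_grad`, and `sparse_grad` are hypothetical helper names, and nested lists and a dict stand in for tensors.

```python
def gather_last_dim(x, index):
    """Pick x[i][index[i][k]] for every row i, like gather along the last dim."""
    return [[row[j] for j in idx_row] for row, idx_row in zip(x, index)]


def dense_grad(shape, index, grad_out):
    """Dense backward: allocate a full zero matrix and scatter-add into it."""
    rows, cols = shape
    grad = [[0.0] * cols for _ in range(rows)]
    for i, idx_row in enumerate(index):
        for k, j in enumerate(idx_row):
            grad[i][j] += grad_out[i][k]
    return grad


def sparse_grad(index, grad_out):
    """Sparse backward: store only (row, col) -> value for touched positions."""
    grad = {}
    for i, idx_row in enumerate(index):
        for k, j in enumerate(idx_row):
            grad[(i, j)] = grad.get((i, j), 0.0) + grad_out[i][k]
    return grad


# Toy data: 2 "tokens", vocabulary of 3, one target index per token.
x = [[0.1, 0.2, 0.7], [0.5, 0.4, 0.1]]
index = [[2], [0]]
grad_out = [[1.0], [1.0]]

gathered = gather_last_dim(x, index)
dense = dense_grad((2, 3), index, grad_out)
sparse = sparse_grad(index, grad_out)
```

The dense form stores rows x cols values regardless of how many were gathered, while the sparse form stores one value per gathered element. At the 9K x 30K size quoted above, that is roughly 270 million entries against about 9 thousand.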

Shout-out to core devs: adding python-exposed functions with keyword arguments through native_functions.yaml is very easy now!

cc gchanan apaszke

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17182

Differential Revision:

D14158431

Pulled By: gchanan

fbshipit-source-id:

c8b654611534198025daaf7a634482b3151fbade

Jane Wang [Wed, 27 Feb 2019 19:26:40 +0000 (11:26 -0800)]

add elastic zeus handler (#16746)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/16746

As titled. We use a special URL scheme, elasticzeus, for elastic zeus so that we don't need to change the public interface of init_process_group.

Reviewed By: aazzolini, soumith

Differential Revision:

D13948151

fbshipit-source-id:

88939dcfa0ad93467dabedad6905ec32e6ec60e6

Jongsoo Park [Wed, 27 Feb 2019 18:09:53 +0000 (10:09 -0800)]

optimize elementwise sum (#17456)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17456

Uses an instruction sequence similar to a function in fbgemm/src/QuantUtilAvx2.cc

elementwise_sum_benchmark added

Reviewed By: protonu

Differential Revision:

D14205695

fbshipit-source-id:

84939c9d3551f123deec3baf7086c8d31fbc873e

rohithkrn [Wed, 27 Feb 2019 18:04:33 +0000 (10:04 -0800)]

Enable boolean_mask, adadelta, adagrad fp16 on ROCm (#17235)

Summary:

- Fix bugs, indentation for adadelta and adagrad tests to enable fp16

- Enable boolean_mask fp16 on ROCm

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17235

Differential Revision:

D14240828

Pulled By: bddppq

fbshipit-source-id:

ab6e8f38aa7afb83b4b879f2f4cf2277c643198f

Iurii Zdebskyi [Wed, 27 Feb 2019 17:17:04 +0000 (09:17 -0800)]

Enabled HALF for fill() and zero() methods. Moved them into THTensorFill (#17536)

Summary:

For some additional context on this change, please see this [PR](https://github.com/pytorch/pytorch/pull/17376)

As a part of the work on Bool Tensor, we will need to add support for a bool type to the _fill() and _zero() methods that are currently located in THTensorMath. As we don't need anything else and those methods are not really math related, we are moving them out into a separate THTensorFill for simplicity.

Change:

- moved _fill() and _zero() from THTensorMath.h to THTensorFill

- enabled _fill() and _zero() for HALF type.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17536

Differential Revision:

D14242130

Pulled By: izdeby

fbshipit-source-id:

1d8bd806f0f5510723b9299d360b70cc4ab96afb

Tongzhou Wang [Wed, 27 Feb 2019 04:43:11 +0000 (20:43 -0800)]

Fix autograd with buffers requiring grad in DataParallel (#13352)

Summary:

This was causing a problem with spectral norm, although SN won't use that anymore after #13350.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/13352

Differential Revision:

D14209562

Pulled By: ezyang

fbshipit-source-id:

f5e3183e1e7050ac5a66d203de6f8cf56e775134

Chaitanya Sri Krishna Lolla [Wed, 27 Feb 2019 04:36:45 +0000 (20:36 -0800)]

enable asymmetric dilations and stride for miopen conv (#17472)

Summary:

As of MIOpen 1.7.1 as shipped in ROCm 2.1 this works correctly and we can use MIOpen and do not need to fall back

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17472

Differential Revision:

D14210323

Pulled By: ezyang

fbshipit-source-id:

4c08d0d4623e732eda304fe04cb722c835ec70e4

Johannes M Dieterich [Wed, 27 Feb 2019 04:35:28 +0000 (20:35 -0800)]

Enable tests working on ROCm 2.1 dual gfx906

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/17473

Reviewed By: bddppq

Differential Revision:

D14210243

Pulled By: ezyang

fbshipit-source-id:

519032a1e73c13ecb260ea93102dc8efb645e070

peter [Wed, 27 Feb 2019 04:33:59 +0000 (20:33 -0800)]

Fix linking errors when building dataloader test binaries on Windows (#17494)

Summary:

Fixes #17489.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17494

Differential Revision:

D14226525

Pulled By: ezyang

fbshipit-source-id:

3dfef9bc6f443d647e9f05a54bc17c5717033723

hysts [Wed, 27 Feb 2019 04:20:32 +0000 (20:20 -0800)]

Fix typo

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/17521

Differential Revision:

D14237482

Pulled By: soumith

fbshipit-source-id:

636e0fbe2c667d15fcb649136a65ae64937fa0cb

Christian Puhrsch [Wed, 27 Feb 2019 01:41:56 +0000 (17:41 -0800)]

Remove Bool/IndexTensor from schema for native functions with derivatives (#17193)

Summary:

This only deals with four functions, but is an important first step towards removing BoolTensor and IndexTensor entirely.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17193

Differential Revision:

D14157829

Pulled By: cpuhrsch

fbshipit-source-id:

a36f16d1d88171036c44cc7de60ac9dfed9d14f2

Ilia Cherniavskii [Tue, 26 Feb 2019 23:34:04 +0000 (15:34 -0800)]

Fix operator initialization order (#15445)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/15445

Initialize task graph after operators (task graph uses ops)

Reviewed By: yinghai

Differential Revision:

D13530864

fbshipit-source-id:

fdc91e9158c1b50fcc96fd1983fd000fdf20c7da

bhushan [Tue, 26 Feb 2019 22:11:18 +0000 (14:11 -0800)]

Make transpose consistent with numpy's behavior (#17462)

Summary:

PyTorch's tensor.t() is now equivalent to NumPy's ndarray.T for 1D tensors,

i.e. tensor.t() == tensor

Test case added:

- test_t

fixes #9687
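The rule can be summarised on shapes alone. A minimal sketch, assuming a hypothetical helper `t_shape` that maps an input shape to the shape `t()` would return; rejecting inputs with more than two dimensions is an assumption of this sketch, and none of this is the actual implementation.

```python
def t_shape(shape):
    """Shape after a matrix-style transpose; 0-D and 1-D shapes are unchanged."""
    if len(shape) > 2:
        # Assumption of this sketch: higher-rank inputs are rejected.
        raise ValueError("t_shape expects at most 2 dimensions")
    # Reversing a 0-D or 1-D shape is a no-op, which matches ndarray.T.
    return tuple(reversed(shape))


vector_shape = t_shape((3,))
matrix_shape = t_shape((2, 3))
scalar_shape = t_shape(())
```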

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17462

Differential Revision:

D14214838

Pulled By: soumith

fbshipit-source-id:

c5df1ecc8837be22478e3a82ce4854ccabb35765

Lu Fang [Tue, 26 Feb 2019 20:22:14 +0000 (12:22 -0800)]

Bump up the ONNX default opset version to 10 (#17419)

Summary:

Align with the master of ONNX.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17419

Reviewed By: zrphercule

Differential Revision:

D14197985

Pulled By: houseroad

fbshipit-source-id:

13fc1f7786aadbbf5fe83bddf488fee3dedf58ce

liangdzou [Tue, 26 Feb 2019 20:18:42 +0000 (12:18 -0800)]

' ' ==> ' ' (#17498)

Summary:

Fix formatting error for cpp code.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17498

Reviewed By: zou3519

Differential Revision:

D14224549

Pulled By: fmassa

fbshipit-source-id:

f1721c4a75908ded759aea8c561f2e1d66859eec

Johannes M Dieterich [Tue, 26 Feb 2019 19:35:26 +0000 (11:35 -0800)]

Support all ROCm supported uarchs simultaneously: gfx803, gfx900, gfx906 (#17367)

Summary:

Correct misspelled flag.

Remove dependency on debug flag (HCC_AMDGPU_TARGET)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17367

Differential Revision:

D14227334

Pulled By: bddppq

fbshipit-source-id:

d838f219a9a1854330b0bc851c40dfbba77a32ef

knightXun [Tue, 26 Feb 2019 18:09:54 +0000 (10:09 -0800)]

refactor: a bit intricate so I refactor it (#16995)

Summary:

This code is a bit intricate, so I refactored it.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/16995

Differential Revision:

D14050667

Pulled By: ifedan

fbshipit-source-id:

55452339c6518166f3d4bc9898b1fe2f28601dc4

Elias Ellison [Tue, 26 Feb 2019 16:11:47 +0000 (08:11 -0800)]

new batch of expect file removals

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/17486

Differential Revision:

D14218963

Pulled By: eellison

fbshipit-source-id:

dadc8bb71e756f47cdb04525d47f66c13ed56d16

Michael Suo [Tue, 26 Feb 2019 09:24:05 +0000 (01:24 -0800)]

user defined types (#17314)

Summary:

First pass at user defined types. The following is contained in this PR:

- `UserType` type, which contains a reference to a module with all methods for the type, and a separate namespace for data attributes (map of name -> TypePtr).

- `UserTypeRegistry`, similar to the operator registry

- `UserObject` which is the runtime representation of the user type (just a map of names -> IValues)

- `UserTypeValue` SugaredValue, to manage getattr and setattr while generating IR, plus compiler.cpp changes to make that work.

- Frontend changes to get `torch.jit.script` to work as a class decorator

- `ClassDef` node in our AST.

- primitive ops for object creation, setattr, and getattr, plus alias analysis changes to make mutation safe.

Things that definitely need to get done:

- Import/export, python_print support

- String frontend doesn't understand class definitions yet

- Python interop (using a user-defined type outside TorchScript) is completely broken

- Static methods (without `self`) don't work

Things that are nice but not essential:

- Method definition order shouldn't matter (right now you can only reference a method that's already been defined)

- Class definitions can only contain defs, no other expressions are supported.

Things I definitely won't do initially:

- Polymorphism/inheritance

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17314

Differential Revision:

D14194065

Pulled By: suo

fbshipit-source-id:

c5434afdb9b39f84b7c85a9fdc2891f8250b5025

Michael Suo [Tue, 26 Feb 2019 08:24:15 +0000 (00:24 -0800)]

add mutability to docs (#17454)

Summary:

Not sure the best way to integrate this…I wrote something that focuses on mutability "vertically" through the stack. Should I split it up and distribute it into the various sections, or keep it all together?

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17454

Differential Revision:

D14222883

Pulled By: suo

fbshipit-source-id:

3c83f6d53bba9186c32ee443aa9c32901a0951c0

Christian Puhrsch [Tue, 26 Feb 2019 01:44:07 +0000 (17:44 -0800)]

Remove usages of int64_t from native_functions.yaml

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/17387

Differential Revision:

D14185458

Pulled By: cpuhrsch

fbshipit-source-id:

5c8b358d36b77b60c3226afcd3443c2b1727cbc2

Michael Suo [Tue, 26 Feb 2019 00:55:55 +0000 (16:55 -0800)]

upload alias tracker graph for docs (#17476)

Summary:

as title

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17476

Differential Revision:

D14218312

Pulled By: suo

fbshipit-source-id:

64df096a3431a6f25cd2373f0959d415591fed15

Ailing Zhang [Tue, 26 Feb 2019 00:22:16 +0000 (16:22 -0800)]

Temporarily disable select/topk/kthvalue AD (#17470)

Summary:

Temporarily disable them for perf consideration. Will figure out a way to do `torch.zeros(sizes, grad.options())` in torchscript before enabling these.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17470

Differential Revision:

D14210313

Pulled By: ailzhang

fbshipit-source-id:

efaf44df1192ae42f4fe75998ff0073234bb4204

Elias Ellison [Tue, 26 Feb 2019 00:11:47 +0000 (16:11 -0800)]

Batch of expect file removals Remove dce expect files (#17471)

Summary:

Batch of removing expect test files

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17471

Differential Revision:

D14217265

Pulled By: eellison

fbshipit-source-id:

425da022115b7e83aca86ef61d4d41fd046d439e

Dmytro Dzhulgakov [Tue, 26 Feb 2019 00:00:56 +0000 (16:00 -0800)]

Back out part of "Fix NERPredictor for zero initialization"

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/17482

Reviewed By: david-y-lam

Differential Revision:

D14216135

fbshipit-source-id:

2ef4cb5dea74fc5c68e9b8cb43fcb180f219cb32

Stefan Krah [Mon, 25 Feb 2019 23:30:59 +0000 (15:30 -0800)]

Followup to #17049: change more instances of RuntimeError to IndexError

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/17114

Differential Revision:

D14150890

Pulled By: gchanan

fbshipit-source-id:

579ca71665166c6a904b894598a0b334f0d8acc7

Krishna Kalyan [Mon, 25 Feb 2019 22:32:34 +0000 (14:32 -0800)]

Missing argument description (value) in scatter_ function documentation (#17467)

Summary: Update the docs to include the value parameter that was missing in the `scatter_` function.

Differential Revision:

D14209225

Pulled By: soumith

fbshipit-source-id:

5c65e4d8fbd93fcd11a0a47605bce6d57570f248

Lu Fang [Mon, 25 Feb 2019 22:25:18 +0000 (14:25 -0800)]

Throw exception when foxi is not checked out (#17477)

Summary:

Add check and provide useful warning/error information to user if foxi is not checked out.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17477

Reviewed By: zrphercule

Differential Revision:

D14212896

Pulled By: houseroad

fbshipit-source-id:

557247d5d8fdc016b1c24c2a21503e59f874ad09

svcscm [Mon, 25 Feb 2019 21:42:28 +0000 (13:42 -0800)]

Updating submodules

Reviewed By: yns88

fbshipit-source-id:

ae3e05c2ee3af5df171556698ff1469780d739d1

Michael Suo [Mon, 25 Feb 2019 21:27:43 +0000 (13:27 -0800)]

simplify aliasdb interface (#17453)

Summary:

The previous "getWrites" API relies on the user to do alias checking, which is confusing and inconsistent with the rest of the interface. So replace it with a higher-level call.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17453

Differential Revision:

D14209942

Pulled By: suo

fbshipit-source-id:

d4aff2af6062ab8465ee006fc6dc603296bcb7ab

Elias Ellison [Mon, 25 Feb 2019 21:26:49 +0000 (13:26 -0800)]

fix list type unification (#17424)

Summary:

Previously we were unifying the types of lists across if block outputs. This now fails with Optional subtyping because two types which can be unified have different runtime representations.

```
import torch
from typing import Optional

@torch.jit.script
def list_optional_fails(x):
    # type: (bool) -> Optional[int]
    if x:
        y = [1]
    else:
        y = [None]
    return y[0]
```

The indexing op will expect y to be a generic list, but it will find an intlist.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17424

Differential Revision:

D14210903

Pulled By: eellison

fbshipit-source-id:

4b8b26ba2e7e5bebf617e40316475f91e9109cc2

Shen Li [Mon, 25 Feb 2019 20:02:35 +0000 (12:02 -0800)]

Restore current streams on dst device after switching streams (#17439)

Summary:

When switching back to `d0` from a stream on a different device `d1`, we need to restore the current streams on both `d0` and `d1`. The current implementation only does that for `d0`.
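The bookkeeping described above can be sketched without CUDA. This is a toy model only: the `state` dict, the stream names, and the `use_stream` context manager are hypothetical stand-ins, not PyTorch APIs.

```python
from contextlib import contextmanager

# Toy global state: the current device and the current stream on each device.
state = {"device": 0, "streams": {0: "default-0", 1: "default-1"}}


@contextmanager
def use_stream(device, stream):
    """Make `stream` current on `device`, restoring all touched state on exit."""
    src_device = state["device"]
    src_prev_stream = state["streams"][src_device]
    dst_prev_stream = state["streams"][device]
    state["device"] = device
    state["streams"][device] = stream
    try:
        yield
    finally:
        # Restore the destination device's stream as well as the source's.
        state["streams"][device] = dst_prev_stream
        state["device"] = src_device
        state["streams"][src_device] = src_prev_stream


with use_stream(1, "side-1"):
    inside = (state["device"], state["streams"][1])
after = (state["device"], dict(state["streams"]))
```

Leaving out the first line of the `finally` block reproduces the kind of problem described: device 0 looks fine afterwards, but device 1 is left pointing at the side stream.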

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17439

Differential Revision:

D14208919

Pulled By: mrshenli

fbshipit-source-id:

89f2565b9977206256efbec42adbd789329ccad8

Lu Fang [Mon, 25 Feb 2019 19:05:10 +0000 (11:05 -0800)]

update of fbcode/onnx to e18bb41d255a23daf368ffd62a2645db55db4c72 (#17460)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17460

Previous import was 4c091e048ca42682d63ccd3c1811560bc12b732d

Included changes:

- **[e18bb41](https://github.com/onnx/onnx/commit/e18bb41)**: Infer shape of the second output of Dropout op (#1822) <Shinichiro Hamaji>

- **[cb544d0](https://github.com/onnx/onnx/commit/cb544d0)**: Clarify dtype of Dropout's mask output (#1826) <Shinichiro Hamaji>

- **[b60f693](https://github.com/onnx/onnx/commit/b60f693)**: Fix shape inference when auto_pad is notset (#1824) <Li-Wen Chang>

- **[80346bd](https://github.com/onnx/onnx/commit/80346bd)**: update test datat (#1825) <Rui Zhu>

- **[b37fc6d](https://github.com/onnx/onnx/commit/b37fc6d)**: Add stringnormalizer operator to ONNX (#1745) <Dmitri Smirnov>

Reviewed By: zrphercule

Differential Revision:

D14206264

fbshipit-source-id:

0575fa3374ff2b93b2ecee9989cfa4793c599117

Will Feng [Mon, 25 Feb 2019 18:56:03 +0000 (10:56 -0800)]

Fix variable checking in THCPModule_setRNGState (#17474)

Summary:

See https://github.com/pytorch/pytorch/pull/16325/files#r259576901

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17474

Differential Revision:

D14209549

Pulled By: yf225

fbshipit-source-id:

2ae091955ae17f5d1540f7d465739c4809c327f8

vishwakftw [Mon, 25 Feb 2019 18:32:48 +0000 (10:32 -0800)]

Fix reduction='none' in poisson_nll_loss (#17358)

Summary:

Changelog:

- Modify `if` to `elif` in reduction mode comparison

- Add error checking for reduction mode
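A minimal sketch of the dispatch pattern this changelog describes, in plain Python rather than the actual loss code; `reduce_loss` is a hypothetical helper. One way such a bug can arise is when independent `if` statements are followed by a trailing `else`, so that an earlier mode such as 'none' also falls into the final branch; chaining with `elif` and ending with an explicit error avoids that.

```python
def reduce_loss(values, reduction="mean"):
    """Apply a reduction mode to a list of per-element losses."""
    if reduction == "none":
        return list(values)
    elif reduction == "mean":
        return sum(values) / len(values)
    elif reduction == "sum":
        return sum(values)
    else:
        # Unknown modes are rejected instead of silently picking a default.
        raise ValueError(reduction + " is not a valid value for reduction")


per_element = reduce_loss([1.0, 2.0, 3.0], "none")
mean_loss = reduce_loss([1.0, 2.0, 3.0], "mean")
sum_loss = reduce_loss([1.0, 2.0, 3.0], "sum")
```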

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17358

Differential Revision:

D14190523

Pulled By: zou3519

fbshipit-source-id:

2b734d284dc4c40679923606a1aa148e6a0abeb8

Michael Liu [Mon, 25 Feb 2019 16:10:14 +0000 (08:10 -0800)]

Apply modernize-use-override (4)

Summary:

Use C++11’s override and remove virtual where applicable.

Changes are automatically generated.

bypass-lint

drop-conflicts

Reviewed By: ezyang

Differential Revision:

D14191981

fbshipit-source-id:

1f3421335241cbbc0cc763b8c1e85393ef2fdb33

Gregory Chanan [Mon, 25 Feb 2019 16:08:15 +0000 (08:08 -0800)]

Fix nonzero for scalars on cuda, to_sparse for scalars on cpu/cuda. (#17406)

Summary:

I originally set out to fix to_sparse for scalars, which had some overly restrictive checking (sparse_dim > 0, which is impossible for a scalar).

This fix uncovered an issue with nonzero: it didn't properly return a size (z, 0) tensor for an input scalar, where z is the number of nonzero elements (i.e. 0 or 1).
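The (z, 0) shape follows from the general rule that a nonzero result has one row per nonzero element and one column per input dimension. A small sketch over nested Python lists, with hypothetical helpers `ndim` and `nonzero`; it only models the shapes and indices, not the CUDA fix itself.

```python
def ndim(value):
    """Number of dimensions of a nested-list 'tensor'; a bare number is 0-D."""
    n = 0
    while isinstance(value, list):
        n += 1
        value = value[0] if value else 0
    return n


def nonzero(value):
    """Return (indices, shape), where shape is (z, ndim) as described above."""
    def walk(v, prefix):
        if isinstance(v, list):
            for i, item in enumerate(v):
                yield from walk(item, prefix + (i,))
        elif v != 0:
            yield prefix

    indices = list(walk(value, ()))
    return indices, (len(indices), ndim(value))


vec_idx, vec_shape = nonzero([0, 3, 0, 4])
one_idx, one_shape = nonzero(5)
zero_idx, zero_shape = nonzero(0)
```

For a scalar, each index is the empty tuple, so the result has zero columns and either one row (nonzero input) or no rows (zero input).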

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17406

Differential Revision:

D14185393

Pulled By: gchanan

fbshipit-source-id:

f37a6e1e3773fd9cbf69eeca7fdebb3caa192a19

Tongliang Liao [Mon, 25 Feb 2019 16:07:56 +0000 (08:07 -0800)]

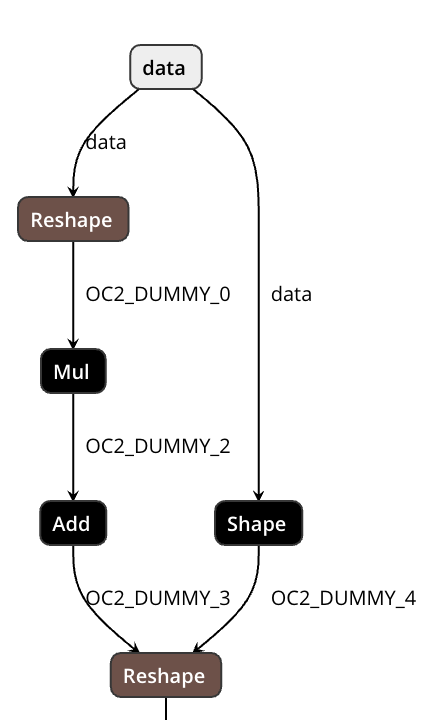

Export ElementwiseLinear to ONNX (Mul + Add). (#17411)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17411

Reshape-based approach to support dynamic shape.

The first Reshape flattens the inner dimensions and the second one recovers the actual shape.

No Shape/Reshape will be generated unless necessary.
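The Reshape, Mul, Add, Reshape sequence can be sketched on a flat row-major buffer. This is an illustration only: `elementwise_linear` is a hypothetical helper, and it assumes the operator computes `x * w + b` with `w` and `b` spanning the dimensions from `axis` onward; it is not the exporter's code.

```python
from math import prod


def elementwise_linear(data, shape, w, b, axis=1):
    """data: flat row-major values; w, b: one entry per flattened inner element."""
    n = prod(shape[:axis])
    d = prod(shape[axis:])
    # First "Reshape": view the buffer as an (n, d) matrix.
    rows = [data[i * d:(i + 1) * d] for i in range(n)]
    # "Mul" then "Add", broadcasting w and b over the n rows.
    out_rows = [[x * wi + bi for x, wi, bi in zip(row, w, b)] for row in rows]
    # Second "Reshape": same values, reported with the original shape.
    flat = [v for row in out_rows for v in row]
    return flat, tuple(shape)


out, out_shape = elementwise_linear(
    [1, 2, 3, 4, 5, 6, 7, 8], (2, 2, 2), w=[1, 2, 3, 4], b=[0, 0, 0, 1]
)
```

When the input is already two-dimensional, both reshapes are identities, which is consistent with the note that no Shape/Reshape is generated unless necessary.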

Pull Request resolved: https://github.com/pytorch/pytorch/pull/16716

Reviewed By: zrphercule

Differential Revision:

D14094532

Pulled By: houseroad

fbshipit-source-id:

bad6a1fbf5963ef3dd034ef4bf440f5a5d6980bc

Lu Fang [Mon, 25 Feb 2019 15:57:21 +0000 (07:57 -0800)]

Add foxi submodule (ONNXIFI facebook extension)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/17178

Reviewed By: yinghai

Differential Revision:

D14197987

Pulled By: houseroad

fbshipit-source-id:

c21d7235e40c2ca4925a10c467c2b4da2f1024ad

Michael Liu [Mon, 25 Feb 2019 15:26:27 +0000 (07:26 -0800)]

Fix remaining -Wreturn-std-move violations in fbcode (#17308)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17308

In some cases there is still no RVO/NRVO and std::move is still needed. Latest Clang gained a -Wreturn-std-move warning to detect cases like this (see https://reviews.llvm.org/D43322).

Reviewed By: igorsugak

Differential Revision:

D14150915

fbshipit-source-id:

0df158f0b2874f1e16f45ba9cf91c56e9cb25066

Michael Suo [Mon, 25 Feb 2019 07:01:32 +0000 (23:01 -0800)]

add debug/release tip to cpp docs (#17452)

Summary:

as title. These were already added to the tutorials, but I didn't add them to the cpp docs.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17452

Differential Revision:

D14206501

Pulled By: suo

fbshipit-source-id:

89b5c8aaac22d05381bc4a7ab60d0bb35e43f6f5

Michael Suo [Mon, 25 Feb 2019 07:00:10 +0000 (23:00 -0800)]

add pointer to windows FAQ in contributing.md (#17450)

Summary:

" ProTip! Great commit summaries contain fewer than 50 characters. Place extra information in the extended description."

lol

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17450

Differential Revision:

D14206500

Pulled By: suo

fbshipit-source-id:

af7ffe299f8c8f04fa8e720847a1f6d576ebafc1

Thomas Viehmann [Sun, 24 Feb 2019 04:57:28 +0000 (20:57 -0800)]

Remove ROIPooling (#17434)

Summary:

Fixes: #17399

It's undocumented, unused and, according to the issue, not actually working.

Differential Revision:

D14200088

Pulled By: soumith

fbshipit-source-id:

a81f0d0f5516faea2bd6aef5667b92c7dd012dbd

Krishna Kalyan [Sun, 24 Feb 2019 04:24:21 +0000 (20:24 -0800)]

Add example to WeightedRandomSampler doc string (#17432)

Summary: Examples for the weighted random sampler are missing [here](https://pytorch.org/docs/stable/data.html#torch.utils.data.WeightedRandomSampler)

Differential Revision:

D14198642

Pulled By: soumith

fbshipit-source-id:

af6d8445d31304011002dd4308faaf40b0c1b609

Michael Suo [Sat, 23 Feb 2019 23:52:38 +0000 (15:52 -0800)]

Revert D14095703: [pytorch][PR] [jit] Add generic list/dict custom op bindings

Differential Revision:

D14095703

Original commit changeset:

2b5ae20d42ad

fbshipit-source-id:

85b23fe4ce0090922da953403c95691bf3e28710

svcscm [Sat, 23 Feb 2019 20:43:01 +0000 (12:43 -0800)]

Updating submodules

Reviewed By: zpao

fbshipit-source-id:

8fa0be05e7410a863febb98b18be55ab723a41db

Jaliya Ekanayake [Sat, 23 Feb 2019 16:46:24 +0000 (08:46 -0800)]

Jaliyae/chunk buffer fix (#17409)

Summary:

The chunk buffer could hang when no data is read and the buffer size is smaller than the chunk size. We detected this while running with a larger dataset, hence the fix. I added a test to mimic the situation and validated that the fix works. Thank you Xueyun for finding this issue.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17409

Differential Revision:

D14198546

Pulled By: soumith

fbshipit-source-id:

b8ca43b0400deaae2ebb6601fdc65b47f32b0554

Stefan Krah [Sat, 23 Feb 2019 16:24:05 +0000 (08:24 -0800)]

Skip test_event_handle_multi_gpu() on a single GPU system (#17402)

Summary:

This fixes the following test failure:

```
======================================================================
ERROR: test_event_handle_multi_gpu (__main__.TestMultiprocessing)
----------------------------------------------------------------------
Traceback (most recent call last):
  File "test_multiprocessing.py", line 445, in test_event_handle_multi_gpu
    with torch.cuda.device(d1):
  File "/home/stefan/rel/lib/python3.7/site-packages/torch/cuda/__init__.py", line 229, in __enter__
    torch._C._cuda_setDevice(self.idx)
RuntimeError: cuda runtime error (10) : invalid device ordinal at /home/stefan/pytorch/torch/csrc/cuda/Module.cpp:33
```

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17402

Differential Revision:

D14195190

Pulled By: soumith

fbshipit-source-id:

e911f3782875856de3cfbbd770b6d0411d750279

Olen ANDONI [Sat, 23 Feb 2019 16:19:09 +0000 (08:19 -0800)]

fix(typo): Change 'integeral' to 'integer'

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/17396

Differential Revision:

D14195023

Pulled By: soumith

fbshipit-source-id:

300ab68c24bfbf10768fefac44fad64784463c8f

Lu Fang [Sat, 23 Feb 2019 07:56:21 +0000 (23:56 -0800)]

Fix the ONNX expect file (#17430)

Summary:

The CI is currently broken; this diff should fix it.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17430

Differential Revision:

D14198045

Pulled By: houseroad

fbshipit-source-id:

a1c8cb5ccff66f32488702bf72997f634360eb5b

Karl Ostmo [Sat, 23 Feb 2019 04:10:22 +0000 (20:10 -0800)]

order caffe2 ubuntu configs contiguously (#17427)

Summary:

This involves another purely cosmetic (ordering) change to the `config.yml` to facilitate simpler logic.

Other changes:

* add some review feedback as comments

* exit with nonzero status on config.yml mismatch

* produce a diagram for pytorch builds

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17427

Differential Revision:

D14197618

Pulled By: kostmo

fbshipit-source-id:

267439d3aa4c0a80801adcde2fa714268865900e

Jongsoo Park [Sat, 23 Feb 2019 04:03:09 +0000 (20:03 -0800)]

remove redundant inference functions for FC (#17407)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17407

As title says

Reviewed By: csummersea

Differential Revision:

D14177921

fbshipit-source-id:

e48e1086d37de2c290922d1f498e2d2dad49708a

Jongsoo Park [Sat, 23 Feb 2019 03:38:38 +0000 (19:38 -0800)]

optimize max pool 2d (#17418)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17418

Retry of D14181620, this time with CMakeLists.txt changes

Reviewed By: jianyuh

Differential Revision:

D14190538

fbshipit-source-id:

c59b1bd474edf6376f4c2767a797b041a2ddf742

Roy Li [Sat, 23 Feb 2019 02:33:18 +0000 (18:33 -0800)]

Generate derived extension backend Type classes for each scalar type (#17278)

Summary:

Previously we only generated one class for each extension backend. This caused issues with scalarType() calls and mapping from variable Types to non-variable types. With this change we generate one Type for each scalar type.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17278

Reviewed By: ezyang

Differential Revision:

D14161489

Pulled By: li-roy

fbshipit-source-id:

91e6a8f73d19a45946c43153ea1d7bc9d8fb2409

Ilia Cherniavskii [Sat, 23 Feb 2019 02:30:58 +0000 (18:30 -0800)]

Better handling of net errors in prof_dag counters (#17384)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17384

Better handling of possible net run errors in prof_dag counters.

Reviewed By: yinghai

Differential Revision:

D14177619

fbshipit-source-id:

51bc952c684c53136ce97e22281b1af5706f871e

eellison [Sat, 23 Feb 2019 01:54:09 +0000 (17:54 -0800)]

Batch of Expect Files removal (#17414)

Summary:

Batch of removing expect files, and some tests that no longer test anything.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17414

Differential Revision:

D14196342

Pulled By: eellison

fbshipit-source-id:

75c45649d1dd1ce39958fb02f5b7a2622c1d1d01

Arthur Crippa Búrigo [Sat, 23 Feb 2019 01:11:06 +0000 (17:11 -0800)]

Fix target name.

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/17365

Differential Revision:

D14195831

Pulled By: soumith

fbshipit-source-id:

fdf03f086f650148c34f4c548c66ef1eee698f05

Zachary DeVito [Sat, 23 Feb 2019 01:10:19 +0000 (17:10 -0800)]

jit technical docs - parts 1, 2, and most of 3 (#16887)

Summary:

This will evolve into complete technical docs for the jit. Posting what I have so far so people can start reading it and offering suggestions. Go to Files Changed and click 'View File' to see the markdown formatted.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/16887

Differential Revision:

D14191219

Pulled By: zdevito

fbshipit-source-id:

071a0e7db05e4f2eb657fbb99bcd903e4f46d84a

Vishwak Srinivasan [Sat, 23 Feb 2019 01:03:49 +0000 (17:03 -0800)]

USE_ --> BUILD_ for CAFFE2_OPS and TEST (#17390)

Differential Revision:

D14195572

Pulled By: soumith

fbshipit-source-id:

28e4ff3fe03a151cd4ed014c64253389cb85de3e

Gemfield [Sat, 23 Feb 2019 00:56:06 +0000 (16:56 -0800)]

Fix install libcaffe2_protos.a issue mentioned in #14317 (#17393)

Summary:

Fix install libcaffe2_protos.a issue mentioned in #14317.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17393

Differential Revision:

D14195359

Pulled By: soumith

fbshipit-source-id:

ed4da594905d708d03fcd719dc50aec6811d5d3f

Yinghai Lu [Sat, 23 Feb 2019 00:53:32 +0000 (16:53 -0800)]

Improve onnxifi backend init time (#17375)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17375

Previously we created the onnxGraph first and took it to the onnx manager for registration, which doesn't work well in practice. This diff takes a "bring your own constructor" approach to reduce the resources spent on backend compilation.

Reviewed By: kimishpatel, rdzhabarov

Differential Revision:

D14173793

fbshipit-source-id:

cbc4fe99fc522f017466b2fce88ffc67ae6757cf

vfdev [Sat, 23 Feb 2019 00:18:17 +0000 (16:18 -0800)]

fix code block typo

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/17421

Differential Revision:

D14194877

Pulled By: soumith

fbshipit-source-id:

6173835d833ce9e9c02ac7bd507cd424a20f2738

Junjie Bai [Fri, 22 Feb 2019 23:01:46 +0000 (15:01 -0800)]

Double resnet50 batch size in benchmark script (#17416)

Summary:

The benchmarks are now running on gpu cards with more memory

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17416

Differential Revision:

D14190493

Pulled By: bddppq

fbshipit-source-id:

66db1ca1fa693d24c24b9bc0185a6dd8a3337103

Mikhail Zolotukhin [Fri, 22 Feb 2019 22:56:02 +0000 (14:56 -0800)]

Preserve names when converting to/from NetDef.

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/17378

Differential Revision:

D14176515

Pulled By: ZolotukhinM

fbshipit-source-id:

da9ea28310250ab3ca3a99cdc210fd8d1fbbc82b

David Riazati [Fri, 22 Feb 2019 22:38:33 +0000 (14:38 -0800)]

Add generic list/dict custom op bindings (#17037)

Summary:

Fixes #17017

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17037

Differential Revision:

D14095703

Pulled By: driazati

fbshipit-source-id:

2b5ae20d42ad21c98c86a8f1cd7f1de175510507

Elias Ellison [Fri, 22 Feb 2019 22:30:44 +0000 (14:30 -0800)]

fix test

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/17304

Differential Revision:

D14151545

Pulled By: eellison

fbshipit-source-id:

d85535b709c58e2630b505ba57e9823d5a59c1d5

Ailing Zhang [Fri, 22 Feb 2019 22:19:04 +0000 (14:19 -0800)]

Improvements for current AD (#17187)

Summary:

This PR stops passing the size of `self` from the forward pass to the backward pass in a few places where `self` itself is already required in the backward pass. This could be the cause of the potential slowdown in #16689. I will attach a few perf numbers I got (still a bit volatile between runs) in the comments.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17187

Differential Revision:

D14179512

Pulled By: ailzhang

fbshipit-source-id:

5f3b1f6f26a3fef6dec15623b940380cc13656fa

Lu Fang [Fri, 22 Feb 2019 22:05:33 +0000 (14:05 -0800)]

Bump up the producer version in ONNX exporter

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/17410

Reviewed By: zrphercule

Differential Revision:

D14187821

Pulled By: houseroad

fbshipit-source-id:

a8c1d2f7b6ef63e7e92cba638e90922ef98b8702

Michael Kösel [Fri, 22 Feb 2019 21:58:08 +0000 (13:58 -0800)]

list add insert and remove (#17200)

Summary:

See https://github.com/pytorch/pytorch/issues/16662

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17200

Differential Revision:

D14144020

Pulled By: driazati

fbshipit-source-id:

c9a52954fd5f4fb70e3a0dc02d2768e0de237142

Jesse Hellemn [Fri, 22 Feb 2019 21:53:11 +0000 (13:53 -0800)]

Pin nightly builds to last commit before 5am UTC (#17381)

Summary:

This fell through the cracks during the migration from pytorch/builder to circleci. It's technically still racy, but a race is much less likely now.
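A minimal sketch of the pinning idea, not the actual circleci configuration: each job picks the newest commit older than the most recent 5am UTC cutoff instead of whatever HEAD happens to be when it starts. The function name, the `(sha, timestamp)` input shape, and the `cutoff_hour` parameter are assumptions made for illustration.

```python
from datetime import datetime, time, timedelta, timezone


def last_commit_before_cutoff(commits, now, cutoff_hour=5):
    """Pick the newest commit made before the most recent cutoff hour (UTC).

    commits: iterable of (sha, timezone-aware datetime) pairs (hypothetical input).
    now: timezone-aware datetime at which a job evaluates the pin.
    Returns the sha, or None when no commit precedes the cutoff.
    """
    now = now.astimezone(timezone.utc)
    cutoff = datetime.combine(now.date(), time(cutoff_hour), tzinfo=timezone.utc)
    if cutoff > now:
        # Today's cutoff has not happened yet, so fall back to yesterday's.
        cutoff -= timedelta(days=1)
    eligible = [
        (stamp.astimezone(timezone.utc), sha)
        for sha, stamp in commits
        if stamp.astimezone(timezone.utc) < cutoff
    ]
    return max(eligible)[1] if eligible else None
```

Jobs that start at different times after the same cutoff resolve to the same sha, which is the point of pinning. A job that straddles the cutoff itself can still disagree with its siblings.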

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17381

Differential Revision:

D14190137

Pulled By: pjh5

fbshipit-source-id:

2d4cd04ee874cacce47d1d50b87a054b0503bb82

Zachary DeVito [Fri, 22 Feb 2019 21:37:26 +0000 (13:37 -0800)]

Lazily load libcuda libnvrtc from c++ (#17317)

Summary:

Fixes https://github.com/pytorch/pytorch/issues/16860

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17317

Differential Revision:

D14157877

Pulled By: zdevito

fbshipit-source-id:

c37aec2d77c2e637d4fc6ceffe2bd32901c70317

Elias Ellison [Fri, 22 Feb 2019 21:34:48 +0000 (13:34 -0800)]

Refactor Type Parser b/w Schemas & IRParser into a type common parser (#17383)

Summary:

Creates a new type parser shared between the IR parser and the schema parser.

Also adds parsing of CompleteTensorType and DimensionedTensorType, and feature-gates that for the IRParser.

Renames the existing type_parser for Python annotations to python_type_parser, and names the new one jit_type_parser.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17383

Differential Revision:

D14186438

Pulled By: eellison

fbshipit-source-id:

bbd5e337917d8862c7c6fa0a0006efa101c76afe

Lu Fang [Fri, 22 Feb 2019 19:57:38 +0000 (11:57 -0800)]

add the support for stable ONNX opsets in exporter (#16068)

Summary:

Still WIP; needs more tests and correct handling for opset 8 in symbolics.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/16068

Reviewed By: zrphercule

Differential Revision:

D14185855

Pulled By: houseroad

fbshipit-source-id:

55200be810c88317c6e80a46bdbeb22e0b6e5f9e

Karl Ostmo [Fri, 22 Feb 2019 19:22:14 +0000 (11:22 -0800)]

add readme and notice at the top of config.yml (#17323)

Summary:

reorder some envars for consistency

add readme and notice at the top of config.yml

generate more yaml from Python

closes #17322

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17323

Differential Revision:

D14186734

Pulled By: kostmo

fbshipit-source-id:

23b2b2c1960df6f387f1730c8df1ec24a30433fd

Lu Fang [Fri, 22 Feb 2019 19:15:11 +0000 (11:15 -0800)]

Revert D14181620: [caffe2/int8] optimize max pool 2d

Differential Revision:

D14181620

Original commit changeset:

ffc6c4412bd1

fbshipit-source-id:

4391703164a672c9a8daecb24a46578765df67c6

Gu, Jinghui [Fri, 22 Feb 2019 18:32:07 +0000 (10:32 -0800)]

fallback operators to CPU for onnx support (#15270)

Summary:

fallback operators to CPU for onnx support

Pull Request resolved: https://github.com/pytorch/pytorch/pull/15270

Differential Revision:

D14099496

Pulled By: yinghai

fbshipit-source-id:

52b744aa5917700a802bdf19f7007cdcaa6e640a

Jongsoo Park [Fri, 22 Feb 2019 18:20:24 +0000 (10:20 -0800)]

optimize max pool 2d (#17391)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17391

Optimize 2D max pool using AVX2 intrinsics.

Reviewed By: jianyuh

Differential Revision:

D14181620

fbshipit-source-id:

ffc6c4412bd1c1d7839fe06226921df40d9cab83

Iurii Zdebskyi [Fri, 22 Feb 2019 17:40:17 +0000 (09:40 -0800)]

Fixed the script for the THC generated files (#17370)

Summary:

As of right now, the script will produce a new generated file which will be inconsistent with the rest.

Test Result:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17370

Differential Revision:

D14184943

Pulled By: izdeby

fbshipit-source-id:

5d3b956867bee661256cb4f38f086f33974a1c8b

Gregory Chanan [Fri, 22 Feb 2019 16:59:53 +0000 (08:59 -0800)]

Fix coalesce, clone, to_dense for sparse scalars.

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/17379

Differential Revision:

D14183641

Pulled By: gchanan

fbshipit-source-id:

dbd071b648695d51502ed34ab204a1aee7e6259b

Tongzhou Wang [Fri, 22 Feb 2019 16:27:04 +0000 (08:27 -0800)]

Fix DataParallel(cpu_m).cuda() not working by checking at forward (#17363)

Summary:

Fixes #17362

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17363

Differential Revision:

D14175151

Pulled By: soumith

fbshipit-source-id:

7b7e2335d553ed2133287deeaca3f6b6254aea4a

Will Feng [Fri, 22 Feb 2019 15:54:47 +0000 (07:54 -0800)]

Rename BatchNorm running_variance to running_var (#17371)

Summary:

Currently there is a mismatch in naming between Python BatchNorm `running_var` and C++ BatchNorm `running_variance`, which causes JIT model parameters loading to fail (https://github.com/pytorch/vision/pull/728#issuecomment-466067138):

```

terminate called after throwing an instance of 'c10::Error'

what(): No such serialized tensor 'running_variance' (read at /home/shahriar/Build/pytorch/torch/csrc/api/src/serialize/input-archive.cpp:27)

frame #0: c10::Error::Error(c10::SourceLocation, std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&) + 0x85 (0x7f2d92d32f95 in /usr/local/lib/libc10.so)

frame #1: torch::serialize::InputArchive::read(std::__cxx11::basic_string<char, std::char_traits<char>, std::allocator<char> > const&, at::Tensor&, bool) + 0xdeb (0x7f2d938551ab in /usr/local/lib/libtorch.so.1)

frame #2: torch::nn::Module::load(torch::serialize::InputArchive&) + 0x98 (0x7f2d9381cd08 in /usr/local/lib/libtorch.so.1)

frame #3: torch::nn::Module::load(torch::serialize::InputArchive&) + 0xf9 (0x7f2d9381cd69 in /usr/local/lib/libtorch.so.1)

frame #4: torch::nn::Module::load(torch::serialize::InputArchive&) + 0xf9 (0x7f2d9381cd69 in /usr/local/lib/libtorch.so.1)

frame #5: torch::nn::operator>>(torch::serialize::InputArchive&, std::shared_ptr<torch::nn::Module> const&) + 0x32 (0x7f2d9381c7b2 in /usr/local/lib/libtorch.so.1)

frame #6: <unknown function> + 0x2b16c (0x5645f4d1916c in /home/shahriar/Projects/CXX/build-TorchVisionTest-Desktop_Qt_5_12_1_GCC_64bit-Debug/TorchVisionTest)

frame #7: <unknown function> + 0x27a3c (0x5645f4d15a3c in /home/shahriar/Projects/CXX/build-TorchVisionTest-Desktop_Qt_5_12_1_GCC_64bit-Debug/TorchVisionTest)

frame #8: <unknown function> + 0x2165c (0x5645f4d0f65c in /home/shahriar/Projects/CXX/build-TorchVisionTest-Desktop_Qt_5_12_1_GCC_64bit-Debug/TorchVisionTest)

frame #9: <unknown function> + 0x1540b (0x5645f4d0340b in /home/shahriar/Projects/CXX/build-TorchVisionTest-Desktop_Qt_5_12_1_GCC_64bit-Debug/TorchVisionTest)

frame #10: __libc_start_main + 0xf3 (0x7f2d051dd223 in /usr/lib/libc.so.6)

frame #11: <unknown function> + 0x1381e (0x5645f4d0181e in /home/shahriar/Projects/CXX/build-TorchVisionTest-Desktop_Qt_5_12_1_GCC_64bit-Debug/TorchVisionTest)

```

Renaming C++ BatchNorm `running_variance` to `running_var` should fix this problem.

This is a BC-breaking change, but it should be easy for end users to rename `running_variance` to `running_var` at their call sites.
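For anyone who keeps tensors in a plain name-to-value mapping keyed by the old buffer name, the rename can be sketched as below. `rename_running_variance` is a hypothetical helper written for illustration; it is not part of PyTorch and is not how this PR makes the change.

```python
def rename_running_variance(state):
    """Return a copy of a name -> value mapping with the old buffer name replaced.

    Only the last dotted component of each key is examined, so
    "layer1.bn.running_variance" becomes "layer1.bn.running_var".
    """
    old, new = "running_variance", "running_var"
    renamed = {}
    for key, value in state.items():
        prefix, _, leaf = key.rpartition(".")
        if leaf == old:
            key = f"{prefix}.{new}" if prefix else new
        renamed[key] = value
    return renamed
```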

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17371

Reviewed By: goldsborough

Differential Revision:

D14172775

Pulled By: yf225

fbshipit-source-id:

b9d3729ec79272a8084269756f28a8f7c4dd16b6

svcscm [Fri, 22 Feb 2019 06:46:32 +0000 (22:46 -0800)]

Updating submodules

Reviewed By: zpao

fbshipit-source-id:

ac16087a2b27b028d8e9def81369008c4723d70f

Chandler Zuo [Fri, 22 Feb 2019 03:31:21 +0000 (19:31 -0800)]

Fix concat dimension check bug (#17343)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17343

See [post](https://fb.workplace.com/groups/1405155842844877/permalink/2630764056950710/)

Reviewed By: dzhulgakov

Differential Revision:

D14163001

fbshipit-source-id:

038f15d6a58b3bc31910e7bfa47c335e25739f12

David Riazati [Fri, 22 Feb 2019 01:37:22 +0000 (17:37 -0800)]

Add dict to docs

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/16640

Differential Revision:

D14178270

Pulled By: driazati

fbshipit-source-id:

581040abd0b7f8636c53fd97c7365df99a2446cf

David Riazati [Fri, 22 Feb 2019 00:11:37 +0000 (16:11 -0800)]

Add LSTM to standard library (#15744)

Summary:

**WIP**

Attempt 2 at #14831

This adds `nn.LSTM` to the jit standard library. Necessary changes to the module itself are detailed in comments. The main limitation is the lack of a true `PackedSequence`; instead, this PR uses an ordinary `tuple` to stand in for `PackedSequence`.

Most of the new code in `rnn.py` is copied to `nn.LSTM` from `nn.RNNBase` to specialize it for LSTM since `hx` is a `Tuple[Tensor, Tensor]` (rather than just a `Tensor` as in the other RNN modules) for LSTM.

As a hack it adds an internal annotation `@_parameter_list` to mark that a function returns all the parameters of a module. The weights for `RNN` modules are passed to the corresponding op as a `List[Tensor]`. In Python this has to be gathered dynamically since Parameters could be moved from CPU to GPU or be deleted and replaced (e.g. if someone calls `weight_norm` on their module, #15766), but in the JIT parameter lists are immutable, hence a builtin to handle this differently in Python/JIT.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/15744

Differential Revision:

D14173198

Pulled By: driazati

fbshipit-source-id:

4ee8113159b3a8f29a9f56fe661cfbb6b30dffcd

David Riazati [Fri, 22 Feb 2019 00:09:43 +0000 (16:09 -0800)]

Dict mutability (#16884)

Summary:

Adds `aten::_set_item` for `dict[key] = value` assignments

Pull Request resolved: https://github.com/pytorch/pytorch/pull/16884

Differential Revision:

D14000488

Pulled By: driazati

fbshipit-source-id:

ea1b46e0a736d095053effb4bc52753f696617b2

Soumith Chintala [Fri, 22 Feb 2019 00:05:16 +0000 (16:05 -0800)]

Fix static linkage cases and NO_DISTRIBUTED=1 + CUDA (#16705) (#17337)

Summary:

Attempt #2 (attempt 1 is https://github.com/pytorch/pytorch/pull/16705 and got reverted because of CI failures)

Fixes https://github.com/pytorch/pytorch/issues/14805

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17337

Differential Revision:

D14175626

Pulled By: soumith

fbshipit-source-id:

66f2e10e219a1bf88ed342ec5c89da6f2994d8eb

Elias Ellison [Thu, 21 Feb 2019 23:50:08 +0000 (15:50 -0800)]

Fix Insert Constant Lint Fail (#17316)

Summary:

The test I added was failing lint because a constant was being created that wasn't being destroyed.

It was being inserted to all_nodes, then failing the check

` AT_ASSERT(std::includes(ALL_OF(sum_set), ALL_OF(all_nodes_set)));`
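The check can be read as a subset test: `std::includes(A, B)` holds when every element of `B` is also in `A`, so the assert requires `all_nodes_set` to be contained in `sum_set`. A minimal Python sketch of that condition follows, assuming `sum_set` holds the nodes the linter accounted for while walking the graph (an assumption; the message only shows the assert). `find_leaked_nodes` is a hypothetical name.

```python
def find_leaked_nodes(sum_set, all_nodes_set):
    """Return nodes that are registered in all_nodes_set but missing from sum_set.

    An empty result corresponds to the assert passing, i.e.
    all_nodes_set is a subset of sum_set.
    """
    return sorted(set(all_nodes_set) - set(sum_set))
```

With a constant that was created but never destroyed or placed in the graph, the result is non-empty, which matches the failure described above.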

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17316

Differential Revision:

D14172548

Pulled By: eellison

fbshipit-source-id:

0922db21b7660e0c568c0811ebf09b22081991a4

Zachary DeVito [Thu, 21 Feb 2019 23:24:23 +0000 (15:24 -0800)]

Partial support for kwarg_only arguments in script (#17339)

Summary:

This provides the minimum necessary to allow derivative formulas for things that have a kwarg only specifier in their schema. Support for non-parser frontend default arguments for kwargs is not completed.

Fixes #16921

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17339

Differential Revision:

D14160923

Pulled By: zdevito

fbshipit-source-id:

822e964c5a3fe2806509cf24d9f51c6dc01711c3

Natalia Gimelshein [Thu, 21 Feb 2019 22:35:20 +0000 (14:35 -0800)]

fix double backward for half softmax/logsoftmax (#17330)

Summary:

Fix for #17261. SsnL, do you have tests for it in your other PR? If not, I'll add them to this one. The example from #17261 no longer errors out (and the same holds for log_softmax).

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17330

Differential Revision:

D14171529

Pulled By: soumith

fbshipit-source-id:

ee925233feb1b44ef9f1d757db59ca3601aadef2

Christian Puhrsch [Thu, 21 Feb 2019 22:31:24 +0000 (14:31 -0800)]

Revisit some native functions to increase number of jit matches (#17340)

Summary:

Adds about 30 matches due to new functions / misuse of double.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17340

Differential Revision:

D14161109

Pulled By: cpuhrsch

fbshipit-source-id:

bb3333446b32551f7469206509b480db290f28ee

Mikhail Zolotukhin [Thu, 21 Feb 2019 22:18:21 +0000 (14:18 -0800)]

Add Value::isValidName method. (#17372)

Summary:

The method will be used in IRParser and in NetDef converter.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17372

Differential Revision:

D14172494

Pulled By: ZolotukhinM

fbshipit-source-id:

96cae8422bc73c3c2eb27524f44ec1ee8cae92f3

Bharat123Rox [Thu, 21 Feb 2019 22:06:24 +0000 (14:06 -0800)]

Fix #17218 by updating documentation (#17258)

Summary:

Fix Issue #17218 by updating the corresponding documentation in [BCEWithLogitsLoss](https://pytorch.org/docs/stable/nn.html#torch.nn.BCEWithLogitsLoss)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17258

Differential Revision:

D14157336

Pulled By: ezyang

fbshipit-source-id:

fb474d866464faeaae560ab58214cccaa8630f08

Soumith Chintala [Thu, 21 Feb 2019 21:37:00 +0000 (13:37 -0800)]

fix lint (#17366)

Summary:

fix lint

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17366

Differential Revision:

D14171702

Pulled By: soumith

fbshipit-source-id:

5d8ecfac442e93b11bf4095f9977fd3302d033eb

Nikolay Korovaiko [Thu, 21 Feb 2019 20:35:23 +0000 (12:35 -0800)]

switch to Operation in register_prim_ops.cpp (#17183)

Summary:

This PR switches from `OperationCreator` to `Operation` to simplify the logic.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17183

Differential Revision:

D14169829

Pulled By: Krovatkin

fbshipit-source-id:

27f40a30c92e29651cea23f08b5b1f13d7eced8c

Karl Ostmo [Thu, 21 Feb 2019 19:38:28 +0000 (11:38 -0800)]

Use standard docker image for XLA build

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/17287

Differential Revision:

D14169689

Pulled By: kostmo

fbshipit-source-id:

24e255be23936542093008ed51d2c061b2924993

Gregory Chanan [Thu, 21 Feb 2019 19:00:05 +0000 (11:00 -0800)]

Modernize test_sparse. (#17324)

Summary:

Our sparse tests still almost exclusively use legacy constructors. This means you can't, for example, easily test scalars (because the legacy constructors don't allow them), and not surprisingly, many operations are broken with sparse scalars.

Note: this doesn't address the SparseTensor constructor itself, because there is a separate incompatibility there that I will address in a follow-on commit, namely, that torch.sparse.FloatTensor() is supported, but torch.sparse_coo_tensor() is not (because the size is ambiguous).

The follow-on PR will explicitly set the size for sparse tensor constructors and add a test for the legacy behavior, so we don't lose it.

Included in this PR are changes to the constituent sparse tensor pieces (indices, values):

1) IndexTensor becomes index_tensor

2) ValueTensor becomes value_tensor if it is a data-based construction, else value_empty.

3) Small changes around using the legacy tensor type directly, e.g. torch.FloatTensor.dtype exists, but torch.tensor isn't a type.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/17324

Differential Revision:

D14159270

Pulled By: gchanan

fbshipit-source-id:

71ee63e1ea6a4bc98f50be41d138c9c72f5ca651