Thomas J. Fan [Mon, 30 Aug 2021 22:03:40 +0000 (15:03 -0700)]

BUG Fixes regression for nllloss gradcheck (#64203)

Summary:

Fixes https://github.com/pytorch/pytorch/issues/64163

This PR includes the fix and the opinfo from https://github.com/pytorch/pytorch/pull/63854/ for non-regression testing.

cc albanD mruberry jbschlosser

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64203

Reviewed By: albanD

Differential Revision:

D30647522

Pulled By: jbschlosser

fbshipit-source-id:

2974d299763505908fa93532aca2bd5d5b71f2e9

Ivan Yashchuk [Mon, 30 Aug 2021 22:03:15 +0000 (15:03 -0700)]

Enable Half, BFloat16, and Complex dtypes for coo-coo sparse matmul [CUDA] (#59980)

Summary:

This PR enables Half, BFloat16, ComplexFloat, and ComplexDouble support for matrix-matrix multiplication of COO sparse matrices.

The change is applied only to CUDA 11+ builds.

`cusparseSpGEMM` also supports `CUDA_C_16F` (complex float16) and `CUDA_C_16BF` (complex bfloat16). PyTorch also supports the complex float16 dtype (`ScalarType::ComplexHalf`), but there is no convenient dispatch, so this dtype is omitted in this PR.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/59980

Reviewed By: ngimel

Differential Revision:

D29699456

Pulled By: cpuhrsch

fbshipit-source-id:

407ae53392acb2f92396a62a57cbaeb0fe6e950b

Alban Desmaison [Mon, 30 Aug 2021 21:56:35 +0000 (14:56 -0700)]

Revert D30561459: Fix bytes_written and bytes_read

Test Plan: revert-hammer

Differential Revision:

D30561459 (https://github.com/pytorch/pytorch/commit/e98173ff3423247c597e21c923c8f47470ef07ab)

Original commit changeset:

976fa5167097

fbshipit-source-id:

43f4c234ca400820fe6db5b4f37a25e14dc4b0dd

Alban Desmaison [Mon, 30 Aug 2021 21:46:50 +0000 (14:46 -0700)]

Back out "Added reference tests to ReductionOpInfo" (#64183)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64183

Original commit changeset:

6a1f82ac2819

Test Plan: CI

Reviewed By: soulitzer

Differential Revision:

D30639835

fbshipit-source-id:

e238043c6fbd0453317a9ed219e348298f98aaca

Jerry Zhang [Mon, 30 Aug 2021 21:21:39 +0000 (14:21 -0700)]

[quant][graphmode][fx] Add reference quantized conv module (#63828)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63828

Added a reference quantized conv module for the custom backend flow. The reference quantized module will have the following code:

```
w(float) -- quant - dequant \
x(float) ------------- F.conv2d ---
```

In the full model, we will see

```
w(float) -- quant - *dequant \
x -- quant --- *dequant -- *F.conv2d --- *quant - dequant
```

and the backend should be able to fuse the ops marked with `*` into a quantized conv op.
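To make the reference pattern concrete, here is a minimal pure-Python sketch of what it computes, assuming per-tensor affine quantization of the weight into an int8 range and using a 1-D convolution as a stand-in for `F.conv2d`. The helpers (`quantize`, `dequantize`, `conv1d`, `reference_quantized_conv1d`) are hypothetical and are not PyTorch APIs.

```python
from typing import List


def quantize(values: List[float], scale: float, zero_point: int,
             qmin: int = -128, qmax: int = 127) -> List[int]:
    # Per-tensor affine quantization: q = clamp(round(v / scale) + zero_point)
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]


def dequantize(q: List[int], scale: float, zero_point: int) -> List[float]:
    # Map the integers back to floats: v = (q - zero_point) * scale
    return [(x - zero_point) * scale for x in q]


def conv1d(x: List[float], w: List[float]) -> List[float]:
    # "Valid" 1-D cross-correlation, the operation conv layers compute
    k = len(w)
    return [sum(x[i + j] * w[j] for j in range(k)) for i in range(len(x) - k + 1)]


def reference_quantized_conv1d(x: List[float], w: List[float],
                               w_scale: float, w_zero_point: int) -> List[float]:
    # The weight goes through quant -> dequant while the input stays float,
    # mirroring the "w(float) -- quant - dequant" branch of the diagram.
    w_dq = dequantize(quantize(w, w_scale, w_zero_point), w_scale, w_zero_point)
    return conv1d(x, w_dq)


print(reference_quantized_conv1d([1.0, 2.0, 3.0, 4.0], [0.5, -1.0, 1.5], 0.5, 0))
# [3.0, 4.0]
```

Because the weight only passes through quant and dequant, the output equals a float convolution with the rounded weight; a backend is then free to replace the starred ops with a single integer kernel.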

Test Plan:

python test/test_quantization.py TestQuantizeFx.test_conv_linear_reference

Imported from OSS

Reviewed By: vkuzo

Differential Revision:

D30504749

fbshipit-source-id:

e1d8c43a0e0d6d9ea2375b8ca59a9c0f455514fb

Daya Khudia [Mon, 30 Aug 2021 20:58:47 +0000 (13:58 -0700)]

Back out "[JIT] Add aten::slice optimization"

Summary:

Original commit changeset:

d12ee39f6828

build-break

overriding_review_checks_triggers_an_audit_and_retroactive_review

Oncall Short Name: dskhudia

Test Plan: Local run succeeds

Differential Revision:

D30633990

fbshipit-source-id:

91cf7cc0ad7e47d919347c2a1527688e062e0c62

Eli Uriegas [Mon, 30 Aug 2021 20:55:19 +0000 (13:55 -0700)]

.github: Adding configuration for backwards_compat (#64204)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64204

Adds backwards_compat to our existing test matrix for GitHub Actions

Signed-off-by: Eli Uriegas <eliuriegas@fb.com>

cc ezyang seemethere malfet walterddr lg20987 pytorch/pytorch-dev-infra

Test Plan: Imported from OSS

Reviewed By: malfet

Differential Revision:

D30646764

Pulled By: seemethere

fbshipit-source-id:

f0da6027e29fab03aff058cb13466fae5dcf3678

Eli Uriegas [Mon, 30 Aug 2021 20:55:19 +0000 (13:55 -0700)]

.github: Adding configuration for docs_test (#64201)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64201

Adds docs_test to our existing test matrix for GitHub Actions

Signed-off-by: Eli Uriegas <eliuriegas@fb.com>

cc ezyang seemethere malfet walterddr lg20987 pytorch/pytorch-dev-infra

Test Plan: Imported from OSS

Reviewed By: malfet

Differential Revision:

D30646765

Pulled By: seemethere

fbshipit-source-id:

946adae01ff1f1f7ebe626e408e161b77b19a011

Will Constable [Mon, 30 Aug 2021 20:29:51 +0000 (13:29 -0700)]

Make name() part of IMethod interface (#63995)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63995

JIT methods already have name() in their interface, and Py methods have names in their implementation. I'm adding this for a particular case where someone tried to use name() on a JIT method that we're replacing with an IMethod.

Test Plan: add case to imethod API test

Reviewed By: suo

Differential Revision:

D30559401

fbshipit-source-id:

76236721f5cd9a9d9d488ddba12bfdd01d679a2c

Nikita Shulga [Mon, 30 Aug 2021 20:26:00 +0000 (13:26 -0700)]

Fix type annotation in tools/nightly.py (#64202)

Summary:

`tempfile.TemporaryDirectory` is generic only in Python 3.9 and above.

Work around this by wrapping the type annotation in quotes.
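A minimal sketch of the workaround, using a hypothetical helper `make_scratch_dir` (this is not the actual code in `tools/nightly.py`):

```python
import os
import tempfile


# Unquoted, `tempfile.TemporaryDirectory[str]` is evaluated when the function
# is defined, which raises TypeError on Python < 3.9 because the class is not
# subscriptable there. As a string, the annotation is not evaluated at runtime,
# but type checkers still understand it.
def make_scratch_dir() -> "tempfile.TemporaryDirectory[str]":
    return tempfile.TemporaryDirectory()


with make_scratch_dir() as path:
    print(os.path.isdir(path))  # True
```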

Fixes https://github.com/pytorch/pytorch/issues/64017

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64202

Reviewed By: janeyx99

Differential Revision:

D30644215

Pulled By: malfet

fbshipit-source-id:

3c16240b9fa899bd4d572c1732a7d87d3dd0fbd5

lezcano [Mon, 30 Aug 2021 20:10:23 +0000 (13:10 -0700)]

Implements the orthogonal parametrization (#62089)

Summary:

Implements an orthogonal / unitary parametrisation.

It passes the tests, and I have trained a couple of models with this implementation, so I believe it should be somewhat correct. That said, the implementation is very subtle. I'm tagging nikitaved and IvanYashchuk as reviewers in case they have comments or see some room for optimisation of the code, in particular of the `forward` function.
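To illustrate the general idea behind such a parametrisation, here is a pure-Python sketch of the Cayley map, one classical way to turn an unconstrained square matrix into an orthogonal one. It is an illustration under that assumption, not the PR's implementation (which works on tensors and is considerably more subtle); all helper names are hypothetical.

```python
from typing import List

Matrix = List[List[float]]


def identity(n: int) -> Matrix:
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]


def skew(x: Matrix) -> Matrix:
    # A = X - X^T is skew-symmetric for any square X
    n = len(x)
    return [[x[i][j] - x[j][i] for j in range(n)] for i in range(n)]


def solve(a: Matrix, b: Matrix) -> Matrix:
    # Solve a @ X = b by Gauss-Jordan elimination with partial pivoting
    n = len(a)
    m = [row_a[:] + row_b[:] for row_a, row_b in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        p = m[col][col]
        m[col] = [v / p for v in m[col]]
        for r in range(n):
            if r != col:
                factor = m[r][col]
                m[r] = [vr - factor * vc for vr, vc in zip(m[r], m[col])]
    return [row[n:] for row in m]


def cayley(x: Matrix) -> Matrix:
    # Q = (I + A)^{-1} (I - A) is orthogonal whenever A is skew-symmetric;
    # I + A is always invertible because A's eigenvalues are purely imaginary.
    a = skew(x)
    n = len(a)
    eye = identity(n)
    i_plus = [[eye[i][j] + a[i][j] for j in range(n)] for i in range(n)]
    i_minus = [[eye[i][j] - a[i][j] for j in range(n)] for i in range(n)]
    return solve(i_plus, i_minus)


def gram(q: Matrix) -> Matrix:
    # Q^T Q, which should be the identity for an orthogonal Q
    n = len(q)
    return [[sum(q[k][i] * q[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


X = [[0.3, -1.2, 0.5], [2.0, 0.1, -0.7], [0.4, 0.9, 1.5]]
print(gram(cayley(X)))  # approximately the 3x3 identity
```

Any unconstrained `X` maps to an orthogonal `Q`, so an optimizer can update `X` freely while the module always sees an orthogonal matrix.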

Fixes https://github.com/pytorch/pytorch/issues/42243

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62089

Reviewed By: ezyang

Differential Revision:

D30639063

Pulled By: albanD

fbshipit-source-id:

988664f333ac7a75ce71ba44c8d77b986dff2fe6

Tanvir Zaman [Mon, 30 Aug 2021 19:56:15 +0000 (12:56 -0700)]

Fix bytes_written and bytes_read (#64040)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64040

In many places, operator cost inference functions use sizeof(x.data_type()). Since data_type() returns a 32-bit integer from [this enum](https://www.internalfb.com/code/fbsource/[15e7ffe4073cf08c61077c7c24a4839504b964a2]/fbcode/caffe2/caffe2/proto/caffe2.proto?lines=20), sizeof(x.data_type()) is always 4, no matter what the actual data type of x is. Big thanks to Jack Langman for pointing out this specific bug.

Instead, we now use the size in bytes of the actual data type.
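The bug pattern can be sketched in Python, assuming a hypothetical `DataType` enum and per-element size table (the enum values below are illustrative, not the real caffe2.proto values): the enum tag itself always occupies 4 bytes, regardless of which element type it names.

```python
import ctypes
from enum import IntEnum


class DataType(IntEnum):
    # Hypothetical subset of a dtype enum stored as a 32-bit integer
    FLOAT = 1
    INT64 = 2
    FLOAT16 = 3
    DOUBLE = 4
    UINT8 = 5


# Bytes per element for each dtype: what the cost functions should use
ITEMSIZE = {
    DataType.FLOAT: 4,
    DataType.INT64: 8,
    DataType.FLOAT16: 2,
    DataType.DOUBLE: 8,
    DataType.UINT8: 1,
}


def buggy_bytes(numel: int, dtype: DataType) -> int:
    # Mirrors sizeof(x.data_type()): the size of the 32-bit tag, so dtype is ignored
    return numel * ctypes.sizeof(ctypes.c_int32)


def fixed_bytes(numel: int, dtype: DataType) -> int:
    # Uses the size of the element type that the tag refers to
    return numel * ITEMSIZE[dtype]


print(buggy_bytes(1000, DataType.DOUBLE), fixed_bytes(1000, DataType.DOUBLE))  # 4000 8000
```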

Test Plan:

Added unit tests BatchMatMulMemCostTest:

buck test //caffe2/caffe2/fb/fbgemm:batch_matmul_op_test -- BatchMatMulMemCostTest

Extended existing unit test test_columnwise_concat for different data types:

buck test //caffe2/caffe2/python/operator_test:concat_op_cost_test -- test_columnwise_concat

Differential Revision:

D30561459

fbshipit-source-id:

976fa5167097a35af548498480001aafd7851d93

Philip Meier [Mon, 30 Aug 2021 19:28:39 +0000 (12:28 -0700)]

remove componentwise comparison of complex values in torch.testing.assert_close (#63841)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63841

Closes #61906.

cc ezyang gchanan

Test Plan: Imported from OSS

Reviewed By: ezyang

Differential Revision:

D30633526

Pulled By: mruberry

fbshipit-source-id:

ddb5d61838cd1e12d19d0093799e827344382cdc

Philip Meier [Mon, 30 Aug 2021 19:28:39 +0000 (12:28 -0700)]

remove componentwise comparison of complex values in TestCase.assertEqual (#63572)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63572

Addresses #61906. The issue will be fully fixed later in the stack, once `torch.testing.assert_close` gets the same treatment.
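As a rough illustration of why componentwise checks can misbehave, here is a sketch that applies the closeness criterion `|actual - expected| <= atol + rtol * |expected|` once to the complex difference. This is an assumption about the shape of the new check, not a copy of the `assertEqual` or `assert_close` implementation.

```python
def componentwise_close(actual: complex, expected: complex,
                        rtol: float, atol: float) -> bool:
    # Old style: real and imaginary parts checked independently
    return (abs(actual.real - expected.real) <= atol + rtol * abs(expected.real)
            and abs(actual.imag - expected.imag) <= atol + rtol * abs(expected.imag))


def magnitude_close(actual: complex, expected: complex,
                    rtol: float, atol: float) -> bool:
    # Treat the complex number as a whole: compare |actual - expected| to |expected|
    return abs(actual - expected) <= atol + rtol * abs(expected)


# The imaginary error is tiny relative to the value, but expected.imag is
# exactly zero, so the componentwise check has no tolerance left for it.
print(componentwise_close(1 + 1e-6j, 1 + 0j, rtol=1e-5, atol=0.0))  # False
print(magnitude_close(1 + 1e-6j, 1 + 0j, rtol=1e-5, atol=0.0))      # True
```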

cc ezyang gchanan

Test Plan: Imported from OSS

Reviewed By: ezyang

Differential Revision:

D30633527

Pulled By: mruberry

fbshipit-source-id:

c2002a4998a7a75cb2ab83f87190bde43a9d4f7c

Xiang Gao [Mon, 30 Aug 2021 19:25:29 +0000 (12:25 -0700)]

Bring back old algorithm for sorting on small number of segments (#64127)

Summary:

Fixes https://github.com/pytorch/pytorch/issues/63456

The code was copy-pasted from the previous commit without modification.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64127

Reviewed By: mruberry

Differential Revision:

D30632090

Pulled By: ngimel

fbshipit-source-id:

58bbdd9b0423f01d4e65e2ec925ad9a3f88efc9b

Kushashwa Ravi Shrimali [Mon, 30 Aug 2021 19:16:23 +0000 (12:16 -0700)]

[Doc] `make_tensor` to `torch.testing` module (#63925)

Summary:

This PR aims to add `make_tensor` to the `torch.testing` module in PyTorch docs.

TODOs:

* [x] Add examples

cc: pmeier mruberry brianjo

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63925

Reviewed By: ngimel

Differential Revision:

D30633487

Pulled By: mruberry

fbshipit-source-id:

8e5a1f880c6ece5925b4039fee8122bd739538af

Peter Bell [Mon, 30 Aug 2021 19:14:09 +0000 (12:14 -0700)]

Fix bad use of channels last kernel in sync batch norm backward (#64100)

Summary:

Fixes https://github.com/pytorch/pytorch/issues/64039

There are two distinct problems here.

1. If `grad_output` is channels last but `input` is not, then `input` would be read as if it were channels last, so the kernel would read the wrong values.

2. `use_channels_last_kernels` doesn't guarantee that `suggest_memory_format` will actually return channels last, so use `empty_like` instead so that the strides always match.
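The first problem can be illustrated with a small pure-Python sketch (hypothetical helpers, not the CUDA kernel): if a buffer laid out contiguously (NCHW) is indexed with channels-last strides, the same logical index lands on a different element.

```python
from typing import Sequence, Tuple


def contiguous_strides(shape: Sequence[int]) -> Tuple[int, ...]:
    # NCHW row-major strides: (C*H*W, H*W, W, 1)
    _, c, h, w = shape
    return (c * h * w, h * w, w, 1)


def channels_last_strides(shape: Sequence[int]) -> Tuple[int, ...]:
    # NHWC memory order expressed with NCHW indices: (H*W*C, 1, W*C, C)
    _, c, h, w = shape
    return (h * w * c, 1, w * c, c)


def read(buffer: Sequence[float], index: Sequence[int],
         strides: Sequence[int]) -> float:
    # Element lookup in a flat buffer: offset = sum(index_i * stride_i)
    return buffer[sum(i * s for i, s in zip(index, strides))]


shape = (1, 2, 2, 2)
buf = list(range(8))  # stored contiguously (NCHW)
print(read(buf, (0, 1, 0, 0), contiguous_strides(shape)))     # 4, correct
print(read(buf, (0, 1, 0, 0), channels_last_strides(shape)))  # 1, wrong element
```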

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64100

Reviewed By: mruberry

Differential Revision:

D30622127

Pulled By: ngimel

fbshipit-source-id:

e28cc57215596817f1432fcdd6c49d69acfedcf2

Zhengxu Chen [Mon, 30 Aug 2021 18:46:14 +0000 (11:46 -0700)]

[jit] Make operation call accept Stack& instead of Stack* (#63414)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63414

A raw pointer was misused here, even though the stack is never null.

ghstack-source-id:

136938318

Test Plan:

compiles.

Imported from OSS

Reviewed By: ejguan

Differential Revision:

D30375410

fbshipit-source-id:

9d65b620bb76d90d886c800f54308520095d58ee

= [Mon, 30 Aug 2021 16:43:25 +0000 (09:43 -0700)]

Improve performance of index_select by avoiding item (#63008)

Summary:

Partially fixes https://github.com/pytorch/pytorch/issues/61788

From a CUDA perspective: calling item already pulls all of the tensor's contents onto the host, albeit one element at a time, which incurs very expensive memory transfers. With this change, we copy everything at once.

From a CPU perspective: item has a lot of overhead as a native function in comparison to simply using a pointer.

Overall, there are still lots of performance gains to be had, but this is a small change that should take us into a more usable landscape. This doesn't land a separate benchmark; I postulate one isn't necessary to decide on the benefit of this change (we'll also see whether it shows up indirectly), but it is still a good follow-up item.
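The cost difference can be sketched with a hypothetical `FakeDeviceTensor` that counts device-to-host transfers. It models only the number of transfers, not real CUDA behaviour or the actual `index_select` kernel.

```python
from typing import List


class FakeDeviceTensor:
    """Hypothetical device tensor that only counts device-to-host transfers."""

    def __init__(self, data: List[int]) -> None:
        self._data = list(data)
        self.transfers = 0

    def __len__(self) -> int:
        # Shape metadata lives on the host, so no transfer is needed
        return len(self._data)

    def item(self, i: int) -> int:
        # Per-element read: one transfer per call
        self.transfers += 1
        return self._data[i]

    def to_host(self) -> List[int]:
        # Bulk read: one transfer for the whole tensor
        self.transfers += 1
        return list(self._data)


def select_per_element(src: List[float], index: FakeDeviceTensor) -> List[float]:
    return [src[index.item(i)] for i in range(len(index))]


def select_bulk(src: List[float], index: FakeDeviceTensor) -> List[float]:
    host_index = index.to_host()  # copy once, then read through a plain host list
    return [src[i] for i in host_index]


src = [10.0, 20.0, 30.0]
a, b = FakeDeviceTensor([2, 0, 2, 1]), FakeDeviceTensor([2, 0, 2, 1])
print(select_per_element(src, a), a.transfers)  # [30.0, 10.0, 30.0, 20.0] 4
print(select_bulk(src, b), b.transfers)         # [30.0, 10.0, 30.0, 20.0] 1
```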

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63008

Reviewed By: zou3519

Differential Revision:

D30211160

Pulled By: cpuhrsch

fbshipit-source-id:

70b752be5df51afc66b5aa1c77135d1205520cdd

Harut Movsisyan [Mon, 30 Aug 2021 16:36:46 +0000 (09:36 -0700)]

[Static Runtime] aten::cat out version when it is not being replaced by prim::VarConcat (#64157)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64157

The UseVariadicCat optimization is not applied to aten::cat if the list input to the op cannot be moved to the position before the op (https://fburl.com/diffusion/l6kweimu). For these cases, we need an out variant for Static Runtime (SR).

Test Plan:

Confirm out variant is called:

```

> buck run //caffe2/benchmarks/static_runtime:static_runtime_cpptest -- --v=1

```

Reviewed By: d1jang

Differential Revision:

D30598574

fbshipit-source-id:

74cfa8291dc8b5df4aef58adfb1ab2a16f10d90a

Scott Wolchok [Mon, 30 Aug 2021 16:34:24 +0000 (09:34 -0700)]

[PyTorch] Fix missing move in unpickler (#63974)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63974

Saw some time spent in this copy during model loading, and there's no reason not to use a move here.

ghstack-source-id:

136760979

Test Plan: Re-profile model loading on devserver; IValue copy ctor time has gone down

Reviewed By: dhruvbird

Differential Revision:

D30548923

fbshipit-source-id:

42000f2e18582762b43353cca10ae094833de3b3

Scott Wolchok [Mon, 30 Aug 2021 16:34:24 +0000 (09:34 -0700)]

[PyTorch] Reduce copies/refcount bumps in BytecodeDeserializer::parseMethods (#63961)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63961

Saw a report that this function was slow and was doing unexplained vector copies. First pass to remove a bunch of copying.

ghstack-source-id:

136760976

Test Plan:

Pixel 3

before: https://our.intern.facebook.com/intern/aibench/details/461850118893980

after: https://www.internalfb.com/intern/aibench/details/48965886029524

MilanBoard failed to return data from simpleperf

Reviewed By: dhruvbird

Differential Revision:

D30544551

fbshipit-source-id:

0e2b5471a10c0803d52c923e6fb5625f5542b99d

Raghavan Raman [Mon, 30 Aug 2021 16:26:20 +0000 (09:26 -0700)]

[MicroBench] Added a micro benchmark for a signed log1p kernel. (#64032)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/64032
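The commit doesn't define the kernel. Assuming "signed log1p" means the common transform `sign(x) * log1p(|x|)`, a scalar reference in Python looks like this (the benchmark itself measures a tensor kernel, not this function):

```python
import math


def signed_log1p(x: float) -> float:
    # sign(x) * log(1 + |x|): odd, monotonic, and well-behaved near zero
    return math.copysign(math.log1p(abs(x)), x)


print(signed_log1p(math.e - 1))  # approximately 1.0
```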

Test Plan: Imported from OSS

Reviewed By: ezyang

Differential Revision:

D30579198

Pulled By: navahgar

fbshipit-source-id:

a53d68225fba768b26491d14b535f8f2dcf50c0e

Facebook Community Bot [Mon, 30 Aug 2021 15:27:36 +0000 (08:27 -0700)]

Automated submodule update: FBGEMM (#64149)

Summary:

This is an automated pull request to update the first-party submodule for [pytorch/FBGEMM](https://github.com/pytorch/FBGEMM).

New submodule commit: https://github.com/pytorch/FBGEMM/commit/f6dfed87a10ed5729bce83e98788e437a94cbda0

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64149

Test Plan: Ensure that CI jobs succeed on GitHub before landing.

Reviewed By: jspark1105

Differential Revision:

D30632209

fbshipit-source-id:

aa1cebaf50169c3a93dbcb994fa47e29d6b6a0d7

Vitaly Fedyunin [Mon, 30 Aug 2021 14:54:11 +0000 (07:54 -0700)]

[DataLoader2] Adding Messages, Protocols, Loop wrappers (#63882)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/63882

Test Plan: Imported from OSS

Reviewed By: ejguan

Differential Revision:

D30627452

Pulled By: VitalyFedyunin

fbshipit-source-id:

561ea2df07f3572e04401171946154024126387b

Rong Rong (AI Infra) [Mon, 30 Aug 2021 14:49:27 +0000 (07:49 -0700)]

remove one more distributed test (#64108)

Summary:

Follow-up to https://github.com/pytorch/pytorch/issues/62896: one more place where we should remove a distributed test.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64108

Reviewed By: janeyx99, soulitzer

Differential Revision:

D30614062

Pulled By: walterddr

fbshipit-source-id:

6576415dc2d481d65419da19c5aa0afc37a86cff

Raghavan Raman [Mon, 30 Aug 2021 11:38:00 +0000 (04:38 -0700)]

[nnc] Updated internal asserts to include more detailed error messages (#64118)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/64118

Test Plan: Imported from OSS

Reviewed By: ZolotukhinM

Differential Revision:

D30616944

Pulled By: navahgar

fbshipit-source-id:

35289696cc0e7faa01599304243b86f0febc6daf

Raghavan Raman [Mon, 30 Aug 2021 11:38:00 +0000 (04:38 -0700)]

[nnc] Fixed warning due to implicit parameter conversion (#64117)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/64117

Test Plan: Imported from OSS

Reviewed By: ZolotukhinM

Differential Revision:

D30616945

Pulled By: navahgar

fbshipit-source-id:

eaf69232ac4a684ab5f97a54a514971655f86ef3

Thomas J. Fan [Mon, 30 Aug 2021 06:31:42 +0000 (23:31 -0700)]

ENH Adds label_smoothing to cross entropy loss (#63122)

Summary:

Fixes https://github.com/pytorch/pytorch/issues/7455

Partially resolves pytorch/vision#4281
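Label smoothing replaces the one-hot target with a mixture of the one-hot vector and a uniform distribution over the classes. A minimal single-sample sketch in pure Python, assuming that mixing rule with weight `label_smoothing` (this is not PyTorch's implementation and ignores `weight`, `ignore_index`, and reduction):

```python
import math
from typing import List


def cross_entropy(logits: List[float], target: int,
                  label_smoothing: float = 0.0) -> float:
    # log-softmax via the log-sum-exp trick for numerical stability
    m = max(logits)
    log_z = m + math.log(sum(math.exp(z - m) for z in logits))
    log_probs = [z - log_z for z in logits]
    num_classes = len(logits)
    # Smoothed target: (1 - eps) on the true class plus eps / C on every class
    loss = 0.0
    for k, lp in enumerate(log_probs):
        q = label_smoothing / num_classes
        if k == target:
            q += 1.0 - label_smoothing
        loss -= q * lp
    return loss


print(cross_entropy([2.0, 1.0, 0.1], 0))       # plain cross entropy
print(cross_entropy([2.0, 1.0, 0.1], 0, 0.1))  # slightly larger: smoothing penalizes overconfidence
```

With `label_smoothing=0.0` this reduces to the usual `-log p[target]`.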

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63122

Reviewed By: iramazanli

Differential Revision:

D30586076

Pulled By: jbschlosser

fbshipit-source-id:

06afc3aa1f8b9edb07fe9ed68c58968ad1926924

Harut Movsisyan [Mon, 30 Aug 2021 03:58:45 +0000 (20:58 -0700)]

[Static Runtime] Out version for torch.linalg.norm (#64070)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/64070

Test Plan:

Confirm out variant is called for both versions:

```

> buck run //caffe2/benchmarks/static_runtime:static_runtime_cpptest -- --v=1

```

Reviewed By: d1jang

Differential Revision:

D30595816

fbshipit-source-id:

e88d88d4fc698774e83a98efce66b8fa4e281563

Zafar Takhirov [Mon, 30 Aug 2021 03:28:32 +0000 (20:28 -0700)]

[quant] AO migration of the `quantize.py` (#64086)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64086

The AO team is migrating the existing `torch.quantization` to `torch.ao.quantization`. We are doing it one file at a time to make sure that the internal call sites are updated properly.

This migrates `quantize.py` from `torch.quantization` to `torch.ao.quantization`.

For now, both locations are supported. Eventually, `torch.quantization` will be deprecated.
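Supporting both locations during a migration like this typically comes down to the old module re-exporting the new module's objects. A self-contained sketch of that pattern using in-memory modules (the module names are hypothetical, and this is not the actual contents of either `quantize.py`):

```python
import sys
import types

# Hypothetical stand-ins for the new and old module paths.
new_location = types.ModuleType("new_location_quantize")


def prepare(model: str) -> str:
    return f"prepared({model})"


new_location.prepare = prepare
sys.modules["new_location_quantize"] = new_location

# The old module keeps working by re-exporting the very same objects,
# so both import paths resolve to one implementation.
old_location = types.ModuleType("old_location_quantize")
old_location.prepare = new_location.prepare
sys.modules["old_location_quantize"] = old_location

from new_location_quantize import prepare as new_prepare  # noqa: E402
from old_location_quantize import prepare as old_prepare  # noqa: E402

print(new_prepare is old_prepare)  # True
```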

Test Plan: `buck test mode/opt //caffe2/test:quantization`

Reviewed By: jerryzh168, raghuramank100

Differential Revision:

D30055886

fbshipit-source-id:

8ef7470f9fa640c0042bef5bb843e7a05ecd0b9f

Mike Ruberry [Mon, 30 Aug 2021 02:37:06 +0000 (19:37 -0700)]

Removes beta warning from the special module documentation (#64148)

Summary:

Updates documentation per feature review. torch.special is now stable.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64148

Reviewed By: ngimel

Differential Revision:

D30632049

Pulled By: mruberry

fbshipit-source-id:

8f6148ec7737e7b3a90644eeca23eb217eda513d

mingfeima [Mon, 30 Aug 2021 01:35:37 +0000 (18:35 -0700)]

add channels last support for MaxUnpool2d (#49984)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/49984

Test Plan: Imported from OSS

Reviewed By: ejguan

Differential Revision:

D26007051

Pulled By: VitalyFedyunin

fbshipit-source-id:

6c54751ade4092e03c1651aaa60380f7d6e92f6b

Nikita Shulga [Sun, 29 Aug 2021 22:49:59 +0000 (15:49 -0700)]

Revert D30620966: [pytorch][PR] Move Parallel[Native|TBB] to GHA

Test Plan: revert-hammer

Differential Revision:

D30620966 (https://github.com/pytorch/pytorch/commit/223f886032978487099da4f54e86e9e0549cde0c)

Original commit changeset:

9a23e4b3e168

fbshipit-source-id:

b9248d377b9a7b850dfb3f10f3350fbc9855acfe

Tugsbayasgalan (Tugsuu) Manlaibaatar [Sun, 29 Aug 2021 21:17:54 +0000 (14:17 -0700)]

[DOC] Add doc for maybe_wrap_dim (#63161)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/63161

Test Plan: Imported from OSS

Reviewed By: pbelevich

Differential Revision:

D30629451

Pulled By: tugsbayasgalan

fbshipit-source-id:

b03f030f197e10393a8ff223b240d23c30858028

Garrett Cramer [Sun, 29 Aug 2021 18:33:48 +0000 (11:33 -0700)]

add support for sending cpu sparse tensors over rpc (#62794)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62794

This PR updates JIT serialization to support pickling sparse COO tensors.

This PR also updates message.cpp to support sparse COO tensors.

A bug was filed a few years ago https://github.com/pytorch/pytorch/issues/30807.

I tested the fix by adding sparse tensor tests to rpc_test.py and dist_autograd_test.py.
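Conceptually, a sparse COO tensor is fully described by its coordinates, its values, and its dense size, so serializing it amounts to serializing those three pieces. A pure-Python sketch under that assumption (the class is hypothetical; the PR itself works on `torch.Tensor` inside JIT pickling and the RPC message code):

```python
import pickle
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class SparseCoo2d:
    """Hypothetical 2-D COO container: coordinates + values + dense shape."""
    indices: List[Tuple[int, int]]  # (row, col) of each stored element
    values: List[float]
    size: Tuple[int, int]

    def to_dense(self) -> List[List[float]]:
        rows, cols = self.size
        dense = [[0.0] * cols for _ in range(rows)]
        for (r, c), v in zip(self.indices, self.values):
            dense[r][c] += v  # duplicate coordinates are summed
        return dense


t = SparseCoo2d(indices=[(0, 1), (2, 0)], values=[3.0, -1.0], size=(3, 2))
# Serialize the three components (what would travel over the wire) ...
payload = pickle.dumps((t.indices, t.values, t.size))
# ... and rebuild the sparse tensor on the receiving side.
restored = SparseCoo2d(*pickle.loads(payload))
print(restored.to_dense())  # [[0.0, 3.0], [0.0, 0.0], [-1.0, 0.0]]
```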

cc pietern mrshenli pritamdamania87 zhaojuanmao satgera rohan-varma gqchen aazzolini osalpekar jiayisuse agolynski SciPioneer H-Huang mrzzd cbalioglu gcramer23 gmagogsfm

Test Plan: Imported from OSS

Reviewed By: soulitzer

Differential Revision:

D30608848

Pulled By: gcramer23

fbshipit-source-id:

629ba8e4a3d8365875a709c9b87447c7a71204fb

Tugsbayasgalan (Tugsuu) Manlaibaatar [Sun, 29 Aug 2021 17:19:56 +0000 (10:19 -0700)]

[DOC] improve docstring for Optimizer.state_dict (#63153)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63153

Fixes: https://github.com/pytorch/pytorch/issues/60121

Test Plan: Imported from OSS

Reviewed By: pbelevich

Differential Revision:

D30629462

Pulled By: tugsbayasgalan

fbshipit-source-id:

a9160e02ac53bb1a6219879747d73aae9ebe4d2f

Facebook Community Bot [Sun, 29 Aug 2021 16:56:34 +0000 (09:56 -0700)]

Automated submodule update: FBGEMM (#64141)

Summary:

This is an automated pull request to update the first-party submodule for [pytorch/FBGEMM](https://github.com/pytorch/FBGEMM).

New submodule commit: https://github.com/pytorch/FBGEMM/commit/9939bac9defab4d18fb7fdded7e1a76c0c2b49b4

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64141

Test Plan: Ensure that CI jobs succeed on GitHub before landing.

Reviewed By: jspark1105

Differential Revision:

D30629417

fbshipit-source-id:

1b1ad3d4caff925f798b86b358ab193554c9b8e0

Bert Maher [Sun, 29 Aug 2021 02:57:10 +0000 (19:57 -0700)]

[nnc] Make 64-bit dimensions work (#64077)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64077

We were assuming kernel dimensions fit in 32 bits (the old fuser made this assumption too), but we should be able to support 64-bit dimensions.

ghstack-source-id:

136933272

Test Plan: unit tests; new IR level test with huge sizes

Reviewed By: ZolotukhinM

Differential Revision:

D30596689

fbshipit-source-id:

23b7e393a2ebaecb0c391a6b1f0c4b05a98bcc94

Bert Maher [Sun, 29 Aug 2021 02:57:10 +0000 (19:57 -0700)]

Parse int64 sizes/strides (#64076)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64076

We were parsing sizes into int32s, so if you had a tensor with more than 2^31 - 1 elements, you couldn't represent it.
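Why 32 bits are not enough can be shown with Python's `ctypes` fixed-width integers, which (like C++) do not check for overflow: the 4e9 size from the test plan wraps to a negative number in a signed 32-bit integer but is preserved in a 64-bit one. This is an illustration only, not the IR parser.

```python
import ctypes

size = 4_000_000_000  # the size used in the test plan (4e9)

# Storing it in a 32-bit signed integer silently wraps around
as_int32 = ctypes.c_int32(size).value
# A 64-bit signed integer holds it exactly
as_int64 = ctypes.c_int64(size).value

print(as_int32)  # -294967296
print(as_int64)  # 4000000000
```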

ghstack-source-id:

136933273

Test Plan: parseIR with size of 4e9

Reviewed By: ZolotukhinM

Differential Revision:

D30521116

fbshipit-source-id:

1e28e462cba52d648e0e2acb4e234d86aae25a3e

Bert Maher [Sun, 29 Aug 2021 02:18:10 +0000 (19:18 -0700)]

[nnc] Fix batchnorm implementation (#64112)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64112

Fixes #64062

Test Plan: Imported from OSS

Reviewed By: zhxchen17

Differential Revision:

D30622897

Pulled By: bertmaher

fbshipit-source-id:

7d7c6131aa786e61fa1d0a517288396a0bdb1d22

Ilqar Ramazanli [Sat, 28 Aug 2021 22:54:53 +0000 (15:54 -0700)]

To add RMSProp algorithm documentation (#63721)

Summary:

It has been discussed before that adding descriptions of optimization algorithms to the PyTorch core documentation may result in a nice optimization research tutorial. The following tracking issue lists all the necessary algorithms, with links to the originally published papers: https://github.com/pytorch/pytorch/issues/63236.

In this PR, we add a description of RMSProp to the documentation. For more details, we refer to the lecture slides at https://www.cs.toronto.edu/~tijmen/csc321/slides/lecture_slides_lec6.pdf

<img width="464" alt="RMSProp" src="https://user-images.githubusercontent.com/73658284/131179226-3fb6fe5a-5301-4948-afbe-f38bf57f24ff.png">
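For readers who prefer code to notation, here is a scalar sketch of the basic RMSProp update (no momentum, centering, or weight decay), with `lr`, `alpha`, and `eps` defaults chosen to match `torch.optim.RMSprop`. It is an illustration, not the `torch.optim` implementation.

```python
import math


def rmsprop_step(param: float, grad: float, square_avg: float,
                 lr: float = 0.01, alpha: float = 0.99, eps: float = 1e-8):
    # Exponential moving average of squared gradients
    square_avg = alpha * square_avg + (1 - alpha) * grad * grad
    # Divide the step by the root of that average (plus eps for stability)
    param = param - lr * grad / (math.sqrt(square_avg) + eps)
    return param, square_avg


# Minimize f(x) = x^2, whose gradient is 2x
x, v = 1.0, 0.0
for _ in range(1000):
    x, v = rmsprop_step(x, 2 * x, v)
print(x)  # close to 0
```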

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63721

Reviewed By: albanD

Differential Revision:

D30612426

Pulled By: iramazanli

fbshipit-source-id:

c3ac630a9658d1282866b53c86023ac10cf95398

Facebook Community Bot [Sat, 28 Aug 2021 18:50:49 +0000 (11:50 -0700)]

Automated submodule update: FBGEMM (#64129)

Summary:

This is an automated pull request to update the first-party submodule for [pytorch/FBGEMM](https://github.com/pytorch/FBGEMM).

New submodule commit: https://github.com/pytorch/FBGEMM/commit/f14e79481460a7c0dedf452a258072231cb343e6

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64129

Test Plan: Ensure that CI jobs succeed on GitHub before landing.

Reviewed By: jspark1105

Differential Revision:

D30621549

fbshipit-source-id:

34c109e75c96a261bf370f7a06dbb8b9004860ab

Nikita Shulga [Sat, 28 Aug 2021 18:46:40 +0000 (11:46 -0700)]

Move Parallel[Native|TBB] to GHA (#64123)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/64123

Reviewed By: driazati

Differential Revision:

D30620966

Pulled By: malfet

fbshipit-source-id:

9a23e4b3e16870f77bf18df4370cd468603d592d

Tugsbayasgalan (Tugsuu) Manlaibaatar [Sat, 28 Aug 2021 18:44:58 +0000 (11:44 -0700)]

Enhancement for smart serialization for out schemas (#63096)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/63096

Test Plan: Imported from OSS

Reviewed By: gmagogsfm

Differential Revision:

D30415255

Pulled By: tugsbayasgalan

fbshipit-source-id:

eb40440a3b46258394d035479f5fc4a4baa12bcc

Priya Ramani [Sat, 28 Aug 2021 05:50:20 +0000 (22:50 -0700)]

[Light] Fix error message (#64010)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64010

Fix typos in an error message

Test Plan:

Error message before fix:

Lite Interpreter verson number does not match. The model version must be between 3 and 5But the model version is 6

Error message after fix:

Lite Interpreter version number does not match. The model version must be between 3 and 5 but the model version is 6

Reviewed By: larryliu0820

Differential Revision:

D30568367

fbshipit-source-id:

205f3278ee8dcf38579dbb828580a9e986ccacc1

Jerry Zhang [Sat, 28 Aug 2021 03:58:20 +0000 (20:58 -0700)]

[quant][graphmode][fx] Add reference quantized linear module (#63627)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63627

Added a reference quantized linear module for the custom backend flow. The reference quantized module will have the following code:

```
w(float) -- quant - dequant \
x(float) ------------- F.linear ---
```

In the full model, we will see

```
w(float) -- quant - *dequant \
x -- quant --- *dequant -- *F.linear --- *quant - dequant
```

and the backend should be able to fuse the ops marked with `*` into a quantized linear op.

Test Plan:

python test/test_quantization.py TestQuantizeFx.test_conv_linear_reference

Imported from OSS

Reviewed By: vkuzo

Differential Revision:

D30504750

fbshipit-source-id:

5729921745c2b6a0fb344efc3689f3b170e89500

Yuchen Huang [Sat, 28 Aug 2021 01:57:22 +0000 (18:57 -0700)]

[iOS][GPU] Consolidate array and non-array kernel for hardswish (#63369)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63369

ghstack-source-id:

136918152

(Note: this ignores all push blocking failures!)

Test Plan:

- `buck test pp-macos`

- Op tests in PyTorchPlayground app

- Run mobilenetv3 test

https://pxl.cl/1Ncls

Reviewed By: xta0

Differential Revision:

D30354454

fbshipit-source-id:

88bf4f8b5871e63170161b3f3e44f99b8a3086c6

Ilqar Ramazanli [Sat, 28 Aug 2021 01:51:09 +0000 (18:51 -0700)]

To add Nesterov Adam algorithm description to documentation (#63793)

Summary:

It has been discussed before that adding descriptions of optimization algorithms to the PyTorch core documentation may result in a nice optimization research tutorial. The following tracking issue lists all the necessary algorithms, with links to the originally published papers: https://github.com/pytorch/pytorch/issues/63236.

In this PR, we add a description of the Nesterov Adam (NAdam) algorithm to the documentation. For more details, we refer to the paper https://openreview.net/forum?id=OM0jvwB8jIp57ZJjtNEZ

<img width="439" alt="NAdam" src="https://user-images.githubusercontent.com/73658284/131185124-e81b2edf-33d9-4a9d-a7bf-f7e5eea47d7c.png">
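A scalar sketch of a simplified Nesterov-accelerated Adam step, for intuition only: it omits any momentum-decay schedule, so it should not be expected to match `torch.optim.NAdam` numerically. The Nesterov part is the look-ahead that mixes the bias-corrected momentum with the current gradient.

```python
import math


def nadam_step(param: float, grad: float, m: float, n: float, t: int,
               lr: float = 0.002, beta1: float = 0.9, beta2: float = 0.999,
               eps: float = 1e-8):
    # Simplified NAdam (no momentum-decay schedule); t starts at 1
    m = beta1 * m + (1 - beta1) * grad          # first moment
    n = beta2 * n + (1 - beta2) * grad * grad   # second moment
    # Nesterov look-ahead: next step's momentum plus the current gradient
    m_hat = (beta1 * m / (1 - beta1 ** (t + 1))
             + (1 - beta1) * grad / (1 - beta1 ** t))
    n_hat = n / (1 - beta2 ** t)
    param = param - lr * m_hat / (math.sqrt(n_hat) + eps)
    return param, m, n


# Minimize f(x) = x^2, whose gradient is 2x
x, m, n = 1.0, 0.0, 0.0
for t in range(1, 2001):
    x, m, n = nadam_step(x, 2 * x, m, n, t)
print(x)  # close to 0
```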

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63793

Reviewed By: NivekT

Differential Revision:

D30617057

Pulled By: iramazanli

fbshipit-source-id:

cd2054b0d9b6883878be74576e86e307f32f1435

Mike Iovine [Sat, 28 Aug 2021 00:37:05 +0000 (17:37 -0700)]

[Static Runtime] Optimize memory planner initialization (#64101)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64101

Checking `getOutOfPlaceOperation(n)` is a very expensive operation, especially in multithreaded environments, due to a lock acquisition when the NNC cache is queried. This slows down the memory planner initialization time, and by extension, the latency for the first static runtime inference.

There are two optimizations in this diff:

* Cache the result of `p_node->has_out_variant()` to avoid the call to `getOutOfPlaceOperation`. This speeds up calls to `canReuseInputOutputs`, which in turn speeds up `isOptimizableContainerType`

* Precompute all `isOptimizableContainerType` results during static runtime initialization to avoid a pass over all of each node's inputs.
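The first optimization is ordinary memoization: pay for the expensive, lock-protected lookup once per node at construction time, then answer from a cached field. A sketch with hypothetical `Registry` and `ProcessedNode` classes (the real code is C++ in Static Runtime):

```python
from typing import List


class Registry:
    """Hypothetical stand-in for an expensive, lock-protected lookup."""

    def __init__(self, ops_with_out_variant: List[str]) -> None:
        self._ops = set(ops_with_out_variant)
        self.lookups = 0

    def has_out_variant(self, op: str) -> bool:
        self.lookups += 1  # imagine a lock acquisition on every call
        return op in self._ops


class ProcessedNode:
    def __init__(self, op: str, registry: Registry) -> None:
        self.op = op
        # Query once at construction and cache the answer
        self._has_out_variant = registry.has_out_variant(op)

    def has_out_variant(self) -> bool:
        return self._has_out_variant  # no registry access


registry = Registry(["aten::add", "aten::cat"])
nodes = [ProcessedNode(op, registry) for op in ["aten::add", "aten::relu", "aten::cat"]]
for _ in range(100):  # e.g. repeated queries while planning memory
    flags = [node.has_out_variant() for node in nodes]
print(flags, registry.lookups)  # [True, False, True] 3
```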

Test Plan: All unit tests pass: `buck test caffe2/benchmarks/static_runtime/...`

Reviewed By: movefast1990

Differential Revision:

D30595579

fbshipit-source-id:

70aaa7af9589c739c672788bf662f711731864f2

Mikhail Zolotukhin [Fri, 27 Aug 2021 23:15:55 +0000 (16:15 -0700)]

[TensorExpr] Update tutorial. (#64109)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/64109

Test Plan: Imported from OSS

Reviewed By: bertmaher

Differential Revision:

D30614050

Pulled By: ZolotukhinM

fbshipit-source-id:

e8f9bd9ef2483e6eafbc0bd5394d311cd694c7b2

Eli Uriegas [Fri, 27 Aug 2021 23:02:49 +0000 (16:02 -0700)]

.github: Add cpp_docs job to current gcc5 workflow (#64044)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64044

Adds the cpp_docs job to the current workflow. Also modifies the scripts surrounding the docs build so that they can be driven by environment variables with sane defaults, rather than requiring passed arguments.

Ideally, this should not break the current jobs running in CircleCI, but those should eventually be turned off anyway.

Coincides with work from:

* https://github.com/seemethere/upload-artifact-s3/pull/1

* https://github.com/seemethere/upload-artifact-s3/pull/2

Signed-off-by: Eli Uriegas <eliuriegas@fb.com>

cc ezyang seemethere malfet walterddr lg20987 pytorch/pytorch-dev-infra

Test Plan: Imported from OSS

Reviewed By: malfet

Differential Revision:

D30610010

Pulled By: seemethere

fbshipit-source-id:

f67adeb1bd422bb9e24e0f1ec0098cf9c648f283

soulitzer [Fri, 27 Aug 2021 21:59:08 +0000 (14:59 -0700)]

Update codegen to use boxed kernel (#63459)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63459

- Replaces the usual registration when `requires_derivative` is True (i.e., we still need a grad_fn) but `fn.info` is `None` (TODO: maybe also make sure the number of differentiable inputs is > 0, to match `requires_derivative`).

- Adds some (temporary?) fixes to some sparse functions. See: https://github.com/pytorch/pytorch/issues/63549

- Does not yet remove the codegen that generates the NotImplemented node (though that should only be one line), because some ops listed under `RESET_GRAD_ACCUMULATOR` have an extra function call. We would need to make this list of ops available to C++, which would mean either codegenning a list of strings or moving `RESET_GRAD_ACCUMULATOR` to C++. We could do this in a future PR if necessary.

Test Plan: Imported from OSS

Reviewed By: heitorschueroff

Differential Revision:

D30518571

Pulled By: soulitzer

fbshipit-source-id:

99a35cbced46292d1b4e51594ae4d534c2caf8b6

soulitzer [Fri, 27 Aug 2021 21:59:08 +0000 (14:59 -0700)]

Add autograd not implemented boxed fallback (#63458)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63458

See description and discussion from https://github.com/pytorch/pytorch/pull/62450

Test Plan: Imported from OSS

Reviewed By: heitorschueroff

Differential Revision:

D30518572

Pulled By: soulitzer

fbshipit-source-id:

3b1504d49abb84560ae17077f0dec335749c9882

Jessica Choi [Fri, 27 Aug 2021 21:46:31 +0000 (14:46 -0700)]

Removing references to ProcessGroupAgent in comments (#64051)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64051

cc pietern mrshenli pritamdamania87 zhaojuanmao satgera rohan-varma gqchen aazzolini osalpekar jiayisuse agolynski SciPioneer H-Huang mrzzd cbalioglu gcramer23

Test Plan: Imported from OSS

Reviewed By: mrshenli

Differential Revision:

D30587076

Pulled By: jaceyca

fbshipit-source-id:

414cb95faad0b4da0eaf2956c0668af057f93574

Erjia Guan [Fri, 27 Aug 2021 21:15:23 +0000 (14:15 -0700)]

Add README to datapipes (#63982)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63982

Adds a README to `datapipes` for developers. It can replace https://github.com/pytorch/pytorch/blob/master/torch/utils/data/datapipes_tutorial_dev_loaders.ipynb

After this PR lands, the README.md will be added to the PyTorch Wiki.

Test Plan: Imported from OSS

Reviewed By: soulitzer

Differential Revision:

D30554198

Pulled By: ejguan

fbshipit-source-id:

6091aae8ef915c7c1f00fbf45619c86c9558d308

Vincent Phan [Fri, 27 Aug 2021 20:51:38 +0000 (13:51 -0700)]

Implement leaky relu op

Summary: Implemented leaky relu op as per: https://www.internalfb.com/tasks/?t=97492679

Test Plan:

buck build -c ndk.custom_libcxx=false -c pt.enable_qpl=0 //xplat/caffe2:pt_vulkan_api_test_binAndroid\#android-arm64 --show-output

adb push buck-out/gen/xplat/caffe2/pt_vulkan_api_test_binAndroid\#android-arm64 /data/local/tmp/vulkan_api_test

adb shell "/data/local/tmp/vulkan_api_test"

all tests pass, including new ones

Reviewed By: SS-JIA

Differential Revision:

D30186225

fbshipit-source-id:

fdb1f8f7b3a28b5504581822185c0475dcd53a3e

Patrick Hu [Fri, 27 Aug 2021 20:37:38 +0000 (13:37 -0700)]

[FX] Validate data type of target on Node Construction (#64050)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/64050

Test Plan: Imported from OSS

Reviewed By: jamesr66a

Differential Revision:

D30585535

Pulled By: yqhu

fbshipit-source-id:

96778a87e75f510b4ef42f0e5cf76b35b7b2f331

Ivan Yashchuk [Fri, 27 Aug 2021 20:21:04 +0000 (13:21 -0700)]

Sparse CUDA: rename files *.cu -> *.cpp (#63894)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63894

This PR introduces a few code structure changes. There is no need to use the .cu extension for pure C++ code without CUDA, so `s_addmm_out_csr_sparse_dense_cuda_worker` is moved from the .cu file to a separate .cpp file.

cc nikitaved pearu cpuhrsch IvanYashchuk ngimel

Test Plan: Imported from OSS

Reviewed By: malfet

Differential Revision:

D30548771

Pulled By: cpuhrsch

fbshipit-source-id:

6f12d36e7e506d2fdbd57ef33eb73192177cd904

Scott Wolchok [Fri, 27 Aug 2021 19:55:26 +0000 (12:55 -0700)]

[PyTorch] Reduce code size of register_prim_ops.cpp (#61494)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61494

Creating a constexpr array and then looping over it is much cheaper than emitting a function call per item.

ghstack-source-id:

136639302

Test Plan:

fitsships

Buildsizebot some mobile apps to check size impact.

Reviewed By: dhruvbird, iseeyuan

Differential Revision:

D29646977

fbshipit-source-id:

6144999f6acfc4e5dcd659845859702051344d88

Marjan Fariborz [Fri, 27 Aug 2021 19:45:01 +0000 (12:45 -0700)]

Adding alltoall_single collective to collective quantization API (#63154)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63154

The collective quantization API now supports alltoall, alltoall_single, and allscatter. The test is also included.

ghstack-source-id:

136856877

Test Plan: buck test mode/dev-nosan //caffe2/test/distributed/algorithms/quantization:DistQuantizationTests_nccl -- test_all_to_all_single_bfp16

Reviewed By: wanchaol

Differential Revision:

D30255251

fbshipit-source-id:

856f4fa12de104689a03a0c8dc9e3ecfd41cad29

albanD [Fri, 27 Aug 2021 18:53:27 +0000 (11:53 -0700)]

New TLS to disable forward mode AD (#63117)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/63117

Test Plan: Imported from OSS

Reviewed By: ngimel

Differential Revision:

D30388097

Pulled By: albanD

fbshipit-source-id:

f1bc777064645db1ff848bdd64af95bffb530984

Karen Zhou [Fri, 27 Aug 2021 18:51:09 +0000 (11:51 -0700)]

[pruner] add README to repo (#64099)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64099

Adds a README for the pruner in OSS.

ghstack-source-id:

136867516

Test Plan: should not affect behavior

Reviewed By: z-a-f

Differential Revision:

D30608045

fbshipit-source-id:

3e9899a853395b2e91e8a69a5d2ca5f3c2acc646

mrshenli [Fri, 27 Aug 2021 18:28:31 +0000 (11:28 -0700)]

Improve `distributed.get_rank()` API docstring (#63296)

Summary:

See discussion in https://pytorch.slack.com/archives/CBHSWPNM7/p1628792389008600

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63296

Reviewed By: cbalioglu

Differential Revision:

D30332042

Pulled By: mrshenli

fbshipit-source-id:

3a642fda2e106fd35b67709ed2adb60e408854c2

Joel Schlosser [Fri, 27 Aug 2021 18:28:03 +0000 (11:28 -0700)]

Modules note v2 (#63963)

Summary:

This PR expands the [note on modules](https://pytorch.org/docs/stable/notes/modules.html) with additional info for 1.10.

It adds the following:

* Examples of using hooks

* Examples of using apply()

* Examples for ParameterList / ParameterDict

* register_parameter() / register_buffer() usage

* Discussion of train() / eval() modes

* Distributed training overview / links

* TorchScript overview / links

* Quantization overview / links

* FX overview / links

* Parametrization overview / link to tutorial

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63963

Reviewed By: albanD

Differential Revision:

D30606604

Pulled By: jbschlosser

fbshipit-source-id:

c1030b19162bcb5fe7364bcdc981a2eb6d6e89b4

Tugsbayasgalan (Tugsuu) Manlaibaatar [Fri, 27 Aug 2021 18:18:52 +0000 (11:18 -0700)]

Detect out argument in the schema (#62755)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62755

After this change, whether an argument is an out argument can be checked by calling `is_out()`.
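To illustrate what such a check looks at, here is a hypothetical pure-Python sketch that scans an operator schema string for out arguments. It assumes an argument counts as an out argument when it is keyword-only (it follows `*`) and carries a write alias annotation such as `Tensor(a!)`; the helper names are illustrative and are not part of PyTorch.

```python
import re
from typing import List


def split_top_level(text: str) -> List[str]:
    """Split on commas that are not nested inside parentheses."""
    parts: List[str] = []
    depth = 0
    current: List[str] = []
    for ch in text:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
        if ch == "," and depth == 0:
            parts.append("".join(current).strip())
            current = []
        else:
            current.append(ch)
    tail = "".join(current).strip()
    if tail:
        parts.append(tail)
    return parts


def out_arguments(schema: str) -> List[str]:
    """Return the names of keyword-only arguments annotated as written, e.g. Tensor(a!)."""
    start = schema.index("(")
    depth = 0
    end = start
    for end in range(start, len(schema)):  # find the ')' that closes the argument list
        if schema[end] == "(":
            depth += 1
        elif schema[end] == ")":
            depth -= 1
            if depth == 0:
                break
    outs: List[str] = []
    kwarg_only = False
    for arg in split_top_level(schema[start + 1:end]):
        if arg == "*":
            kwarg_only = True  # everything after '*' is keyword-only
            continue
        type_part, name = arg.split("=", 1)[0].strip().rsplit(" ", 1)
        if kwarg_only and re.search(r"\([a-z]+!\)", type_part):
            outs.append(name)
    return outs


print(out_arguments("add.out(Tensor self, Tensor other, *, Scalar alpha=1, Tensor(a!) out) -> Tensor(a!)"))
```

An in-place schema such as `add_.Tensor(Tensor(a!) self, ...)` also has a write annotation, but on a positional argument, which is why the keyword-only condition matters in this sketch.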

Test Plan: Imported from OSS

Reviewed By: mruberry

Differential Revision:

D30415256

Pulled By: tugsbayasgalan

fbshipit-source-id:

b2e1fa46bab7c813aaede1f44149081ef2df566d

Don Jang [Fri, 27 Aug 2021 17:42:50 +0000 (10:42 -0700)]

[Static Runtime] Add out variant of quantized::embedding_bag_byte_prepack (#64081)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64081

This change adds an out variant of `quantized::embedding_bag_byte_prepack`.

Test Plan:

- Added `ShapeInferenceTest.QEmbeddingBagByteUnpack`.

- Observed

```

V0824 13:38:49.723708 1322143 impl.cpp:1394] Switch to out variant for node: %2 : Tensor = quantized::embedding_bag_byte_prepack(%input)

```

Reviewed By: hlu1

Differential Revision:

D30504216

fbshipit-source-id:

1d9d428e77a15bcc7da373d65e7ffabaf9c6caf2

BBuf [Fri, 27 Aug 2021 17:42:24 +0000 (10:42 -0700)]

fix resize bug (#61166)

Summary:

I think the original intention of this special case was for it to take effect only when align_corners=True (because then output_size = 1 makes the divisor 0), but it affects the align_corners=False case too. For example:

```python

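import numpy as np
import torch

# bilinear mode needs a floating-point input (int32 is not supported)
input = torch.tensor(
    np.arange(1, 5, dtype=np.float32).reshape((1, 1, 2, 2)))
m = torch.nn.Upsample(scale_factor=0.5, mode="bilinear")
of_out = m(input)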

```

The expected result is [[[[2.5]]]], but PyTorch returns [[[[1.0]]]], which differs from OpenCV and PIL. This PR fixes that.
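The 2.5 vs 1.0 discrepancy can be reproduced by hand. The pure-Python sketch below applies the half-pixel mapping used when align_corners=False, `src = (dst + 0.5) * scale - 0.5` with `scale = in_size / out_size`, clamped at 0, and then interpolates bilinearly. The `zero_scale_when_single` flag is a hypothetical knob that emulates the old behaviour, assuming (as the description suggests) that the old code forced the scale to 0 whenever output_size == 1.

```python
from typing import List


def source_index(dst: int, in_size: int, out_size: int, zero_scale_when_single: bool = False) -> float:
    """Map an output index to a fractional input index (align_corners=False)."""
    scale = in_size / out_size
    if zero_scale_when_single and out_size == 1:
        scale = 0.0  # old special case, intended only for align_corners=True
    return max((dst + 0.5) * scale - 0.5, 0.0)


def bilinear_to_1x1(img: List[List[float]], zero_scale_when_single: bool = False) -> float:
    """Downsample an H x W image to a single pixel with bilinear interpolation."""
    h, w = len(img), len(img[0])
    y = source_index(0, h, 1, zero_scale_when_single)
    x = source_index(0, w, 1, zero_scale_when_single)
    y0, x0 = int(y), int(x)
    y1, x1 = min(y0 + 1, h - 1), min(x0 + 1, w - 1)
    dy, dx = y - y0, x - x0
    top = img[y0][x0] * (1 - dx) + img[y0][x1] * dx
    bottom = img[y1][x0] * (1 - dx) + img[y1][x1] * dx
    return top * (1 - dy) + bottom * dy


image = [[1.0, 2.0], [3.0, 4.0]]
print(bilinear_to_1x1(image))                               # 2.5
print(bilinear_to_1x1(image, zero_scale_when_single=True))  # 1.0
```

With the correct scale of 2, the single output pixel samples the centre of the 2x2 input (source index 0.5 in both dimensions), which averages all four values; a zero scale pins it to the top-left element.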

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61166

Reviewed By: malfet

Differential Revision:

D30543178

Pulled By: heitorschueroff

fbshipit-source-id:

21a4035483981986b0ae4a401ef0efbc565ccaf1

Pierluigi Taddei [Fri, 27 Aug 2021 17:36:08 +0000 (10:36 -0700)]

[caffe2] fixes to allow stricter compilation flag (#64016)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64016

To make compilation stricter for some targets that depend on caffe2, we need to fix errors uncovered when raising such flags.

This change adds the required `override` specifiers to virtual destructors.

Test Plan: CI. Moreover, targets depending on caffe2 that use clang strict warnings now compile.

Reviewed By: kalman5

Differential Revision:

D30541714

fbshipit-source-id:

564af31b4a9df3536d7d6f43ad29e1d0c7040551

Heitor Schueroff [Fri, 27 Aug 2021 17:16:02 +0000 (10:16 -0700)]

Added reference tests to ReductionOpInfo (#62900)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/62900

Test Plan: Imported from OSS

Reviewed By: mruberry

Differential Revision:

D30408815

Pulled By: heitorschueroff

fbshipit-source-id:

6a1f82ac281920ff7405a42f46ccd796e60af9d6

Mike Iovine [Fri, 27 Aug 2021 17:10:48 +0000 (10:10 -0700)]

[JIT] Add aten::slice optimization (#63049)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63049

Given a graph produced from a function like this:

```
def foo():
    li = [1, 2, 3, 4, 5, 6]
    return li[0:2]
```

This pass produces a graph like this:

```
def foo():
    li = [1, 2]
    return li
```

These changes are mostly adapted from https://github.com/pytorch/pytorch/pull/62297/
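As a rough illustration of the rewrite above, this pure-Python sketch computes at compile time what slicing a constant list literal would produce at run time. It is a toy model, not the JIT peephole pass: it assumes the list is never mutated and that start, end, and step are compile-time constants, which is what makes the folding safe. The name `fold_list_slice` is hypothetical.

```python
from typing import List, Optional, Sequence, TypeVar

T = TypeVar("T")


def fold_list_slice(elems: Sequence[T], start: Optional[int], end: Optional[int],
                    step: Optional[int] = None) -> List[T]:
    """Return the literal that `elems[start:end:step]` would evaluate to.

    slice.indices() applies Python's own normalisation rules (None defaults,
    negative indices, clamping to the list length), so the folded result
    matches the runtime behaviour of slicing the list literal.
    """
    lo, hi, st = slice(start, end, step).indices(len(elems))
    return [elems[i] for i in range(lo, hi, st)]


# li = [1, 2, 3, 4, 5, 6]; li[0:2] can be replaced by the literal [1, 2]
print(fold_list_slice([1, 2, 3, 4, 5, 6], 0, 2))  # [1, 2]
```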

Test Plan: `buck test //caffe2/test:jit -- TestPeephole`

Reviewed By: eellison

Differential Revision:

D30231044

fbshipit-source-id:

d12ee39f68289a574f533041a5adb38b2f000dd5

Jonathan Chang [Fri, 27 Aug 2021 16:49:39 +0000 (09:49 -0700)]

Add doc for nn.MultiMarginLoss (shape, example) (#63760)

Summary:

Fixes https://github.com/pytorch/pytorch/issues/63747
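For readers of the new shape docs, this pure-Python sketch evaluates the multi-class hinge loss that `nn.MultiMarginLoss` documents: an input of shape (N, C), a target of shape (N,), `p` of 1 or 2, and the default mean reduction. It is an illustration rather than the PyTorch kernel, and it omits the optional `weight` argument for brevity.

```python
from typing import List


def multi_margin_loss(x: List[List[float]], y: List[int], p: int = 1, margin: float = 1.0) -> float:
    """Mean-reduced multi-class hinge loss; x is (N, C) scores, y is (N,) class indices."""
    if p not in (1, 2):
        raise ValueError("only p=1 and p=2 are supported")
    total = 0.0
    for scores, target in zip(x, y):
        num_classes = len(scores)
        per_sample = 0.0
        for i, score in enumerate(scores):
            if i == target:
                continue  # the target class does not contribute a margin term
            per_sample += max(0.0, margin - scores[target] + score) ** p
        total += per_sample / num_classes  # each sample's sum is divided by C
    return total / len(x)  # 'mean' reduction over the batch


print(multi_margin_loss([[0.1, 0.2, 0.4, 0.8]], [3]))  # approximately 0.325
```

Here the three non-target classes contribute margins of 0.3, 0.4 and 0.6, and their sum of 1.3 is divided by the 4 classes.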

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63760

Reviewed By: malfet

Differential Revision:

D30541581

Pulled By: jbschlosser

fbshipit-source-id:

99560641e614296645eb0e51999513f57dfcfa98

Peter Bell [Fri, 27 Aug 2021 16:37:10 +0000 (09:37 -0700)]

Refactor structured set_output in Register{DispatchKey}.cpp (#62188)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62188

These parts of the `set_output` code are identical for all operators in the kernel registration files, so this moves them from being copied into every class to two helper functions at the top of the file.

Test Plan: Imported from OSS

Reviewed By: soulitzer

Differential Revision:

D29962045

Pulled By: albanD

fbshipit-source-id:

753b8aac755f3c91b77ffa2c30a89ac91a84b7c4

Sergei Vorobev [Fri, 27 Aug 2021 16:31:36 +0000 (09:31 -0700)]

[bazel] GPU-support: add @local_config_cuda and @cuda (#63604)

Summary:

## Context

We take the first step toward GPU support in the Bazel build by adding the Bazel external workspaces `local_config_cuda` and `cuda`. The first has some hardcoded values and lists of files; the second provides a nicer, high-level wrapper that maps onto the targets PyTorch's Bazel build already expects, which are guarded with the `if_cuda` macro.

The prefix `local_config_` signifies that we are breaking Bazel's hermeticity philosophy by explicitly relying on the CUDA installation present on the machine.

## Testing

Note an important scenario unlocked by this change: compiling C++ code that depends on CUDA libraries (e.g. cuda.h).

Before:

```

sergei.vorobev@cs-sv7xn77uoy-gpu-1628706590:~/src/pytorch4$ bazelisk build --define=cuda=true //:c10

ERROR: /home/sergei.vorobev/src/pytorch4/tools/config/BUILD:12:1: no such package 'tools/toolchain': BUILD file not found in any of the following directories. Add a BUILD file to a directory to mark it as a package.

- /home/sergei.vorobev/src/pytorch4/tools/toolchain and referenced by '//tools/config:cuda_enabled_and_capable'

ERROR: While resolving configuration keys for //:c10: Analysis failed

ERROR: Analysis of target '//:c10' failed; build aborted: Analysis failed

INFO: Elapsed time: 0.259s

INFO: 0 processes.

FAILED: Build did NOT complete successfully (2 packages loaded, 2 targets configured)

```

After:

```

sergei.vorobev@cs-sv7xn77uoy-gpu-1628706590:~/src/pytorch4$ bazelisk build --define=cuda=true //:c10

INFO: Analyzed target //:c10 (6 packages loaded, 246 targets configured).

INFO: Found 1 target...

Target //:c10 up-to-date:

bazel-bin/libc10.lo

bazel-bin/libc10.so

INFO: Elapsed time: 0.617s, Critical Path: 0.04s

INFO: 0 processes.

INFO: Build completed successfully, 1 total action

```

The `//:c10` target is a good test case for this, because it has places where the [glob differs](https://github.com/pytorch/pytorch/blob/075024b9a34904ec3ecdab3704c3bcaa329bdfea/BUILD.bazel#L76-L81) depending on whether we compile for CUDA.

## What is out of scope of this PR

This PR is the first in a series providing comprehensive GPU Bazel build support. Namely, we don't tackle the [cu_library](https://github.com/pytorch/pytorch/blob/11a40ad915d4d3d8551588e303204810887fcf8d/tools/rules/cu.bzl#L2) implementation here; that would be a separate large chunk of work.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63604

Reviewed By: soulitzer

Differential Revision:

D30442083

Pulled By: malfet

fbshipit-source-id:

b2a8e4f7e5a25a69b960a82d9e36ba568eb64595

Hanton Yang [Fri, 27 Aug 2021 16:23:45 +0000 (09:23 -0700)]

[OSS] Enable Metal in PyTorch MacOS nightly builds (#63718)

Summary:

Build on https://github.com/pytorch/pytorch/pull/63825

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63718

Test Plan:

1. Add the `ci/binaries` label to the PR so that CI builds the nightly binaries.

2. Make sure the following CI jobs build with the `USE_PYTORCH_METAL_EXPORT` option set to `ON`:

```

ci/circleci: binary_macos_arm64_conda_3_8_cpu_nightly_build

ci/circleci: binary_macos_arm64_conda_3_9_cpu_nightly_build

ci/circleci: binary_macos_arm64_wheel_3_8_cpu_nightly_build

ci/circleci: binary_macos_arm64_wheel_3_9_cpu_nightly_build

ci/circleci: binary_macos_conda_3_6_cpu_nightly_build

ci/circleci: binary_macos_conda_3_7_cpu_nightly_build

ci/circleci: binary_macos_conda_3_8_cpu_nightly_build

ci/circleci: binary_macos_conda_3_9_cpu_nightly_build

ci/circleci: binary_macos_libtorch_3_7_cpu_nightly_build

ci/circleci: binary_macos_wheel_3_6_cpu_nightly_build

ci/circleci: binary_macos_wheel_3_7_cpu_nightly_build

ci/circleci: binary_macos_wheel_3_8_cpu_nightly_build

ci/circleci: binary_macos_wheel_3_9_cpu_nightly_build

```

3. Test the `conda` and `wheel` builds locally with the [HelloWorld-Metal](https://github.com/pytorch/ios-demo-app/tree/master/HelloWorld-Metal) demo, following [(Prototype) Use iOS GPU in PyTorch](https://pytorch.org/tutorials/prototype/ios_gpu_workflow.html)

(1) conda

```

conda install https://15667941-65600975-gh.circle-artifacts.com/0/Users/distiller/project/final_pkgs/pytorch-1.10.0.dev20210826-py3.8_0.tar.bz2

```

(2) wheel

```

pip3 install https://15598647-65600975-gh.circle-artifacts.com/0/Users/distiller/project/final_pkgs/torch-1.10.0.dev20210824-cp38-none-macosx_10_9_x86_64.whl

```

Reviewed By: xta0

Differential Revision:

D30593167

Pulled By: hanton

fbshipit-source-id:

471da204e94b29c11301c857c50501307a5f0785

Aswin Murali [Fri, 27 Aug 2021 16:02:22 +0000 (09:02 -0700)]

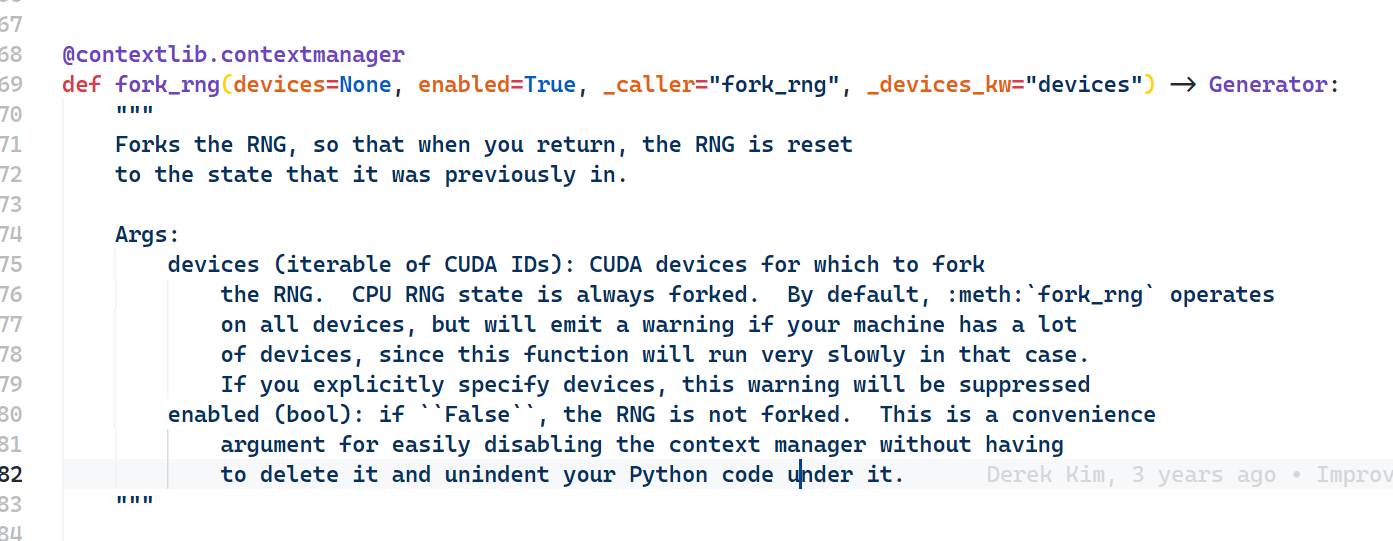

Adds return type annotation for fork_rng function (#63724)

Summary:

Fixes https://github.com/pytorch/pytorch/issues/63723

Since it is a generator function, the return type annotation should be `Generator`.
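Functions decorated with `contextlib.contextmanager`, such as `fork_rng`, are written as generators, so their return annotation describes a generator. The sketch below is a hypothetical analogue for Python's standard `random` module (the name `fork_python_rng` is illustrative, not a PyTorch API); `Generator[None, None, None]` states that it yields `None` and neither receives sent values nor returns a value.

```python
import contextlib
import random
from typing import Generator


@contextlib.contextmanager
def fork_python_rng() -> Generator[None, None, None]:
    """Run a block, then restore the `random` module's state."""
    state = random.getstate()
    try:
        yield
    finally:
        random.setstate(state)  # restore even if the block raised


random.seed(0)
with fork_python_rng():
    random.random()  # draws made inside the fork do not affect the outer stream
after_fork = random.random()
random.seed(0)
assert after_fork == random.random()
```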

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63724

Reviewed By: iramazanli

Differential Revision:

D30543098

Pulled By: heitorschueroff

fbshipit-source-id:

ebdd34749defe1e26c899146786a0357ab4b4b9b

gmagogsfm [Fri, 27 Aug 2021 15:49:54 +0000 (08:49 -0700)]

More robust check of whether a class is defined in torch (#64083)

Summary:

This prevents bugs for classes that:

1) are defined in a module whose name happens to start with `torch`, such as `torchvision`, or

2) are defined in torch but referenced through an import alias like `import torch as th`.
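The contrast between a prefix test and a stricter check can be sketched in a few lines. This is not the code from this PR; it assumes the check can rely on a class's `__module__` attribute, which records where the class was defined and is therefore unaffected by aliases like `import torch as th`. The function names and stand-in classes are hypothetical.

```python
def is_torch_class_naive(cls: type) -> bool:
    # Prefix test on the module name: also matches packages such as torchvision.
    return cls.__module__.startswith("torch")


def is_torch_class(cls: type) -> bool:
    # Compare the top-level package exactly; __module__ does not depend on
    # the name the caller imported torch under.
    return cls.__module__.split(".", 1)[0] == "torch"


# Stand-in classes with a chosen __module__, so the example does not need torch installed.
TorchLike = type("Linear", (), {"__module__": "torch.nn.modules.linear"})
VisionLike = type("ResNet", (), {"__module__": "torchvision.models.resnet"})

print(is_torch_class_naive(VisionLike), is_torch_class(VisionLike))  # True False
print(is_torch_class(TorchLike))  # True
```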

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64083

Reviewed By: soulitzer

Differential Revision:

D30598369

Pulled By: gmagogsfm

fbshipit-source-id:

9d3a7135737b2339c9bd32195e4e69a9c07549d4

Harut Movsisyan [Fri, 27 Aug 2021 10:03:32 +0000 (03:03 -0700)]

[Static Runtime] Out version for fmod (#64046)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/64046

Test Plan:

Confirm out variant is used:

```

> //caffe2/benchmarks/static_runtime:static_runtime_cpptest -- --v=1

V0826 23:31:30.321382 193428 impl.cpp:1395] Switch to out variant for node: %4 : Tensor = aten::fmod(%a.1, %b.1)

```

Reviewed By: mikeiovine

Differential Revision:

D30581228

fbshipit-source-id:

dfab9a16ff8afd40b29338037769f938f154bf74

Don Jang [Fri, 27 Aug 2021 09:43:22 +0000 (02:43 -0700)]

[Static Runtime] Manage temporary Tensors for aten::layer_norm (#64078)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64078

This change converts `aten::layer_norm -> output Tensor` to `static_runtime::layer_norm -> (output Tensor, tmp1 Tensor, tmp2 Tensor)` so that the `tmp1` and `tmp2` Tensors are managed by the static runtime.

Currently the out-variant of `aten::layer_norm` creates two temporary Tensors inside it:

```

at::Tensor mean = create_empty_from({M}, *X);

at::Tensor rstd = create_empty_from({M}, *X);

```

which the static runtime misses the opportunity to manage.

This change puts them into (unused) output Tensors of a new placeholder op, `static_runtime::layer_norm`, so that the static runtime can manage them, since as of now it chooses to manage only output tensors.
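The idea of surfacing `mean` and `rstd` as extra outputs can be shown with a small Python analogy: the function below writes the normalized rows and the per-row statistics into buffers the caller allocates, so whoever owns those buffers (here playing the role of the runtime) can reuse them across calls instead of the op allocating temporaries each time. The function name and signature are hypothetical, not the Static Runtime API, and the affine weight and bias are omitted.

```python
import math
from typing import List


def layer_norm_out(x: List[List[float]], eps: float, out: List[List[float]],
                   mean: List[float], rstd: List[float]) -> None:
    """Normalize each row of x (M x N) over its last dimension.

    out (M x N), mean (M) and rstd (M) are caller-owned buffers, standing in
    for the extra outputs that let the runtime manage these tensors.
    """
    for m, row in enumerate(x):
        n = len(row)
        mu = sum(row) / n
        var = sum((v - mu) ** 2 for v in row) / n  # biased variance, as layer norm uses
        mean[m] = mu
        rstd[m] = 1.0 / math.sqrt(var + eps)
        for j, v in enumerate(row):
            out[m][j] = (v - mu) * rstd[m]


# Buffers are allocated once by the "runtime" and reused for every call.
M, N = 2, 3
out = [[0.0] * N for _ in range(M)]
mean, rstd = [0.0] * M, [0.0] * M
layer_norm_out([[1.0, 2.0, 3.0], [2.0, 2.0, 2.0]], 1e-5, out, mean, rstd)
```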

Test Plan:

- Enhanced `StaticRuntime.LayerNorm` to ensure that `static_runtime::layer_norm` gets activated.

- Confirmed that the new op gets activated during testing:

```

V0825 12:51:50.017890 2265227 impl.cpp:1396] Switch to out variant for node: %8 : Tensor, %9 : Tensor, %10 : Tensor = static_runtime::layer_norm(%input.1, %normalized_shape.1, %4, %4, %5, %3)

```

Reviewed By: hlu1

Differential Revision:

D30486475

fbshipit-source-id:

5121c44ab58c2d8a954aa0bbd9dfeb7468347a2d

Hao Lu [Fri, 27 Aug 2021 08:39:14 +0000 (01:39 -0700)]

[Static Runtime] Use F14FastMap/F14FastSet (#63999)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63999

Uses folly::F14FastMap/F14FastSet instead of std::unordered_map/unordered_set in the Static Runtime code base. folly::F14FastMap/F14FastSet implement the same APIs as std::unordered_map/unordered_set but are faster. For details, see https://github.com/facebook/folly/blob/master/folly/container/F14.md

Reviewed By: d1jang

Differential Revision:

D30566149

fbshipit-source-id:

20a7fa2519e4dde96fb3fc61ef6c92bf6d759383

Ansha Yu [Fri, 27 Aug 2021 06:17:42 +0000 (23:17 -0700)]

[static runtime] port c2 argmin kernel (#63632)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63632

Local benchmarking with 1 input repeated for 10k iterations on the 290331537_4 local net. Reduces argmin runtime by about 80% and local net execution time by about 0.71-0.77 ms.

Before:

```

I0826 17:25:53.972786 1104614 PyTorchPredictorBenchLib.cpp:313] PyTorch run finished. Milliseconds per iter: 7.37599. Iters per second: 135.57

```

```

Static runtime ms per iter: 8.22086. Iters per second: 121.642

Time per node type:

4.13527 ms. 50.9157%. fb::sigrid_transforms_torch_bind (1 nodes, out variant)

0.868506 ms. 10.6935%. aten::argmin (1 nodes, out variant)

...

```

After:

```

I0826 17:17:54.165174 1064079 PyTorchPredictorBenchLib.cpp:313] PyTorch run finished. Milliseconds per iter: 6.66724. Iters per second: 149.987

```

```

Static runtime ms per iter: 7.68172. Iters per second: 130.179

Time per node type:

4.1452 ms. 54.0612%. fb::sigrid_transforms_torch_bind (1 nodes, out variant)

0.656778 ms. 8.56562%. fb::quantized_linear (8 nodes)

0.488229 ms. 6.36741%. static_runtime::to_copy (827 nodes, out variant)

0.372678 ms. 4.86042%. aten::argmin (1 nodes, out variant)

...Time per node type:

3.39387 ms. 53.5467%. fb::sigrid_transforms_torch_bind (1 nodes, out variant)

0.636216 ms. 10.0379%. fb::quantized_linear (8 nodes, out variant)

0.410535 ms. 6.47721%. fb::clip_ranges_to_gather_to_offsets (304 nodes, out variant)

0.212721 ms. 3.3562%. fb::clip_ranges_gather_sigrid_hash_precompute_v3 (157 nodes, out variant)

0.173736 ms. 2.74111%. aten::matmul (1 nodes, out variant)

0.150514 ms. 2.37474%. aten::argmin (1 nodes, out variant)

```

P447422384

Test Plan:

Test with local replayer sending traffic to `ansha_perf_test_0819.test`, and compare outputs to jit interpreter.

Start compute tier:

```

RUN_UUID=ansha_perf_test_0819.test.storage JOB_EXPIRE_TIME=864000 MODEL_ID=290331537_4 PREDICTOR_TAG= PREDICTOR_VERSION=405 PREDICTOR_TYPE=CPU ADDITIONAL_FLAGS="--enable_disagg_file_split=true --enable_adx=false --load_remote_file_locally=true --pytorch_predictor_static_runtime_whitelist_by_id=290331537" GFLAGS_CONFIG_PATH=sigrid/predictor/gflags/predictor_gflags_ads_perf_cpu_pyper SMC_TIER_NAME=sigrid.predictor.perf.ansha_per_test_0819.test.storage CLUSTER=tsp_rva ENTITLEMENT_NAME=ads_ranking_infra_test_t6 PREDICTOR_LOCAL_DIRECTORY= ICET_CONFIG_PATH= NNPI_COMPILATION_CONFIG_FILE= NUM_TASKS=1 NNPI_NUM_WORKERS=0 tw job start /data/users/ansha/fbsource/fbcode/tupperware/config/admarket/sigrid/predictor/predictor_perf_canary.tw

```

Start nnpi tier:

```

RUN_UUID=ansha_perf_test_0819.test JOB_EXPIRE_TIME=247200 MODEL_ID=290331537_4 PREDICTOR_TAG= PREDICTOR_VERSION=343 PREDICTOR_TYPE=NNPI_TWSHARED ADDITIONAL_FLAGS="--torch_glow_min_fusion_group_size=30 --pytorch_storage_tier_replayer_sr_connection_options=overall_timeout:1000000,processing_timeout:1000000 --predictor_storage_smc_tier=sigrid.predictor.perf.ansha_perf_test_0819.test.storage --pytorch_predictor_static_runtime_whitelist_by_id=290331537" GFLAGS_CONFIG_PATH=sigrid/predictor/gflags/predictor_gflags_ads_perf_glow_nnpi_pyper_v1 SMC_TIER_NAME=sigrid.predictor.perf.ansha_perf_test_0819.test CLUSTER=tsp_rva ENTITLEMENT_NAME=ads_ranking_infra_test_t17 PREDICTOR_LOCAL_DIRECTORY= ICET_CONFIG_PATH= NNPI_COMPILATION_CONFIG_FILE= NUM_TASKS=1 NNPI_NUM_WORKERS=0 tw job start /data/users/ansha/fbsource/fbcode/tupperware/config/admarket/sigrid/predictor/predictor_perf_canary.tw

```

```buck test caffe2/benchmarks/static_runtime:static_runtime_cpptest -- StaticRuntime.IndividualOps_Argmin --print-passing-details```

Compared outputs to the JIT interpreter to check that there are no differences greater than 1e-3 (with NNC on): https://www.internalfb.com/intern/diff/view-version/136824794/

Reviewed By: hlu1

Differential Revision:

D30445635

fbshipit-source-id:

048de8867ac72f764132295d1ebfa843cde2fa27

Supriya Rao [Fri, 27 Aug 2021 04:05:56 +0000 (21:05 -0700)]

[quant] Add support for linear_relu fusion for FP16 dynamic quant (#63826)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63826

Supports converting the intrinsic LinearReLU module to the quantized dynamic LinearReLU module.

Verifies that the support works for both linear module fusion and functional linear fusion.

Test Plan:

python test/test_quantization.py test_dynamic_with_fusion

Imported from OSS

Reviewed By: iramazanli

Differential Revision:

D30503513

fbshipit-source-id:

70446797e9670dfef7341cba2047183d6f88b70f

Supriya Rao [Fri, 27 Aug 2021 04:05:56 +0000 (21:05 -0700)]

[quant] Add op support for linear_relu_dynamic_fp16 (#63824)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63824

Add a fused operator implementation that will work with the quantization fusion APIs.

Once the FBGEMM FP16 kernel supports relu fusion natively, the added relu can be removed from the PyTorch operator.
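A rough pure-Python picture of the FP16 dynamic path with relu added in the operator: weights are rounded to half precision (via the standard `struct` module's `'e'` format), products are accumulated in full precision, and relu is applied as a separate step inside the same call when fusion is requested. This is an illustration under those assumptions, not the FBGEMM kernel or the PyTorch operator, and the function names are hypothetical.

```python
import struct
from typing import List


def to_fp16(value: float) -> float:
    """Round a float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", value))[0]


def linear_dynamic_fp16(x: List[float], weight: List[List[float]], bias: List[float],
                        fuse_relu: bool = False) -> List[float]:
    """y = x @ W.T + b with W stored in fp16, optionally followed by relu."""
    w16 = [[to_fp16(w) for w in row] for row in weight]  # in practice packed once, ahead of time
    out = [sum(xi * wi for xi, wi in zip(x, row)) + b  # accumulate in full precision
           for row, b in zip(w16, bias)]
    if fuse_relu:
        out = [max(v, 0.0) for v in out]  # relu added inside the same operator call
    return out


print(linear_dynamic_fp16([1.0, 2.0], [[0.5, -0.25], [1.0, 1.0]], [0.0, -5.0], fuse_relu=True))  # [0.0, 0.0]
```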

Test Plan:

python test/test_quantization.py

Imported from OSS

Reviewed By: heitorschueroff

Differential Revision:

D30503514

fbshipit-source-id:

6bf3bd53f47ffaa3f1d178eaad8cc980a7f5258a

Supriya Rao [Fri, 27 Aug 2021 04:05:56 +0000 (21:05 -0700)]

[quant] support linear_relu_dynamic for qnnpack backend (#63820)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63820

Adds support for calling the relu operator directly inside the operator when relu fusion is enabled.

Once QNNPACK natively supports relu fusion in linear_dynamic, this can be removed.

Test Plan:

python test/test_quantization.py TestDynamicQuantizedLinear.test_qlinear

Imported from OSS

Reviewed By: vkuzo

Differential Revision:

D30502813

fbshipit-source-id:

3352ee5f73e482b6d1941f389d720a461b84ba23

Supriya Rao [Fri, 27 Aug 2021 04:05:56 +0000 (21:05 -0700)]

[quant][fx] Add support for dynamic linear + relu fusion (INT8) (#63799)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63799

Adds a new module that the convert function can swap in for the nni.LinearReLU module.

Currently supports only INT8 (the FP16 op does not have relu fusion yet).

Fixes #55393
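As a hedged, pure-Python sketch of what a dynamically quantized INT8 linear + relu computes (not the FBGEMM or QNNPACK implementation): activation quantization parameters are chosen at run time from the observed min/max (the "dynamic" part), weights are assumed to be pre-quantized symmetrically to int8 with a zero point of 0, products are accumulated as integers, the result is dequantized, and relu is applied in the same call when fused. All names here are hypothetical.

```python
from typing import List, Tuple


def choose_qparams(values: List[float], qmin: int = 0, qmax: int = 255) -> Tuple[float, int]:
    """Asymmetric per-tensor uint8 parameters computed from the data at run time."""
    lo, hi = min(min(values), 0.0), max(max(values), 0.0)  # the range must contain 0
    scale = (hi - lo) / (qmax - qmin) or 1.0  # fall back to 1.0 for an all-zero input
    zero_point = int(round(qmin - lo / scale))
    return scale, max(qmin, min(qmax, zero_point))


def quantize(values: List[float], scale: float, zero_point: int,
             qmin: int = 0, qmax: int = 255) -> List[int]:
    return [max(qmin, min(qmax, int(round(v / scale)) + zero_point)) for v in values]


def linear_relu_dynamic(x: List[float], w_q: List[List[int]], w_scale: float,
                        bias: List[float], fuse_relu: bool = True) -> List[float]:
    """y = relu(x @ W.T + b) for one input row, with W stored as symmetric int8."""
    x_scale, x_zp = choose_qparams(x)
    x_q = quantize(x, x_scale, x_zp)
    out = []
    for w_row, b in zip(w_q, bias):
        acc = sum((xq - x_zp) * wq for xq, wq in zip(x_q, w_row))  # integer accumulation
        y = acc * x_scale * w_scale + b  # dequantize, then add the float bias
        out.append(max(y, 0.0) if fuse_relu else y)
    return out


# Float weights [[0.5, 0.25, -1.0], [-0.5, 1.0, 0.0]] quantized with scale 1/127.
w_q = [[64, 32, -127], [-64, 127, 0]]
print(linear_relu_dynamic([1.0, -2.0, 0.6], w_q, 1 / 127, [1.0, 0.2]))  # approximately [0.4, 0.0]
```

The float reference for this input is [0.4, -2.3] before relu, so the quantized result should stay within a small quantization error of it.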

Test Plan:

python test/test_quantization.py test_dynamic_fusion

Imported from OSS

Reviewed By: heitorschueroff

Differential Revision:

D30502812

fbshipit-source-id:

3668e4f001a0626d469e17ac323acf582ee28a51

Michael Suo [Fri, 27 Aug 2021 03:54:54 +0000 (20:54 -0700)]

[torch/deploy] add torch.distributed to build (#63918)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63918

Previously we were building with `USE_DISTRIBUTED` off, because c10d was built as a separate library for historical reasons. Since then, lw has merged the c10d build into libtorch, so this is fairly easy to turn on.

Differential Revision:

D30492442

**NOTE FOR REVIEWERS**: This PR has internal Facebook specific changes or comments, please review them on [Phabricator](https://our.intern.facebook.com/intern/diff/D30492442/)!


Test Plan: added a unit test

Reviewed By: wconstab

Pulled By: suo

fbshipit-source-id:

843b8fcf349a72a7f6fcbd1fcc8961268690fb8c

Can Balioglu [Fri, 27 Aug 2021 03:16:10 +0000 (20:16 -0700)]

Introduce the torchrun entrypoint (#64049)

Summary:

This PR introduces a new `torchrun` entrypoint that simply "points" to `python -m torch.distributed.run`. It is shorter and less error-prone to type and gives a nicer syntax than the rather cryptic `python -m ...` command line. Along with the new entrypoint, the documentation is updated so that places where `torch.distributed.run` is mentioned now use `torchrun`.
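For example, a launch such as `python -m torch.distributed.run --nproc_per_node=2 train.py` can equivalently be written `torchrun --nproc_per_node=2 train.py`. Inside the script, each worker can read the environment variables the launcher exports (`RANK`, `LOCAL_RANK`, `WORLD_SIZE`). The minimal sketch below does that, with defaults so it also runs as a single plain process; the `LaunchInfo` and `read_launch_info` names are hypothetical.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class LaunchInfo:
    rank: int        # global rank of this worker
    local_rank: int  # rank within this node, typically used to pick a device
    world_size: int  # total number of workers


def read_launch_info() -> LaunchInfo:
    # The launcher sets these for every worker it spawns; the defaults describe
    # a single process started without it.
    return LaunchInfo(
        rank=int(os.environ.get("RANK", "0")),
        local_rank=int(os.environ.get("LOCAL_RANK", "0")),
        world_size=int(os.environ.get("WORLD_SIZE", "1")),
    )


if __name__ == "__main__":
    print(read_launch_info())
```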

cc pietern mrshenli pritamdamania87 zhaojuanmao satgera rohan-varma gqchen aazzolini osalpekar jiayisuse agolynski SciPioneer H-Huang mrzzd cbalioglu gcramer23

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64049

Reviewed By: cbalioglu

Differential Revision:

D30584041

Pulled By: kiukchung

fbshipit-source-id:

d99db3b5d12e7bf9676bab70e680d4b88031ae2d

nikithamalgi [Fri, 27 Aug 2021 01:54:51 +0000 (18:54 -0700)]

Merge script and _script_pdt API (#62420)

Summary:

Merges the `torch.jit.script` and `torch.jit._script_pdt` APIs, so that profile-directed typing is available through the script API.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62420

Reviewed By: iramazanli

Differential Revision:

D30579015

Pulled By: nikithamalgifb

fbshipit-source-id:

99ba6839d235d61b2dd0144b466b2063a53ccece

Maksim Levental [Fri, 27 Aug 2021 00:59:59 +0000 (17:59 -0700)]

port glu to use structured kernel approach (#61800)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61800

resubmitting because the [last one](https://github.com/pytorch/pytorch/pull/61433) was unrecoverable due to making changes incorrectly in the stack

Test Plan: Imported from OSS

Reviewed By: iramazanli

Differential Revision:

D29812492

Pulled By: makslevental

fbshipit-source-id:

c3dfeacd1e00a526e24fbaab02dad48069d690ef

Jane Xu [Fri, 27 Aug 2021 00:36:56 +0000 (17:36 -0700)]

Run through failures on trunk (#64063)

Summary:

This PR runs all the tests on trunk instead of stopping at the first failure.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64063

Reviewed By: malfet, seemethere

Differential Revision:

D30592020

Pulled By: janeyx99

fbshipit-source-id:

318b225cdf918a98f73e752d1cc0227d9227f36c

Paul Johnson [Fri, 27 Aug 2021 00:28:35 +0000 (17:28 -0700)]

[pytorch] add per_sample_weights support for embedding_bag_4bit_rowwise_offsets (#63605)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/63605

Reviewed By: houseroad

Differential Revision:

D30434664

fbshipit-source-id:

eb4cbae3c705f9dec5c073a56f0f23daee353bc1

Michael Dagitses [Fri, 27 Aug 2021 00:26:52 +0000 (17:26 -0700)]

document that `torch.triangular_solve` has optional out= parameter (#63253)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63253

Fixes #57955

Test Plan: Imported from OSS

Reviewed By: malfet

Differential Revision:

D30312134

Pulled By: dagitses

fbshipit-source-id:

4f484620f5754f4324a99bbac1ff783c64cee6b8

Jiewen Tan [Thu, 26 Aug 2021 23:49:13 +0000 (16:49 -0700)]

Enable test_api IMethodTest in OSS (#63345)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63345

This diff does the following to enable the tests:

1. Exposed IMethod as TORCH_API.

2. Linked torch_deploy to test_api if USE_DEPLOY == 1.

3. Generated torch::deploy examples when building torch_deploy library.

Test Plan: ./build/bin/test_api --gtest_filter=IMethodTest.*

Reviewed By: ngimel

Differential Revision:

D30346257

Pulled By: alanwaketan

fbshipit-source-id:

932ae7d45790dfb6e00c51893933a054a0fad86d

Don Jang [Thu, 26 Aug 2021 23:28:35 +0000 (16:28 -0700)]

[Static Runtime] Remove unnecessary fb::equally_split nodes (#64022)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/64022

Test Plan: - Added unittest `StaticRuntime.RemoveEquallySplitListUnpack`.

Reviewed By: hlu1

Differential Revision:

D30472189

fbshipit-source-id:

36040b0146f4be9d0d0fda293f7205f43aad0b87

Shijun Kong [Thu, 26 Aug 2021 23:06:17 +0000 (16:06 -0700)]

[pytorch][quant][oss] Support 2-bit embedding_bag op "embedding_bag_2bit_rowwise_offsets" (#63658)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63658

Support 2-bit embedding_bag op "embedding_bag_2bit_rowwise_offsets"

Reviewed By: jingsh, supriyar

Differential Revision:

D30454994

fbshipit-source-id:

7aa7bfe405c2ffff639d5658a35181036e162dc9

soulitzer [Thu, 26 Aug 2021 23:00:21 +0000 (16:00 -0700)]

Move variabletype functions around (#63330)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63330

- This is in preparation for templated/boxed autograd-not-implemented fallback

- Make sure VariableTypeUtils does not depend on generated code

- Lift `isFwGradDefined` into `autograd/functions/utils.cpp` so it's available to mobile builds

- Removes `using namespace at` from VariableTypeUtils. We previously needed it for the templated version; it is no longer strictly necessary, and removing it avoids name conflicts if this header is included elsewhere in the future.

Test Plan: Imported from OSS

Reviewed By: heitorschueroff

Differential Revision:

D30518573

Pulled By: soulitzer

fbshipit-source-id:

a0fb904baafc9713de609fffec4b813f6cfcc000

Bo Wang [Thu, 26 Aug 2021 23:00:16 +0000 (16:00 -0700)]

More sharded_tensor creation ops: sharded_tensor.zeros, sharded_tensor.full, sharded_tensor.rand (#63732)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/63732

Test Plan:

$ python test/distributed/_sharded_tensor/test_sharded_tensor.py --v

$ python test/distributed/_sharded_tensor/test_sharded_tensor.py TestCreateTensorFromParams --v

$ python test/distributed/_sharded_tensor/test_sharded_tensor.py TestShardedTensorChunked --v

Imported from OSS

Differential Revision:

D30472621


Reviewed By: pritamdamania87

Pulled By: bowangbj

fbshipit-source-id:

fd8ebf9b815fdc292ad1aad521f9f4f454163d0e

Jane Xu [Thu, 26 Aug 2021 22:42:00 +0000 (15:42 -0700)]

Add shard number to print_test_stats.py upload name (#64055)

Summary:

Now that the render test results job is gone, each shard on GHA is uploading a JSON test stats report. To ensure differentiation, this PR includes the shard number in the report name.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64055

Reviewed By: iramazanli

Differential Revision:

D30586869

Pulled By: janeyx99

fbshipit-source-id:

fd19f347131deec51486bb0795e4e13ac19bc71a

MengeTM [Thu, 26 Aug 2021 22:32:06 +0000 (15:32 -0700)]

Derivatives of relu (#63027) (#63089)

Summary:

Optimizes the relu and leaky_relu derivatives to reduce the GPU memory (VRAM) needed for the backward pass.

Fixes https://github.com/pytorch/pytorch/issues/63027
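One way to cut backward-pass memory for these ops is to compute the derivative from the op's output instead of its input, so only the output has to be kept. That this is the exact mechanism used here is an assumption, not something stated above. It works because, for relu, `out > 0` exactly when `x > 0`, and for leaky_relu with a non-negative slope the output has the same sign as the input. The pure-Python check below illustrates that equivalence; the function names are hypothetical.

```python
from typing import List


def leaky_relu(x: List[float], slope: float) -> List[float]:
    return [v if v > 0 else v * slope for v in x]  # slope=0.0 gives plain relu


def grad_from_input(grad: List[float], x: List[float], slope: float) -> List[float]:
    return [g if v > 0 else g * slope for g, v in zip(grad, x)]


def grad_from_output(grad: List[float], out: List[float], slope: float) -> List[float]:
    # Valid only for slope >= 0: then out > 0 exactly when x > 0, so the saved
    # output carries all the information the backward needs.
    return [g if o > 0 else g * slope for g, o in zip(grad, out)]


x = [-2.0, -0.5, 0.0, 0.5, 3.0]
grad = [1.0, 2.0, 3.0, 4.0, 5.0]
for slope in (0.0, 0.01):
    out = leaky_relu(x, slope)
    assert grad_from_input(grad, x, slope) == grad_from_output(grad, out, slope)
```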

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63089

Reviewed By: iramazanli

Differential Revision:

D30582049

Pulled By: albanD

fbshipit-source-id:

a9481fe8c10cbfe2db485e28ce80cabfef501eb8

Facebook Community Bot [Thu, 26 Aug 2021 22:18:37 +0000 (15:18 -0700)]

Automated submodule update: FBGEMM (#62879)

Summary:

This is an automated pull request to update the first-party submodule for [pytorch/FBGEMM](https://github.com/pytorch/FBGEMM).

New submodule commit: https://github.com/pytorch/FBGEMM/commit/ce5470385723b0262b47250d6af05f1b734e4509

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62879

Test Plan: Ensure that CI jobs succeed on GitHub before landing.

Reviewed By: jspark1105

Differential Revision:

D30154801

fbshipit-source-id:

b2ce185da6f6cadf5128f82b15097d9e13e9e6a0