Gregory Chanan [Sun, 21 Apr 2019 20:43:02 +0000 (13:43 -0700)]

Hook up non_differentiability in derivatives.yaml when no autograd function is generated. (#19520)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19520

ghimport-source-id:

a1272aa0b23692fb189974c4daba7b2e4e0dad50

Differential Revision:

D15021380

Pulled By: gchanan

fbshipit-source-id:

ec83efd4bb6d17714c060f13a0527a33a10452db

Gregory Chanan [Sun, 21 Apr 2019 18:03:09 +0000 (11:03 -0700)]

Move non_differentiable_arg_names from autograd functions to differentiability_info. (#19519)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19519

ghimport-source-id:

74e603688b2e4ed33f6c46c7da9d009336140e74

Differential Revision:

D15021378

Pulled By: gchanan

fbshipit-source-id:

e366a914c67a90ba0552b67d0bf5b347edbaf189

Tongzhou Wang [Sun, 21 Apr 2019 04:36:54 +0000 (21:36 -0700)]

Move cuFFT plan cache note outside Best Practices (#19538)

Summary:

I mistakenly put it there.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19538

Differential Revision:

D15026500

Pulled By: soumith

fbshipit-source-id:

0c13499571fdfd789c3bd1c4b58abd870725d422

Michael Suo [Sat, 20 Apr 2019 15:45:22 +0000 (08:45 -0700)]

Revert D14689639: [pytorch] Allow passing lists as trace inputs.

Differential Revision:

D14689639

Original commit changeset:

6dcec8a64319

fbshipit-source-id:

03a5e7c80e7f2420e33b056b5844a78d7fd41141

Gu, Jinghui [Sat, 20 Apr 2019 09:09:15 +0000 (02:09 -0700)]

Improve optimizations for DNNLOWP support on MKL-DNN (#18843)

Summary:

In this PR, the fusion algorithms are improved to support DNNLOWP.

1. Enabled conv fusions for DNNLOWP

2. Fused order switch op into following quantize op

3. Improved conv+sum fusion to parse a larger scope/window

4. Reorganized the fusion code to fix a random crash caused by the graph changing

Pull Request resolved: https://github.com/pytorch/pytorch/pull/18843

Differential Revision:

D15021030

Pulled By: yinghai

fbshipit-source-id:

88d2199d9fc69f392de9bfbe1f291e0ebf78ab08

Nishant Pandit [Sat, 20 Apr 2019 04:39:00 +0000 (21:39 -0700)]

Make Observer class as template Quant class for QuantConfig (#19418)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19418

This change makes the Observer class a template that always takes an observer function as an argument.

The second test case becomes redundant, so it is removed.

Reviewed By: jerryzh168

Differential Revision:

D15000594

fbshipit-source-id:

9555fe98a5f2054b8fd01e64e9ac2db72c043bfa

Sam Leeman-Munk [Sat, 20 Apr 2019 04:35:35 +0000 (21:35 -0700)]

Support compilation on gcc-7.4.0 (#19470)

Summary:

There are two corrections in this pull request.

The first is specific to gcc-7.4.0.

When invoked with -std=c++14, gcc-7.4.0 defines __cplusplus = 201402L.

This does not meet the check set in Deprecated.h, which asks for >201402L.

The compiler goes down to the __GNUC__ check, which passes and sets C10_DEPRECATED_MESSAGE to a value that c++14 does not appear to support or even recognize, leading to a compile time error.

My recommended solution, which worked for my case, was to change the > into a >=
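
The effect of the comparison operator can be sketched in Python. This models only the branch selection described above; the function name and the `inclusive` flag are hypothetical and do not reproduce the contents of Deprecated.h.

```python
# Value of __cplusplus reported for gcc-7.4.0 with -std=c++14, as stated above.
CPLUSPLUS_GCC_7_4_CXX14 = 201402


def passes_version_check(cplusplus: int, inclusive: bool) -> bool:
    """Return True if the language-version check passes.

    inclusive=False models a `> 201402L` test,
    inclusive=True models a `>= 201402L` test.
    """
    if inclusive:
        return cplusplus >= 201402
    return cplusplus > 201402


# Strict check: fails, so the compiler falls through to the __GNUC__ check.
print(passes_version_check(CPLUSPLUS_GCC_7_4_CXX14, inclusive=False))
# Inclusive check: passes, so the __GNUC__ branch is never reached.
print(passes_version_check(CPLUSPLUS_GCC_7_4_CXX14, inclusive=True))
```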

The second correction comes in response to this error:

caffe2/operators/crash_op.cc: In member function ‘virtual bool caffe2::CrashOp::RunOnDevice()’:

caffe2/operators/crash_op.cc:14:11: error: ‘SIGABRT’ was not declared in this scope

I am merely committing to the repository the solution suggested here (which worked for me)

https://discuss.pytorch.org/t/building-pytorch-from-source-in-conda-fails-in-pytorch-caffe2-operators-crash-op-cc/42859

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19470

Differential Revision:

D15019529

Pulled By: ailzhang

fbshipit-source-id:

9ce9d713c860ee5fd4266e5c2a7f336a97d7a90d

James Reed [Sat, 20 Apr 2019 02:13:10 +0000 (19:13 -0700)]

Improve embedding_bag add kernel (#19329)

Summary:

This was actually getting pretty poor throughput with respect to memory bandwidth. I used this test to measure the memory bandwidth specifically for the AXPY call: https://gist.github.com/jamesr66a/b27ff9ecbe036eed5ec310c0a3cc53c5

And I got ~8 GB/s before this change, but ~14 GB/s after this change.

This seems to speed up the operator overall by around 1.3x (benchmark: https://gist.github.com/jamesr66a/c533817c334d0be432720ef5e54a4166):

== Before ==

time_per_iter 0.0001298875093460083

GB/s 3.082544287868467

== After ==

time_per_iter 0.00010104801654815674

GB/s 3.9623142905451076

The large difference between the local BW increase and the full-op BW increase likely indicates significant time is being spent elsewhere in the op, so I will investigate that.

EDIT: I updated this PR to include a call into caffe2/perfkernels. This is the progression:

Before

time_per_iter 8.983819484710693e-05

GB/s 4.456723564864611

After no axpy

time_per_iter 7.19951868057251e-05

GB/s 5.56126065872172

After perfkernels

time_per_iter 5.6699180603027346e-05

GB/s 7.061548257694262

After perfkernels no grad

time_per_iter 4.388842582702637e-05

GB/s 9.122769670026413
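
For reference, the relative speedup of each stage can be computed from the timings listed in the progression above. This is only arithmetic on the reported numbers; the dictionary keys and the helper name are illustrative.

```python
# Seconds per iteration, copied from the progression above.
time_per_iter = {
    "before": 8.983819484710693e-05,
    "after no axpy": 7.19951868057251e-05,
    "after perfkernels": 5.6699180603027346e-05,
    "after perfkernels no grad": 4.388842582702637e-05,
}


def speedups(timings, baseline="before"):
    """Return each stage's speedup relative to the baseline timing."""
    base = timings[baseline]
    return {name: base / t for name, t in timings.items()}


for name, factor in speedups(time_per_iter).items():
    print(f"{name}: {factor:.2f}x")
```

On these numbers the final stage is roughly twice as fast as the starting point.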

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19329

Reviewed By: dzhulgakov

Differential Revision:

D14969630

Pulled By: jamesr66a

fbshipit-source-id:

42d1015772c87bedd119e33c0aa2c8105160a738

Pieter Noordhuis [Sat, 20 Apr 2019 00:20:37 +0000 (17:20 -0700)]

Make finding unused model parameters optional (#19515)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19515

This is still done by default, but can now be disabled by specifying

`find_unused_parameters=False`. There are use cases where finding

unused parameters results in erroneous behavior, because a subset of

model parameters is used *outside* the `forward` function. One can

argue that doing this is not a good idea, but we should not break

existing use cases without an escape hatch. This configuration

parameter is that escape hatch.

Reviewed By: bddppq

Differential Revision:

D15016381

fbshipit-source-id:

f2f86b60771b3801ab52776e62b5fd6748ddeed0

Sebastian Messmer [Fri, 19 Apr 2019 23:59:50 +0000 (16:59 -0700)]

Disallow std::vector arguments (#19511)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19511

In the c10 operator registration API, disallow std::vector arguments and show a nice error message

pointing users towards using ArrayRef instead.

Reviewed By: ezyang

Differential Revision:

D15017423

fbshipit-source-id:

157ecc1298bbc598d2e310a16041edf195aaeff5

Sebastian Messmer [Fri, 19 Apr 2019 23:59:50 +0000 (16:59 -0700)]

Drop instead of pop (#19503)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19503

After reading the arguments from the stack, the c10 kernel wrapper accidentally popped them again, causing a vector to be allocated.

Instead, it should just drop them because they have already been read.
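
The difference between the two operations can be sketched with a plain Python list as the stack. The function names mirror the wording above, but this is an illustration, not the c10 implementation.

```python
from typing import Any, List


def pop(stack: List[Any], n: int) -> List[Any]:
    """Remove the last n entries and return them in a newly allocated list."""
    popped = stack[len(stack) - n:]
    del stack[len(stack) - n:]
    return popped


def drop(stack: List[Any], n: int) -> None:
    """Remove the last n entries without building a list to return them."""
    del stack[len(stack) - n:]


def add_kernel_wrapper(stack: List[Any]) -> None:
    """Hypothetical wrapper for a two-argument 'add' kernel."""
    # The arguments are read directly from the top of the stack...
    a, b = stack[-2], stack[-1]
    # ...so they only need to be discarded, not handed back a second time.
    drop(stack, 2)
    stack.append(a + b)


stack = [10, 1, 2]
add_kernel_wrapper(stack)
print(stack)
```

Both helpers leave the stack in the same state; `drop` simply skips the allocation of the returned container.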

Reviewed By: ezyang

Differential Revision:

D15016023

fbshipit-source-id:

b694a2929f97fa77cebe247ec2e49820a3c818d5

Mikhail Zolotukhin [Fri, 19 Apr 2019 23:29:02 +0000 (16:29 -0700)]

Add minimalistic implementation of subgraph matcher. (#19322)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19322

ghimport-source-id:

93c713f829d1b2a9aa5d104cb1f30148dd37c967

Differential Revision:

D14962182

Pulled By: ZolotukhinM

fbshipit-source-id:

3989fba06502011bed9c24f12648d0baa2a4480c

Mingzhe Li [Fri, 19 Apr 2019 23:22:13 +0000 (16:22 -0700)]

Fix op benchmarks error in OSS environment (#19518)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19518

The previous design required running the op benchmarks from the PyTorch root directory, which could lead to a `module not found` error in the OSS environment. This diff fixes the issue by launching the benchmarks from the `benchmarks` folder.

Reviewed By: ilia-cher

Differential Revision:

D15020787

fbshipit-source-id:

eb09814a33432a66cc857702bc86538cd17bea3b

Mingzhe Li [Fri, 19 Apr 2019 23:22:12 +0000 (16:22 -0700)]

fix AI-PEP path error (#19514)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19514

as title

Reviewed By: hl475

Differential Revision:

D15018499

fbshipit-source-id:

9ce38e3a577432e0575a6743f5dcd2e907d3ab9d

eellison [Fri, 19 Apr 2019 23:04:01 +0000 (16:04 -0700)]

First step at container aliasing (#18710)

Summary:

First step at allowing container types within alias analysis.

Since the current implementation hides the concept of Wildcards within alias analysis and does not expose it to memory dag, we cannot represent whether a container type holds a wildcard. As a result, only handle TupleConstruct, where we can directly inspect if any input values are wildcards, and don't handle nested containers.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/18710

Differential Revision:

D15017068

Pulled By: eellison

fbshipit-source-id:

3ee76a5482cef1cc4a10f034593ca21019161c18

Xiaomeng Yang [Fri, 19 Apr 2019 22:14:50 +0000 (15:14 -0700)]

Fix relu bug for empty tensor (#19451)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19451

Fix relu bug for empty tensor

Reviewed By: xianjiec

Differential Revision:

D15009811

fbshipit-source-id:

b75e567c3bec08d7d12b950d8f1380c50c138704

Eric Faust [Fri, 19 Apr 2019 20:28:42 +0000 (13:28 -0700)]

Allow passing lists as trace inputs.

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/18636

Differential Revision:

D14689639

fbshipit-source-id:

6dcec8a64319ae3c4da9a93f574a13ce8ec223a5

Michael Suo [Fri, 19 Apr 2019 19:48:39 +0000 (12:48 -0700)]

Allow for segmented printing in PythonPrint (#19238)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19238

ghimport-source-id:

469d33cd187fa68840b201d625800a0f4fead547

Differential Revision:

D14928291

Reviewed By: zdevito

Pulled By: suo

fbshipit-source-id:

257fce3dd1601ba192092d3fc318374e3752907e

Michael Suo [Fri, 19 Apr 2019 19:48:39 +0000 (12:48 -0700)]

add resolveType to Resolver (#19237)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19237

ghimport-source-id:

70777ec37155be37efef1b743d564752e4dff9de

Differential Revision:

D14928289

Reviewed By: zdevito

Pulled By: suo

fbshipit-source-id:

46827da9ace16730669fc654bf781d83172d18b1

Michael Suo [Fri, 19 Apr 2019 19:48:39 +0000 (12:48 -0700)]

Turn resolver into a class (#19236)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19236

ghimport-source-id:

d36705ea5ecff085d0d84ea57bb96d18d7c260dd

Differential Revision:

D14928292

Reviewed By: zdevito

Pulled By: suo

fbshipit-source-id:

cd038100ac423fa1c19d0547b9e5487a633a2258

davidriazati [Fri, 19 Apr 2019 19:38:23 +0000 (12:38 -0700)]

Fix bad annotation in docs (#19501)

Summary:

Stack from [ghstack](https://github.com/ezyang/ghstack):

* **#19501 [jit] Fix bad annotation in docs**

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19501

Pulled By: driazati

Differential Revision:

D15016062

fbshipit-source-id:

3dcd0481eb48b84e98ffe8c5df2cbc9c2abf99f9

Yinghai Lu [Fri, 19 Apr 2019 19:15:59 +0000 (12:15 -0700)]

Fix out-of-topological-order issue in Nomnigraph (#19458)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19458

The algorithm in https://fburl.com/ggh9iyvc fails to really ensure topological ordering of nodes. The fix is ugly but effective. I think we need a real topological sort to fix this issue more nicely. Mikhail Zolotukhin, Bram Wasti.
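
For context, a "real topological sort" could look like the following sketch of Kahn's algorithm. It is a generic illustration on a dictionary-based graph, not the Nomnigraph code or the fix in this diff; the node names are made up.

```python
from collections import deque
from typing import Dict, Hashable, List


def topological_sort(edges: Dict[Hashable, List[Hashable]]) -> List[Hashable]:
    """Kahn's algorithm. `edges[u]` lists the nodes that consume u's output."""
    in_degree = {node: 0 for node in edges}
    for successors in edges.values():
        for node in successors:
            in_degree[node] = in_degree.get(node, 0) + 1

    # Start from nodes with no unprocessed producers.
    ready = deque(node for node, degree in in_degree.items() if degree == 0)
    order = []
    while ready:
        node = ready.popleft()
        order.append(node)
        for successor in edges.get(node, []):
            in_degree[successor] -= 1
            if in_degree[successor] == 0:
                ready.append(successor)

    if len(order) != len(in_degree):
        raise ValueError("graph contains a cycle")
    return order


graph = {"conv": ["relu"], "relu": ["sum"], "bias": ["sum"], "sum": []}
order = topological_sort(graph)
print(order)
```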

Differential Revision:

D15011893

fbshipit-source-id:

130c3aa442f5d578adfb14fbe5f16aa722434942

Roy Li [Fri, 19 Apr 2019 18:55:46 +0000 (11:55 -0700)]

Remove uses of TypeID (#19452)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19452

ghimport-source-id:

816ae7fe1a18d76f064d5796dec44dca6a138a21

Differential Revision:

D15009920

Pulled By: li-roy

fbshipit-source-id:

722f05a927528148555561da62839f84dba645c6

Jerry Zhang [Fri, 19 Apr 2019 18:53:46 +0000 (11:53 -0700)]

Expose QScheme in frontend (#19381)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19381

Expose QScheme enum in frontend so that people can use it in

quantization configs in modules.

Differential Revision:

D14922992

fbshipit-source-id:

ab07b8a7ec42c1c1f5fe84a4a0c805adbcad408d

Gregory Chanan [Fri, 19 Apr 2019 18:23:53 +0000 (11:23 -0700)]

Revert D15003385: Have embedding_dense_backward match JIT signature.

Differential Revision:

D15003385

Original commit changeset:

53cbe18aa454

fbshipit-source-id:

be904ee2212aa9e402715c436a84d95f6cde326f

Gregory Chanan [Fri, 19 Apr 2019 18:23:53 +0000 (11:23 -0700)]

Revert D15003379: Have _embedding_bag_dense_backward match JIT signature.

Differential Revision:

D15003379

Original commit changeset:

f8e82800171f

fbshipit-source-id:

55f83557998d166aeb41d00d7a590acdc76fcf22

Gregory Chanan [Fri, 19 Apr 2019 18:23:52 +0000 (11:23 -0700)]

Revert D15003387: Remove 'BoolTensor', 'IndexTensor' from frontend specifications.

Differential Revision:

D15003387

Original commit changeset:

e518e8ce3228

fbshipit-source-id:

af5b107239446ea8d6f229a427d5b157fcafd224

Gregory Chanan [Fri, 19 Apr 2019 18:23:52 +0000 (11:23 -0700)]

Revert D15003382: Make one_hot non-differentiable.

Differential Revision:

D15003382

Original commit changeset:

e9244c7a5f0a

fbshipit-source-id:

84789cf4c46c77cce655e70c2a8ff425f32f48bd

Jerry Zhang [Fri, 19 Apr 2019 18:06:02 +0000 (11:06 -0700)]

Make empty_affine_quantized private (#19446)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19446

change empty_affine_quantized to _empty_affine_quantized

Reviewed By: dzhulgakov

Differential Revision:

D15008757

fbshipit-source-id:

c7699ac0c208a8f17d88e95193970c75ba7219d3

Gregory Chanan [Fri, 19 Apr 2019 17:57:46 +0000 (10:57 -0700)]

Make one_hot non-differentiable. (#19430)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19430

ghimport-source-id:

6787473873fdc21400138a4322e17fee8db62607

Differential Revision:

D15003382

Pulled By: gchanan

fbshipit-source-id:

e9244c7a5f0ad7cd2f79635944a8b37f910231c9

Gregory Chanan [Fri, 19 Apr 2019 17:57:03 +0000 (10:57 -0700)]

Remove 'BoolTensor', 'IndexTensor' from frontend specifications. (#19429)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19429

ghimport-source-id:

6116682b84210a34babb8b87a92e7050433e5d59

Differential Revision:

D15003387

Pulled By: gchanan

fbshipit-source-id:

e518e8ce322810e06175bb4e6672d4ea1eb18efd

Gregory Chanan [Fri, 19 Apr 2019 17:56:00 +0000 (10:56 -0700)]

Have embedding_dense_backward match JIT signature. (#19427)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19427

ghimport-source-id:

93438cd495129a1e41118c62e6339909783035fd

Differential Revision:

D15003385

Pulled By: gchanan

fbshipit-source-id:

53cbe18aa4541a2501f496abfee526e40093c0ff

Gregory Chanan [Fri, 19 Apr 2019 17:53:33 +0000 (10:53 -0700)]

Have _embedding_bag_dense_backward match JIT signature. (#19428)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19428

ghimport-source-id:

037efa3df95efc1fbff631826351d1698a3c49ec

Differential Revision:

D15003379

Pulled By: gchanan

fbshipit-source-id:

f8e82800171f632e28535e416283d858156068ec

Gregory Chanan [Fri, 19 Apr 2019 17:53:13 +0000 (10:53 -0700)]

Stop generating autograd functions for derivatives.yaml entries that only specify output differentiability. (#19424)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19424

ghimport-source-id:

e9d1b86742607f5cbe39fb278fa7f378739cd6ef

Differential Revision:

D15003380

Pulled By: gchanan

fbshipit-source-id:

8efb94fbc0b843863021bf25deab57c492086237

David Riazati [Fri, 19 Apr 2019 17:20:43 +0000 (10:20 -0700)]

Fix ord() when dealing with utf8 chars (#19423)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19423

ghimport-source-id:

e7449489fbc86ec1116f94027b3c1561942413ee

Reviewed By: eellison

Differential Revision:

D15002847

Pulled By: driazati

fbshipit-source-id:

4560cebcfca695447423d48d65ed364e7dbdbedb

barrh [Fri, 19 Apr 2019 17:12:46 +0000 (10:12 -0700)]

Fix copied optimizer (#19308)

Summary:

Add the defaults field to the copied object.

Prior to this patch, optimizer.__getattr__ excluded the defaults attribute of the source optimizer object, which some LR schedulers require (e.g. CyclicLR with momentum).

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19308

Differential Revision:

D15012801

Pulled By: soumith

fbshipit-source-id:

95801b269f6f9d78d531d4fed95c973b280cc96f

MilesCranmer [Fri, 19 Apr 2019 17:08:50 +0000 (10:08 -0700)]

Add an identity module (#19249)

Summary:

This is a simple yet useful addition to the torch.nn modules: an identity module. This is a first draft - please let me know what you think and I will edit my PR.

There is no identity module. nn.Sequential() can be used, but it is argument-sensitive, so it can't be used interchangeably with any other module. This adds nn.Identity(...), which can be swapped with any module because it accepts dummy arguments. It's also more understandable than seeing an empty Sequential inside a model.

See discussion on #9160. The current solution is to use nn.Sequential(). However this won't work as follows:

```python
batch_norm = nn.BatchNorm2d
if dont_use_batch_norm:
    batch_norm = Identity
```

Then in your network, you have:

```python
nn.Sequential(
    ...
    batch_norm(N, momentum=0.05),
    ...
)
```

If you try to simply set `Identity = nn.Sequential`, this will fail since `nn.Sequential` expects modules as arguments. Of course there are many ways to get around this, including:

- Conditionally adding modules to an existing Sequential module

- Not using Sequential but writing the usual `forward` function with an if statement

- ...

**However, I think that an identity module is the most pythonic strategy,** assuming you want to use nn.Sequential.

Using the very simple class (this isn't the same as the one in my commit):

```python
class Identity(nn.Module):
    def __init__(self, *args, **kwargs):
        super().__init__()

    def forward(self, x):
        return x
```

we can get around using nn.Sequential, and `batch_norm(N, momentum=0.05)` will work. There are of course other situations this would be useful.

Thank you.

Best,

Miles

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19249

Differential Revision:

D15012969

Pulled By: ezyang

fbshipit-source-id:

9f47e252137a1679e306fd4c169dca832eb82c0c

Junjie Bai [Fri, 19 Apr 2019 16:47:52 +0000 (09:47 -0700)]

Remove no-fork workaround for running tests with ROCm (#19436)

Summary:

This should have been fixed in newest ROCm version.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19436

Reviewed By: ezyang

Differential Revision:

D15004685

Pulled By: bddppq

fbshipit-source-id:

19fd4cca94c914dc54aabfbb4e62b328aa348a35

Edward Yang [Fri, 19 Apr 2019 15:11:01 +0000 (08:11 -0700)]

Delete defunct test/ffi directory. (#19168)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19168

ghimport-source-id:

5190a8d00c529735e99e8745c5e7cf1901fdb800

Differential Revision:

D14938318

Pulled By: ezyang

fbshipit-source-id:

eaeb6814178c434f737b99ae1fce63fd9ecdb432

Bharat123rox [Fri, 19 Apr 2019 14:56:58 +0000 (07:56 -0700)]

Fix missing doc out= for torch.cumprod (#19340)

Summary:

Fix #19255 by adding the `out=None` argument for `torch.cumprod`, which was missing [here](https://pytorch.org/docs/master/torch.html#torch.cumprod). Also add the docstring for `out` in `torch.cumsum`, which was missing [here](https://pytorch.org/docs/master/torch.html#torch.cumsum).

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19340

Differential Revision:

D14973931

Pulled By: ezyang

fbshipit-source-id:

232f5c9a606b749d67d068afad151539866fedda

Clément Pinard [Fri, 19 Apr 2019 14:17:09 +0000 (07:17 -0700)]

Mention packed accessors in tensor basics doc (#19464)

Summary:

This is a continuation of efforts into packed accessor awareness.

A very simple example is added, along with the mention that the template can hold more arguments.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19464

Differential Revision:

D15012564

Pulled By: soumith

fbshipit-source-id:

a19ed536e016fae519b062d847cc58aef01b1b92

Gregory Chanan [Fri, 19 Apr 2019 13:58:41 +0000 (06:58 -0700)]

Rename 'not_differentiable' to 'non_differentiable'. (#19272)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19272

ghimport-source-id:

755e91efa68c5a1c4377a6853f21b3eee3f8cab5

Differential Revision:

D15003381

Pulled By: gchanan

fbshipit-source-id:

54db27c5c5e65acf65821543db3217de9dd9bdb5

Lu Fang [Fri, 19 Apr 2019 06:56:32 +0000 (23:56 -0700)]

Clean the onnx constant fold code a bit (#19398)

Summary:

This is a follow up PR of https://github.com/pytorch/pytorch/pull/18698 to lint the code using clang-format.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19398

Differential Revision:

D14994517

Pulled By: houseroad

fbshipit-source-id:

2ae9f93e66ce66892a1edc9543ea03932cd82bee

Eric Faust [Fri, 19 Apr 2019 06:48:59 +0000 (23:48 -0700)]

Allow passing dicts as trace inputs. (#18092)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/18092

Previously, tracing required all inputs to be either tensors

or tuples of tensors. Now, we allow users to pass dicts as well.

Differential Revision:

D14491795

fbshipit-source-id:

7a2df218e5d00f898d01fa5b9669f9d674280be3

Lu Fang [Fri, 19 Apr 2019 06:31:32 +0000 (23:31 -0700)]

skip test_trace_c10_ops if _caffe2 is not built (#19099)

Summary:

fix https://github.com/pytorch/pytorch/issues/18142

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19099

Differential Revision:

D15010452

Pulled By: houseroad

fbshipit-source-id:

5bf158d7fce7bfde109d364a3a9c85b83761fffb

Gemfield [Fri, 19 Apr 2019 05:34:52 +0000 (22:34 -0700)]

remove needless ## in REGISTER_ALLOCATOR definition. (#19261)

Summary:

remove needless ## in REGISTER_ALLOCATOR definition.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19261

Differential Revision:

D15002025

Pulled By: soumith

fbshipit-source-id:

40614b1d79d1fe05ccf43f0ae5aab950e4c875c2

Lara Haidar-Ahmad [Fri, 19 Apr 2019 05:25:04 +0000 (22:25 -0700)]

Strip doc_string from exported ONNX models (#18882)

Summary:

Strip the doc_string by default from the exported ONNX models (this string has the stack trace and information about the local repos and folders, which can be confidential).

The users can still generate the doc_string by specifying add_doc_string=True in torch.onnx.export().

Pull Request resolved: https://github.com/pytorch/pytorch/pull/18882

Differential Revision:

D14889684

Pulled By: houseroad

fbshipit-source-id:

26d2c23c8dc3f484544aa854b507ada429adb9b8

Natalia Gimelshein [Fri, 19 Apr 2019 05:20:44 +0000 (22:20 -0700)]

improve dim sort performance (#19379)

Summary:

We are already using custom comparators for sorting (for a good reason), but are still making 2 sorting passes: a global sort, then a stable sort to bring values into their slices. Using a custom comparator to sort within a slice lets us avoid the second sorting pass and brings up to a 50% perf improvement.
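
The idea can be illustrated on the CPU with Python's built-in sort. This is a sketch of the comparator trick only, assuming each value carries the index of the slice it belongs to; it is not the THC kernel, and the function names are made up.

```python
from typing import List, Tuple


def two_pass_sort(values: List[float], slice_ids: List[int]) -> List[Tuple[int, float]]:
    """Global sort by value, then a stable sort that regroups values by slice."""
    pairs = sorted(zip(slice_ids, values), key=lambda p: p[1])
    return sorted(pairs, key=lambda p: p[0])  # Python's sort is stable


def one_pass_sort(values: List[float], slice_ids: List[int]) -> List[Tuple[int, float]]:
    """Single sort whose key compares the slice id first, then the value."""
    return sorted(zip(slice_ids, values), key=lambda p: (p[0], p[1]))


values = [3.0, 9.0, 1.0, 7.0, 2.0, 8.0]
slice_ids = [0, 1, 0, 1, 0, 1]
print(one_pass_sort(values, slice_ids))
```

Both functions produce the same ordering; the single-pass version folds the slice grouping into the comparison itself.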

t-vi I know you are moving sort to ATen, and changing THC is discouraged, but #18350 seems dormant. I'm fine with #18350 landing first, and then I can put in these changes.

cc umanwizard for review.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19379

Differential Revision:

D15011019

Pulled By: soumith

fbshipit-source-id:

48e5f5aef51789b166bb72c75b393707a9aed57c

SsnL [Fri, 19 Apr 2019 05:16:05 +0000 (22:16 -0700)]

Fix missing import sys in pin_memory.py (#19419)

Summary:

kostmo pointed this out in #15331. Thanks :)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19419

Differential Revision:

D15002846

Pulled By: soumith

fbshipit-source-id:

c600fab3f7a7a5147994b9363910af4565c7ee65

Ran [Fri, 19 Apr 2019 05:10:34 +0000 (22:10 -0700)]

update documentation of PairwiseDistance#19241 (#19412)

Summary:

Fix the documentation of PairwiseDistance [#19241](https://github.com/pytorch/pytorch/issues/19241)

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19412

Differential Revision:

D14998271

Pulled By: soumith

fbshipit-source-id:

bcb2aa46d3b3102c4480f2d24072a5e14b049888

Soumith Chintala [Fri, 19 Apr 2019 05:10:30 +0000 (22:10 -0700)]

fixes link in TripletMarginLoss (#19417)

Summary:

Fixes https://github.com/pytorch/pytorch/issues/19245

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19417

Differential Revision:

D15001610

Pulled By: soumith

fbshipit-source-id:

1b85ebe196eb5a3af5eb83d914dafa83b9b35b31

Mingzhe Li [Fri, 19 Apr 2019 02:56:50 +0000 (19:56 -0700)]

make separate operators as independent binaries (#19450)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19450

We want to build each operator benchmark as a separate binary. Previously, all operators were collected into a single binary, which is unnecessary when we only want to run a specific operator. This diff aims to resolve that issue.

Reviewed By: ilia-cher

Differential Revision:

D14808159

fbshipit-source-id:

43cd25b219c6e358d0cd2a61463b34596bf3bfac

svcscm [Fri, 19 Apr 2019 01:26:20 +0000 (18:26 -0700)]

Updating submodules

Reviewed By: cdelahousse

fbshipit-source-id:

a727513842c0a240b377bda4e313fbedbc54c2e8

Xiang Gao [Fri, 19 Apr 2019 01:24:50 +0000 (18:24 -0700)]

Step 4: add support for unique with dim=None (#18651)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/18651

ghimport-source-id:

e11988130a3f9a73529de0b0d08b4ec25fbc639c

Differential Revision:

D15000463

Pulled By: VitalyFedyunin

fbshipit-source-id:

9e258e473dea6a3fc2307da2119b887ba3f7934a

Michael Suo [Fri, 19 Apr 2019 01:06:21 +0000 (18:06 -0700)]

allow bools to be used as attributes (#19440)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19440

ghimport-source-id:

9c962054d760526bf7da324b114455fcb1038521

Differential Revision:

D15005723

Pulled By: suo

fbshipit-source-id:

75fc87ae33894fc34d3b913881defb7e6b8d7af0

David Riazati [Fri, 19 Apr 2019 01:02:14 +0000 (18:02 -0700)]

Fix test build (#19444)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19444

ghimport-source-id:

c85db00e8037e7f6f0424eb8bd17f957d20b7247

Reviewed By: eellison

Differential Revision:

D15008679

Pulled By: driazati

fbshipit-source-id:

0987035116d9d0069794d96395c8ad458ba7c121

Thomas Viehmann [Fri, 19 Apr 2019 00:52:33 +0000 (17:52 -0700)]

pow scalar exponent / base autodiff, fusion (#19324)

Summary:

Fixes: #19253

Fixing pow(Tensor, float) is straightforward.

The breakage for pow(float, Tensor) is a bit more subtle to trigger, and fixing needs `torch.log` (`math.log` didn't work) from the newly merged #19115 (Thanks ngimel for pointing out this has landed.)
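
The two gradients involved can be written out for plain floats. This is a sketch of the underlying calculus, not the autodiff formulas added in the PR; the logarithm in the second gradient is presumably why a tensor-capable log is needed there. Function names are illustrative.

```python
import math


def grad_pow_tensor_scalar(x: float, exponent: float) -> float:
    """d/dx of x**exponent is exponent * x**(exponent - 1)."""
    return exponent * x ** (exponent - 1)


def grad_pow_scalar_tensor(base: float, x: float) -> float:
    """d/dx of base**x is base**x * log(base)."""
    return base ** x * math.log(base)


def finite_difference(f, x: float, eps: float = 1e-6) -> float:
    """Central-difference estimate of f'(x), used as a sanity check."""
    return (f(x + eps) - f(x - eps)) / (2 * eps)


print(grad_pow_tensor_scalar(2.0, 3.0), finite_difference(lambda x: x ** 3.0, 2.0))
print(grad_pow_scalar_tensor(2.0, 3.0), finite_difference(lambda x: 2.0 ** x, 3.0))
```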

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19324

Differential Revision:

D15003531

Pulled By: ailzhang

fbshipit-source-id:

8b22138fa27a43806b82886fb3a7b557bbb5a865

Gao, Xiang [Fri, 19 Apr 2019 00:46:43 +0000 (17:46 -0700)]

Improve unique CPU performance for returning counts (#19352)

Summary:

Benchmark on a tensor of shape `torch.Size([15320, 2])`. Benchmark code:

```python
print(torch.__version__)
print()
a = tensor.flatten()
print('cpu, sorted=False:')
%timeit torch._unique2_temporary_will_remove_soon(a, sorted=False)
%timeit torch._unique2_temporary_will_remove_soon(a, sorted=False, return_inverse=True)
%timeit torch._unique2_temporary_will_remove_soon(a, sorted=False, return_counts=True)
%timeit torch._unique2_temporary_will_remove_soon(a, sorted=False, return_inverse=True, return_counts=True)
print()
print('cpu, sorted=True:')
%timeit torch._unique2_temporary_will_remove_soon(a)
%timeit torch._unique2_temporary_will_remove_soon(a, return_inverse=True)
%timeit torch._unique2_temporary_will_remove_soon(a, return_counts=True)
%timeit torch._unique2_temporary_will_remove_soon(a, return_inverse=True, return_counts=True)
print()
```

Before

```
1.1.0a0+36854fe

cpu, sorted=False:
340 µs ± 4.05 µs per loop (mean ± std. dev. of 7 runs, 1000 loops each)
724 µs ± 6.28 µs per loop (mean ± std. dev. of 7 runs, 1000 loops each)
54.3 ms ± 469 µs per loop (mean ± std. dev. of 7 runs, 10 loops each)
54.6 ms ± 659 µs per loop (mean ± std. dev. of 7 runs, 10 loops each)

cpu, sorted=True:
341 µs ± 7.21 µs per loop (mean ± std. dev. of 7 runs, 1000 loops each)
727 µs ± 7.05 µs per loop (mean ± std. dev. of 7 runs, 1000 loops each)
54.7 ms ± 795 µs per loop (mean ± std. dev. of 7 runs, 10 loops each)
54.3 ms ± 647 µs per loop (mean ± std. dev. of 7 runs, 10 loops each)
```

After

```
1.1.0a0+261d9e8

cpu, sorted=False:
350 µs ± 865 ns per loop (mean ± std. dev. of 7 runs, 1000 loops each)
771 µs ± 598 ns per loop (mean ± std. dev. of 7 runs, 1000 loops each)
1.09 ms ± 6.86 µs per loop (mean ± std. dev. of 7 runs, 1000 loops each)
1.09 ms ± 4.74 µs per loop (mean ± std. dev. of 7 runs, 1000 loops each)

cpu, sorted=True:
324 µs ± 4.99 µs per loop (mean ± std. dev. of 7 runs, 1000 loops each)
705 µs ± 3.18 µs per loop (mean ± std. dev. of 7 runs, 1000 loops each)
1.09 ms ± 5.22 µs per loop (mean ± std. dev. of 7 runs, 1000 loops each)
1.09 ms ± 5.63 µs per loop (mean ± std. dev. of 7 runs, 1000 loops each)
```
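
To put the numbers above in perspective, the speedup for the `return_counts=True` rows can be computed directly from the reported means. This is plain arithmetic on the listed timings; the dictionary keys are illustrative labels.

```python
# Mean timings in milliseconds for the return_counts=True rows listed above.
before_ms = {"sorted=False": 54.3, "sorted=True": 54.7}
after_ms = {"sorted=False": 1.09, "sorted=True": 1.09}

# Ratio of old to new time for each configuration.
speedup = {key: before_ms[key] / after_ms[key] for key in before_ms}

for key, factor in speedup.items():
    print(f"{key}, return_counts=True: {factor:.1f}x faster")
```

The rows without `return_counts` stay within a few percent of their previous timings, so the gain is specific to the counts path.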

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19352

Differential Revision:

D14984717

Pulled By: VitalyFedyunin

fbshipit-source-id:

3c56f85705ab13a92ec7406f4f30be77226a3210

Pieter Noordhuis [Fri, 19 Apr 2019 00:44:37 +0000 (17:44 -0700)]

Revert D14909203: Remove usages of TypeID

Differential Revision:

D14909203

Original commit changeset:

d716179c484a

fbshipit-source-id:

992ff1fcd6d35d3f2ae768c7e164b7a0ba871914

Sebastian Messmer [Fri, 19 Apr 2019 00:16:58 +0000 (17:16 -0700)]

Add tests for argument types (#19290)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19290

Add test cases for the supported argument types

And TODOs for some unsupported ones that we might want to support.

Reviewed By: dzhulgakov

Differential Revision:

D14931920

fbshipit-source-id:

c47bbb295a54ac9dc62569bf5c273368c834392c

David Riazati [Fri, 19 Apr 2019 00:06:09 +0000 (17:06 -0700)]

Allow optionals arguments from C++ (#19311)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19311

ghimport-source-id:

699f62eb2bbad53ff2045fb2e217eb1402f2cdc5

Reviewed By: eellison

Differential Revision:

D14983059

Pulled By: driazati

fbshipit-source-id:

442f96d6bd2a8ce67807ccad2594b39aae489ca5

Mingzhe Li [Fri, 19 Apr 2019 00:03:56 +0000 (17:03 -0700)]

Enhance front-end to add op (#19433)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19433

For operator benchmark project, we need to cover a lot of operators, so the interface for adding operators needs to be very clean and simple. This diff is implementing a new interface to add op.

Here is the logic to add new operator to the benchmark:

```
long_config = {}
short_config = {}
map_func

add_test(
    [long_config, short_config],
    map_func,
    [caffe2 op]
    [pt op]
)
```

Reviewed By: zheng-xq

Differential Revision:

D14791191

fbshipit-source-id:

ac6738507cf1b9d6013dc8e546a2022a9b177f05

Dmytro Dzhulgakov [Thu, 18 Apr 2019 23:34:20 +0000 (16:34 -0700)]

Fix cpp_custom_type_hack variable handling (#19400)

Summary:

My bad - it might be called in variable and non-variable context. So it's better to just inherit variable-ness from the caller.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19400

Reviewed By: ezyang

Differential Revision:

D14994781

Pulled By: dzhulgakov

fbshipit-source-id:

cb9d055b44a2e1d7bbf2e937d558e6bc75037f5b

Ailing Zhang [Thu, 18 Apr 2019 22:58:45 +0000 (15:58 -0700)]

fix hub doc formatting issues (#19434)

Summary:

minor fixes for doc

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19434

Differential Revision:

D15003903

Pulled By: ailzhang

fbshipit-source-id:

400768d9a5ee24f9183faeec9762b688c48c531b

Pieter Noordhuis [Thu, 18 Apr 2019 21:51:37 +0000 (14:51 -0700)]

Recursively find tensors in DDP module output (#19360)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19360

We'll return the output object verbatim since it is a freeform object.

We need to find any tensors in this object, though, because we need to

figure out which parameters were used during this forward pass, to

ensure we short circuit reduction for any unused parameters.

Before this commit only lists were handled and the functionality went

untested. This commit adds support for dicts and recursive structures,

and also adds a test case.
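
The recursive search described above can be sketched roughly as follows. This is only an illustration, not the DDP implementation: `FakeTensor` and `find_tensors` are hypothetical names, and `FakeTensor` stands in for `torch.Tensor` so the snippet runs without PyTorch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FakeTensor:
    """Hypothetical stand-in for torch.Tensor so the sketch runs without PyTorch."""
    name: str


def find_tensors(obj, leaf_type=FakeTensor):
    """Collect every leaf_type instance reachable through nested lists, tuples, and dicts."""
    if isinstance(obj, leaf_type):
        return [obj]
    if isinstance(obj, (list, tuple)):
        found = []
        for item in obj:
            found.extend(find_tensors(item, leaf_type))
        return found
    if isinstance(obj, dict):
        found = []
        for value in obj.values():
            found.extend(find_tensors(value, leaf_type))
        return found
    # Any other object is treated as opaque and contributes no tensors.
    return []


# A freeform module output mixing dicts, lists, tuples, and non-tensor values.
output = {"logits": FakeTensor("a"), "aux": [FakeTensor("b"), {"deep": (FakeTensor("c"), 3.0)}]}
names = [t.name for t in find_tensors(output)]
```

The output object itself is left untouched; only the collected leaves would be used to work out which parameters took part in the forward pass.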

Closes #19354.

Reviewed By: mrshenli

Differential Revision:

D14978016

fbshipit-source-id:

4bb6999520871fb6a9e4561608afa64d55f4f3a8

Sebastian Messmer [Thu, 18 Apr 2019 21:07:30 +0000 (14:07 -0700)]

Moving at::Tensor into caffe2::Tensor without bumping refcount (#19388)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19388

The old implementation forced a refcount bump when converting at::Tensor to caffe2::Tensor.

Now, it is possible to move it without a refcount bump.

Reviewed By: dzhulgakov

Differential Revision:

D14986815

fbshipit-source-id:

92b4b0a6f323ed38376ffad75f960cad250ecd9b

Ailing Zhang [Thu, 18 Apr 2019 19:07:17 +0000 (12:07 -0700)]

Fix pickling torch.float32 (#18045)

Summary:

Attempted fix for #14057. This PR fixes the example script in the issue.

The old behavior is a bit confusing. When pickling, Python 2 failed to recognize that `torch.float32` lives in module `torch`, so it looked for `torch.float32` in module `__main__`. Python 3 is smart enough to handle it.

According to the doc [here](https://docs.python.org/2/library/pickle.html#object.__reduce__), it seems `__reduce__` should return `float32` instead of the old name `torch.float32`. This way Python 2 is able to find `float32` in the `torch` module.

> If a string is returned, it names a global variable whose contents are pickled as normal. The string returned by __reduce__() should be the object’s local name relative to its module
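
A minimal sketch of the quoted rule, written for Python 3 and using only the standard library. `FakeDType` and the `fake_torch` module are hypothetical stand-ins, not PyTorch's actual code; the point is only that `__reduce__` returns the local name (`"float32"`), and pickle resolves it inside the owning module.

```python
import pickle
import sys
import types


class FakeDType:
    """Hypothetical stand-in for a module-level singleton such as torch.float32."""

    def __init__(self, name):
        self.name = name

    def __reduce__(self):
        # Return the local name relative to the module ("float32"),
        # not a module-qualified name ("fake_torch.float32").
        return self.name


# Register a throwaway module so pickle can import it by name.
fake_torch = types.ModuleType("fake_torch")
sys.modules["fake_torch"] = fake_torch
FakeDType.__module__ = "fake_torch"  # pickle reads this to decide which module owns the object
fake_torch.float32 = FakeDType("float32")

payload = pickle.dumps(fake_torch.float32)
restored = pickle.loads(payload)
```

Because the string names a global, unpickling returns the very same object rather than a copy, which is the behavior one wants for dtype-like singletons.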

Pull Request resolved: https://github.com/pytorch/pytorch/pull/18045

Differential Revision:

D14990638

Pulled By: ailzhang

fbshipit-source-id:

816b97d63a934a5dda1a910312ad69f120b0b4de

David Riazati [Thu, 18 Apr 2019 18:07:45 +0000 (11:07 -0700)]

Respect order of Parameters in rnn.py (#18198)

Summary:

Previously to get a list of parameters this code was just putting them in the reverse order in which they were defined, which is not always right. This PR allows parameter lists to define the order themselves. To do this parameter lists need to have a corresponding function that provides the names of the parameters.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/18198

Differential Revision:

D14966270

Pulled By: driazati

fbshipit-source-id:

59331aa59408660069785906304b2088c19534b2

Nikolay Korovaiko [Thu, 18 Apr 2019 16:56:02 +0000 (09:56 -0700)]

Refactor EmitLoopCommon to make it more amenable to future extensions (#19341)

Summary:

This PR paves the way for supporting more iterator types in for-in loops.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19341

Differential Revision:

D14992749

Pulled By: Krovatkin

fbshipit-source-id:

e2d4c9465c8ec3fc74fbf23006dcb6783d91795f

Kutta Srinivasan [Thu, 18 Apr 2019 16:31:03 +0000 (09:31 -0700)]

Cleanup init_process_group (#19033)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19033

torch.distributed.init_process_group() has had many parameters added, but the contract isn't clear. Adding documentation, asserts, and explicit args should make this clearer to callers and more strictly enforced.

Reviewed By: mrshenli

Differential Revision:

D14813070

fbshipit-source-id:

80e4e7123087745bed436eb390887db9d1876042

peterjc123 [Thu, 18 Apr 2019 13:57:41 +0000 (06:57 -0700)]

Sync FindCUDA/select_computer_arch.cmake from upstream (#19392)

Summary:

1. Fixes auto detection for Turing cards.

2. Adds Turing Support

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19392

Differential Revision:

D14996142

Pulled By: soumith

fbshipit-source-id:

3cd45c58212cf3db96e5fa19b07d9f1b59a1666a

Alexandros Metsai [Thu, 18 Apr 2019 13:33:18 +0000 (06:33 -0700)]

Update module.py documentation. (#19347)

Summary:

Added the ">>>" Python interpreter prompt (three greater-than symbols) so that the edited lines appear as code, not comments/output, in the documentation. Normally the interpreter would display "..." when expecting a block, but I'm not sure how this would work on the PyTorch docs website. Other code examples seem to use the ">>>" prompt as well, so I used it too.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19347

Differential Revision:

D14986154

Pulled By: soumith

fbshipit-source-id:

8f4d07d71ff7777b46c459837f350eb0a1f17e84

Tongzhou Wang [Thu, 18 Apr 2019 13:33:08 +0000 (06:33 -0700)]

Add device-specific cuFFT plan caches (#19300)

Summary:

Fixes https://github.com/pytorch/pytorch/issues/19224

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19300

Differential Revision:

D14986967

Pulled By: soumith

fbshipit-source-id:

8c31237db50d6924bba1472434c10326610d9255

Mingfei Ma [Thu, 18 Apr 2019 13:31:24 +0000 (06:31 -0700)]

Improve bmm() performance on CPU when input tensor is non-contiguous (#19338)

Summary:

This PR aims to improve Transformer performance on CPU, where `bmm()` is currently one of the major bottlenecks.

The current logic of `bmm()` on CPU only uses MKL batch gemm when the inputs `A` and `B` are contiguous or transposed. So when `A` or `B` is a slice of a larger tensor, it falls back to a slower path.

`A` and `B` are both 3D tensors. MKL is able to handle the batch matrix multiplication when `A.stride(1) == 1 || A.stride(2) == 1` and `B.stride(1) == 1 || B.stride(2) == 1`.

In the [fairseq](https://github.com/pytorch/fairseq) implementation of Transformer, multi-head attention calls `bmm()` in two places, [here](https://github.com/pytorch/fairseq/blob/master/fairseq/modules/multihead_attention.py#L167) and [here](https://github.com/pytorch/fairseq/blob/master/fairseq/modules/multihead_attention.py#L197), where `q`, `k`, `v` are all slices of a larger tensor. So `bmm()` currently falls back to the slow path.
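
To make the stride condition concrete, here is a small pure-Python sketch that works on stride tuples only. The helper names are hypothetical and the real dispatch in PyTorch may apply further checks; this only encodes the condition as stated in the summary.

```python
def contiguous_strides(shape):
    """Row-major strides (in elements) for a tensor of the given shape."""
    strides = []
    step = 1
    for size in reversed(shape):
        strides.append(step)
        step *= size
    return tuple(reversed(strides))


def has_unit_inner_stride(strides):
    """True when a 3D operand satisfies stride(1) == 1 or stride(2) == 1."""
    return strides[1] == 1 or strides[2] == 1


def can_use_batch_gemm(strides_a, strides_b):
    """Both operands must satisfy the unit-stride condition."""
    return has_unit_inner_stride(strides_a) and has_unit_inner_stride(strides_b)


# Slicing the last dimension of a contiguous (4, 5, 24) tensor down to (4, 5, 8)
# keeps the parent's strides, so the slice is non-contiguous but stride(2) is still 1.
parent = contiguous_strides((4, 5, 24))
sliced = parent
# Transposing the last two dimensions of a contiguous (4, 5, 8) tensor swaps those strides.
base = contiguous_strides((4, 5, 8))
transposed = (base[0], base[2], base[1])
# Taking every second element along the last dimension doubles that stride.
strided = (parent[0], parent[1], parent[2] * 2)
```

Under this condition the sliced and transposed operands qualify for the fast path, while the every-second-element view does not.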

Results on Xeon 6148 (20*2 cores 2.5GHz) indicate this PR improves Transformer training performance by **48%** (seconds per iteration reduced from **5.48** to **3.70**). Inference performance should also improve.

Before:

```

| epoch 001: 0%| | 27/25337 [02:27<38:31:26, 5.48s/it, loss=16.871, nll_loss=16.862, ppl=119099.70, wps=865, ups=0, wpb=4715.778, bsz=129.481, num_updates=27, lr=4.05e-06, gnorm=9.133,

```

After:

```

| epoch 001: 0%| | 97/25337 [05:58<25:55:49, 3.70s/it, loss=14.736, nll_loss=14.571, ppl=24339.38, wps=1280, ups=0, wpb=4735.299, bsz=131.134, num_updates=97, lr=1.455e-05, gnorm=3.908,

```

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19338

Differential Revision:

D14986346

Pulled By: soumith

fbshipit-source-id:

827106245af908b8a4fda69ed0288d322b028f08

Sebastian Messmer [Thu, 18 Apr 2019 09:00:51 +0000 (02:00 -0700)]

Optional inputs and outputs (#19289)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19289

Allow optional inputs and outputs in native c10 operators

Reviewed By: dzhulgakov

Differential Revision:

D14931927

fbshipit-source-id:

48f8bec009c6374345b34d933f148c08bb4f7118

Sebastian Messmer [Thu, 18 Apr 2019 09:00:51 +0000 (02:00 -0700)]

Add some tests (#19288)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19288

-

Reviewed By: dzhulgakov

Differential Revision:

D14931924

fbshipit-source-id:

6c53b5d1679080939973d33868e58ca4ad70361d

Sebastian Messmer [Thu, 18 Apr 2019 09:00:49 +0000 (02:00 -0700)]

Use string based schema for exposing caffe2 ops (#19287)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19287

Since we now have a string-schema-based op registration API, we can also use it when exposing caffe2 operators.

Reviewed By: dzhulgakov

Differential Revision:

D14931925

fbshipit-source-id:

ec162469d2d94965e8c99d431c801ae7c43849c8

Sebastian Messmer [Thu, 18 Apr 2019 09:00:49 +0000 (02:00 -0700)]

Allow registering ops without specifying the full schema (#19286)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19286

The operator registration API now allows registering an operator by only giving the operator name and not the full operator schema,

as long as the operator schema can be inferred from the kernel function.

Reviewed By: dzhulgakov

Differential Revision:

D14931921

fbshipit-source-id:

3776ce43d4ce67bb5a3ea3d07c37de96eebe08ba

Sebastian Messmer [Thu, 18 Apr 2019 09:00:49 +0000 (02:00 -0700)]

Add either type (#19285)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19285

The either type is a tagged union with two members.

This is going to be used in a diff stacked on top to allow a function to return one of two types.

Also, generally, either<Error, Result> is a great pattern for returning value_or_error from a function without using exceptions and we could use this class for that later.
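
For readers unfamiliar with the pattern, a rough Python sketch of a two-member tagged union used as value-or-error follows. The actual class in this diff is C++; `Left`, `Right`, and `parse_port` here are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Generic, TypeVar, Union

L = TypeVar("L")
R = TypeVar("R")


@dataclass(frozen=True)
class Left(Generic[L]):
    """First member of the union; by convention it carries the error."""
    value: L


@dataclass(frozen=True)
class Right(Generic[R]):
    """Second member of the union; by convention it carries the result."""
    value: R


def parse_port(text: str) -> Union[Left[str], Right[int]]:
    """Return Right(port) on success or Left(message) on failure, without raising."""
    if not text.isdecimal():
        return Left(f"not a number: {text!r}")
    port = int(text)
    if not 0 < port < 65536:
        return Left(f"out of range: {port}")
    return Right(port)


ok = parse_port("8080")
bad = parse_port("70000")
```

The caller branches on which member it received (for example with `isinstance(ok, Right)`) instead of catching an exception.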

Reviewed By: dzhulgakov

Differential Revision:

D14931923

fbshipit-source-id:

7d1dd77b3e5b655f331444394dcdeab24772ab3a

Sebastian Messmer [Thu, 18 Apr 2019 09:00:49 +0000 (02:00 -0700)]

Allow ops without tensor args if only fallback kernel exists (#19284)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19284

Instantiating a dispatch table previously only worked when the op had a tensor argument we could dispatch on.

However, the legacy API for custom operators didn't have dispatch and also worked for operators without tensor arguments, so we need to continue supporting that.

It probably generally makes sense to support this as long as there's only a fallback kernel and no dispatched kernel registered.

This diff adds that functionality.

Reviewed By: dzhulgakov

Differential Revision:

D14931926

fbshipit-source-id:

38fadcba07e5577a7329466313c89842d50424f9

Sebastian Messmer [Thu, 18 Apr 2019 07:57:44 +0000 (00:57 -0700)]

String-based schemas in op registration API (#19283)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19283

Now that the function schema parser is available in ATen/core, we can use it from the operator registration API to register ops based on string schemas.

This does not allow registering operators based on only the name yet - the full schema string needs to be defined.

A diff stacked on top will add name based registration.

Reviewed By: dzhulgakov

Differential Revision:

D14931919

fbshipit-source-id:

71e490dc65be67d513adc63170dc3f1ce78396cc

Sebastian Messmer [Thu, 18 Apr 2019 07:57:44 +0000 (00:57 -0700)]

Move function schema parser to ATen/core build target (#19282)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19282

This is largely a hack because we need to use the function schema parser from ATen/core

but aren't clear yet on what the final software architecture should look like.

- Add function schema parser files from jit to ATen/core build target.

- Also move ATen/core build target one directory up to allow this.

We only change the build targets and don't move the files yet because this is likely

not the final build set up and we want to avoid repeated interruptions

for other developers. cc zdevito

Reviewed By: dzhulgakov

Differential Revision:

D14931922

fbshipit-source-id:

26462e2e7aec9e0964706138edd3d87a83b964e3

Lu Fang [Thu, 18 Apr 2019 07:33:00 +0000 (00:33 -0700)]

update of fbcode/onnx to

ad7313470a9119d7e1afda7edf1d654497ee80ab (#19339)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19339

Previous import was

971311db58f2fa8306d15e1458b5fd47dbc8d11c

Included changes:

- **[ad731347](https://github.com/onnx/onnx/commit/ad731347)**: Fix shape inference for matmul (#1941) <Bowen Bao>

- **[3717dc61](https://github.com/onnx/onnx/commit/3717dc61)**: Shape Inference Tests for QOps (#1929) <Ashwini Khade>

- **[a80c3371](https://github.com/onnx/onnx/commit/a80c3371)**: Prevent unused variables from generating warnings across all platforms. (#1930) <Pranav Sharma>

- **[be9255c1](https://github.com/onnx/onnx/commit/be9255c1)**: add title (#1919) <Prasanth Pulavarthi>

- **[7a112a6f](https://github.com/onnx/onnx/commit/7a112a6f)**: add quantization ops in onnx (#1908) <Ashwini Khade>

- **[6de42d7d](https://github.com/onnx/onnx/commit/6de42d7d)**: Create working-groups.md (#1916) <Prasanth Pulavarthi>

Reviewed By: yinghai

Differential Revision:

D14969962

fbshipit-source-id:

5ec64ef7aee5161666ed0c03e201be0ae20826f9

Roy Li [Thu, 18 Apr 2019 07:18:35 +0000 (00:18 -0700)]

Remove copy and copy_ special case on Type (#18972)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/18972

ghimport-source-id:

b5d3012b00530145fa24ab0cab693a7e80cb5989

Differential Revision:

D14816530

Pulled By: li-roy

fbshipit-source-id:

9c7a166abb22d2cd1f81f352e44d9df1541b1774

Spandan Tiwari [Thu, 18 Apr 2019 07:06:59 +0000 (00:06 -0700)]

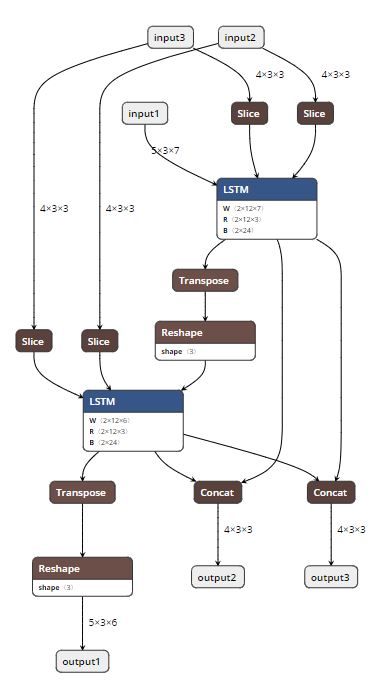

Add constant folding to ONNX graph during export (Resubmission) (#18698)

Summary:

Rewritten version of https://github.com/pytorch/pytorch/pull/17771 using graph C++ APIs.

This PR adds the ability to do constant folding on ONNX graphs during PT->ONNX export. This is done mainly to optimize the graph and make it leaner. The two attached snapshots show a multiple-node LSTM model before and after constant folding.

A couple of notes:

1. Constant folding is by default turned off for now. The goal is to turn it on by default once we have validated it through all the tests.

2. Support for folding in nested blocks is not in place, but will be added in the future, if needed.
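
As a toy illustration of what constant folding means (this is not the exporter's code, which works on the JIT graph through the C++ APIs), the sketch below folds a flat, topologically ordered node list. The node format, the `OPS` table, and `fold_constants` are all assumptions made for the example.

```python
import operator

# Hypothetical op table for the toy graph; real ONNX ops operate on tensors.
OPS = {"Add": operator.add, "Mul": operator.mul}


def fold_constants(nodes, constants):
    """Fold every node whose inputs are all known at export time.

    nodes: list of (output_name, op, input_names) in topological order.
    constants: dict mapping names to values known at export time.
    Returns (remaining_nodes, known_values).
    """
    known = dict(constants)
    remaining = []
    for out, op, inputs in nodes:
        if all(name in known for name in inputs):
            # Precompute the value and drop the node from the graph.
            known[out] = OPS[op](*(known[name] for name in inputs))
        else:
            remaining.append((out, op, inputs))
    return remaining, known


nodes = [
    ("w_scaled", "Mul", ("w", "scale")),  # both inputs constant: foldable
    ("bias2", "Add", ("w_scaled", "b")),  # foldable once w_scaled is known
    ("y", "Mul", ("x", "bias2")),         # depends on runtime input x: kept
]
remaining, known = fold_constants(nodes, {"w": 3, "scale": 2, "b": 4})
```

Only the node that depends on the runtime input survives, which is the sense in which the folded graph is leaner.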

**Original Model:**

**Constant-folded model:**

Pull Request resolved: https://github.com/pytorch/pytorch/pull/18698

Differential Revision:

D14889768

Pulled By: houseroad

fbshipit-source-id:

b6616b1011de9668f7c4317c880cb8ad4c7b631a

Roy Li [Thu, 18 Apr 2019 06:52:44 +0000 (23:52 -0700)]

Remove usages of TypeID (#19183)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19183

ghimport-source-id:

9af190b072523459fa61e5e79419b88ac8586a4d

Differential Revision:

D14909203

Pulled By: li-roy

fbshipit-source-id:

d716179c484aebfe3ec30087c5ecd4a11848ffc3

Sebastian Messmer [Thu, 18 Apr 2019 06:41:22 +0000 (23:41 -0700)]

Fixing function schema parser for Android (#19281)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19281

String<->Number conversions aren't available in the STL used in our Android environment.

This diff adds workarounds for that so that the function schema parser can be compiled for Android.

Reviewed By: dzhulgakov

Differential Revision:

D14931649

fbshipit-source-id:

d5d386f2c474d3742ed89e52dff751513142efad

Sebastian Messmer [Thu, 18 Apr 2019 06:41:21 +0000 (23:41 -0700)]

Split function schema parser from operator (#19280)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19280

We want to use the function schema parser from ATen/core, but with as few dependencies as possible.

This diff moves the function schema parser into its own file and removes some of its dependencies.

Reviewed By: dzhulgakov

Differential Revision:

D14931651

fbshipit-source-id:

c2d787202795ff034da8cba255b9f007e69b4aea

Ailing Zhang [Thu, 18 Apr 2019 06:38:26 +0000 (23:38 -0700)]

fix hub doc format

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/19396

Differential Revision:

D14993859

Pulled By: ailzhang

fbshipit-source-id:

bdf94e54ec35477cfc34019752233452d84b6288

Mikhail Zolotukhin [Thu, 18 Apr 2019 05:05:49 +0000 (22:05 -0700)]

Clang-format torch/csrc/jit/passes/quantization.cpp. (#19385)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19385

ghimport-source-id:

67f808db7dcbcb6980eac79a58416697278999b0

Differential Revision:

D14991917

Pulled By: ZolotukhinM

fbshipit-source-id:

6c2e57265cc9f0711752582a04d5a070482ed1e6

Shen Li [Thu, 18 Apr 2019 04:18:49 +0000 (21:18 -0700)]

Allow DDP to wrap multi-GPU modules (#19271)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19271

allow DDP to take multi-gpu models

Reviewed By: pietern

Differential Revision:

D14822375

fbshipit-source-id:

1eebfaa33371766d3129f0ac6f63a573332b2f1c

Jiyan Yang [Thu, 18 Apr 2019 04:07:42 +0000 (21:07 -0700)]

Add validator for optimizers when parameters are shared

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/18497

Reviewed By: kennyhorror

Differential Revision:

D14614738

fbshipit-source-id:

beddd8349827dcc8ccae36f21e5d29627056afcd

Ailing Zhang [Thu, 18 Apr 2019 04:01:36 +0000 (21:01 -0700)]

hub minor fixes (#19247)

Summary:

A few improvements made while working on the BERT model

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19247

Differential Revision:

D14989345

Pulled By: ailzhang

fbshipit-source-id:

f4846813f62b6d497fbe74e8552c9714bd8dc3c7

Elias Ellison [Thu, 18 Apr 2019 02:52:16 +0000 (19:52 -0700)]

fix wrong schema (#19370)

Summary:

Op was improperly schematized previously. Evidently checkScript does not test if the outputs are the same type.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19370

Differential Revision:

D14985159

Pulled By: eellison

fbshipit-source-id:

feb60552afa2a6956d71f64801f15e5fe19c3a91

Mikhail Zolotukhin [Thu, 18 Apr 2019 01:34:50 +0000 (18:34 -0700)]

Fix printing format in examples in jit/README.md. (#19323)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19323

ghimport-source-id:

74a01917de70c9d59099cf601b24f3cb484ab7be

Differential Revision:

D14990100

Pulled By: ZolotukhinM

fbshipit-source-id:

87ede08c8ca8f3027b03501fbce8598379e8b96c

Eric Faust [Wed, 17 Apr 2019 23:59:34 +0000 (16:59 -0700)]

Allow for single-line deletions in clang_tidy.py (#19082)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19082

When you have just one line of deletions, just as with additions, there is no count printed.

Without this fix, we ignore all globs with single-line deletions when selecting which lines were changed.

When all the changes in the file were single-line, this meant no line-filtering at all!
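
The underlying rule is that a unified-diff hunk header omits the line count when it equals 1, on either side. A minimal sketch of a parser that accepts both forms is below; the regex and function name are illustrative and not copied from clang_tidy.py.

```python
import re

# Both counts are optional: "@@ -10 +10,2 @@" means one line on the old side.
HUNK_HEADER = re.compile(r"^@@ -(\d+)(?:,(\d+))? \+(\d+)(?:,(\d+))? @@")


def parse_hunk_header(line):
    """Return (old_start, old_count, new_start, new_count), or None if not a hunk header."""
    match = HUNK_HEADER.match(line)
    if match is None:
        return None
    old_start, old_count, new_start, new_count = match.groups()
    return (
        int(old_start),
        int(old_count) if old_count is not None else 1,  # omitted count means 1
        int(new_start),
        int(new_count) if new_count is not None else 1,
    )


single_deletion = parse_hunk_header("@@ -10 +10,2 @@ def f():")
single_addition = parse_hunk_header("@@ -3,4 +3 @@")
```

A pattern that requires the `,count` part on the deletion side would skip the first header entirely, which matches the failure described above.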

Differential Revision:

D14860426

fbshipit-source-id:

c60e9d84f9520871fc0c08fa8c772c227d06fa27

Michael Suo [Wed, 17 Apr 2019 23:48:28 +0000 (16:48 -0700)]

Revert

D14901379: [jit] Add options to Operator to enable registration of alias analysis passes

Differential Revision:

D14901379

Original commit changeset:

d92a497e280f

fbshipit-source-id:

51d31491ab90907a6c95af5d8a59dff5e5ed36a4

Michael Suo [Wed, 17 Apr 2019 23:48:28 +0000 (16:48 -0700)]

Revert

D14901485: [jit] Only require python print on certain namespaces

Differential Revision:

D14901485

Original commit changeset:

4b02a66d325b

fbshipit-source-id:

93348056c00f43c403cbf0d34f8c565680ceda11

Yinghai Lu [Wed, 17 Apr 2019 23:40:58 +0000 (16:40 -0700)]

Remove unused template parameter in OnnxifiOp (#19362)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/19362

`float` type is never used in OnnxifiOp....

Reviewed By: bddppq

Differential Revision:

D14977970

fbshipit-source-id:

8fee02659dbe408e5a3e0ff95d74c04836c5c281

Jerry Zhang [Wed, 17 Apr 2019 23:10:05 +0000 (16:10 -0700)]

Add empty_quantized (#18960)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/18960

empty_affine_quantized creates an empty affine quantized Tensor from scratch.

We might need this when we implement quantized operators.

Differential Revision:

D14810261

fbshipit-source-id:

f07d8bf89822d02a202ee81c78a17aa4b3e571cc