Bert Maher [Sun, 29 Aug 2021 02:57:10 +0000 (19:57 -0700)]

[nnc] Make 64-bit dimensions work (#64077)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64077

We were assuming kernel dimensions fit in 32 bits (the old fuser made

this assumption too), but we should be able to support 64.

ghstack-source-id:

136933272

Test Plan: unit tests; new IR level test with huge sizes

Reviewed By: ZolotukhinM

Differential Revision:

D30596689

fbshipit-source-id:

23b7e393a2ebaecb0c391a6b1f0c4b05a98bcc94

Bert Maher [Sun, 29 Aug 2021 02:57:10 +0000 (19:57 -0700)]

Parse int64 sizes/strides (#64076)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64076

We were parsing sizes into int32s, so if you had a tensor with more

than 2^31 - 1 elements, you couldn't represent it.
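
As a rough illustration of the limit (a sketch using Python's `ctypes`, not the NNC parser itself), the size of 4e9 from the test plan wraps around when stored in a signed 32-bit integer but survives in a 64-bit one. The helper name `fits_in_int32` is made up for this example.

```python
import ctypes

INT32_MAX = 2**31 - 1  # largest value a signed 32-bit integer can hold


def fits_in_int32(value: int) -> bool:
    """Return True if value is representable as a signed 32-bit integer."""
    return -(2**31) <= value <= INT32_MAX


size = 4_000_000_000  # the size used in the test plan (4e9)

# ctypes integer types do no overflow checking, so the value silently wraps.
as_int32 = ctypes.c_int32(size).value
as_int64 = ctypes.c_int64(size).value

print(fits_in_int32(size), as_int32, as_int64)
```

The wrapped 32-bit value is negative, which is why a parser that stores sizes as int32 cannot round-trip such a tensor shape.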

ghstack-source-id:

136933273

Test Plan: parseIR with size of 4e9

Reviewed By: ZolotukhinM

Differential Revision:

D30521116

fbshipit-source-id:

1e28e462cba52d648e0e2acb4e234d86aae25a3e

Bert Maher [Sun, 29 Aug 2021 02:18:10 +0000 (19:18 -0700)]

[nnc] Fix batchnorm implementation (#64112)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64112

Fixes #64062

Test Plan: Imported from OSS

Reviewed By: zhxchen17

Differential Revision:

D30622897

Pulled By: bertmaher

fbshipit-source-id:

7d7c6131aa786e61fa1d0a517288396a0bdb1d22

Ilqar Ramazanli [Sat, 28 Aug 2021 22:54:53 +0000 (15:54 -0700)]

To add RMSProp algorithm documentation (#63721)

Summary:

It has been discussed before that adding descriptions of optimization algorithms to the PyTorch Core documentation may result in a nice optimization research tutorial. The following tracking issue lists all the necessary algorithms and links to the originally published papers: https://github.com/pytorch/pytorch/issues/63236.

In this PR we add a description of RMSProp to the documentation. For more details, we refer to the lecture slides https://www.cs.toronto.edu/~tijmen/csc321/slides/lecture_slides_lec6.pdf

<img width="464" alt="RMSProp" src="https://user-images.githubusercontent.com/73658284/131179226-3fb6fe5a-5301-4948-afbe-f38bf57f24ff.png">
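
For readers without the image, the basic RMSProp update can be sketched in plain Python. This is a minimal scalar version without momentum or centering; the hyperparameter values (`lr=0.01`, `alpha=0.99`, `eps=1e-8`) and the function names are assumptions for illustration, not a copy of the documented algorithm box.

```python
import math


def rmsprop_step(param, grad, square_avg, lr=0.01, alpha=0.99, eps=1e-8):
    """One plain RMSProp update for a scalar parameter."""
    # Exponential moving average of the squared gradient.
    square_avg = alpha * square_avg + (1.0 - alpha) * grad * grad
    # Scale the step by the root of that running average.
    param = param - lr * grad / (math.sqrt(square_avg) + eps)
    return param, square_avg


def minimize_quadratic(x0=5.0, steps=2000):
    """Minimize f(x) = x**2, whose gradient is 2*x."""
    x, v = x0, 0.0
    for _ in range(steps):
        x, v = rmsprop_step(x, 2.0 * x, v)
    return x


x_final = minimize_quadratic()
print(x_final)
```

Dividing by the running root-mean-square keeps the step size roughly independent of the gradient's scale, which is the core idea of the method.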

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63721

Reviewed By: albanD

Differential Revision:

D30612426

Pulled By: iramazanli

fbshipit-source-id:

c3ac630a9658d1282866b53c86023ac10cf95398

Facebook Community Bot [Sat, 28 Aug 2021 18:50:49 +0000 (11:50 -0700)]

Automated submodule update: FBGEMM (#64129)

Summary:

This is an automated pull request to update the first-party submodule for [pytorch/FBGEMM](https://github.com/pytorch/FBGEMM).

New submodule commit: https://github.com/pytorch/FBGEMM/commit/f14e79481460a7c0dedf452a258072231cb343e6

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64129

Test Plan: Ensure that CI jobs succeed on GitHub before landing.

Reviewed By: jspark1105

Differential Revision:

D30621549

fbshipit-source-id:

34c109e75c96a261bf370f7a06dbb8b9004860ab

Nikita Shulga [Sat, 28 Aug 2021 18:46:40 +0000 (11:46 -0700)]

Move Parallel[Native|TBB] to GHA (#64123)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/64123

Reviewed By: driazati

Differential Revision:

D30620966

Pulled By: malfet

fbshipit-source-id:

9a23e4b3e16870f77bf18df4370cd468603d592d

Tugsbayasgalan (Tugsuu) Manlaibaatar [Sat, 28 Aug 2021 18:44:58 +0000 (11:44 -0700)]

Enhancement for smart serialization for out schemas (#63096)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/63096

Test Plan: Imported from OSS

Reviewed By: gmagogsfm

Differential Revision:

D30415255

Pulled By: tugsbayasgalan

fbshipit-source-id:

eb40440a3b46258394d035479f5fc4a4baa12bcc

Priya Ramani [Sat, 28 Aug 2021 05:50:20 +0000 (22:50 -0700)]

[Light] Fix error message (#64010)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64010

Fixing typos in an error message

Test Plan:

Error message before fix:

Lite Interpreter verson number does not match. The model version must be between 3 and 5But the model version is 6

Error message after fix:

Lite Interpreter version number does not match. The model version must be between 3 and 5 but the model version is 6

Reviewed By: larryliu0820

Differential Revision:

D30568367

fbshipit-source-id:

205f3278ee8dcf38579dbb828580a9e986ccacc1

Jerry Zhang [Sat, 28 Aug 2021 03:58:20 +0000 (20:58 -0700)]

[quant][graphmode][fx] Add reference quantized linear module (#63627)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63627

Added a reference quantized linear module for the custom backend flow. The reference quantized module will

have the following code:

```

w(float) -- quant - dequant \

x(float) ------------- F.linear ---

```

In the full model, we will see

```

w(float) -- quant - *dequant \

x -- quant --- *dequant -- *F.linear --- *quant - dequant

```

and the backend should be able to fuse the ops with `*` into a quantized linear
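
The weight path in the diagrams above (quantize, then dequantize, then a float linear) can be sketched in plain Python. This is only an illustration of the pattern under assumed affine int8 quantization; the function names, `scale`, and `zero_point` handling are hypothetical and do not reflect the module's actual code.

```python
def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Affine-quantize floats to clamped integers."""
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]


def dequantize(qvalues, scale, zero_point):
    """Map quantized integers back to floats."""
    return [(q - zero_point) * scale for q in qvalues]


def linear(x, weight_rows, bias):
    """Plain float linear layer: one output per weight row."""
    return [
        sum(xi * wi for xi, wi in zip(x, row)) + b
        for row, b in zip(weight_rows, bias)
    ]


def reference_quantized_linear(x, weight_rows, bias, w_scale, w_zero_point):
    """w(float) -> quant -> dequant, then a float linear on x."""
    w_dq = [
        dequantize(quantize(row, w_scale, w_zero_point), w_scale, w_zero_point)
        for row in weight_rows
    ]
    return linear(x, w_dq, bias)


out = reference_quantized_linear([1.0, 2.0], [[0.5, -0.26]], [0.0], 0.1, 0)
print(out)
```

Because the computation stays in float, the module reproduces the rounding error of quantization while leaving a backend free to replace the dequant, linear, quant sequence with a fused kernel.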

Test Plan:

python test/test_quantization.py TestQuantizeFx.test_conv_linear_reference

Imported from OSS

Reviewed By: vkuzo

Differential Revision:

D30504750

fbshipit-source-id:

5729921745c2b6a0fb344efc3689f3b170e89500

Yuchen Huang [Sat, 28 Aug 2021 01:57:22 +0000 (18:57 -0700)]

[iOS][GPU] Consolidate array and non-array kernel for hardswish (#63369)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63369

ghstack-source-id:

136918152

(Note: this ignores all push blocking failures!)

Test Plan:

- `buck test pp-macos`

- Op tests in PyTorchPlayground app

- Run mobilenetv3 test

https://pxl.cl/1Ncls

Reviewed By: xta0

Differential Revision:

D30354454

fbshipit-source-id:

88bf4f8b5871e63170161b3f3e44f99b8a3086c6

Ilqar Ramazanli [Sat, 28 Aug 2021 01:51:09 +0000 (18:51 -0700)]

To add Nesterov Adam algorithm description to documentation (#63793)

Summary:

It has been discussed before that adding descriptions of optimization algorithms to the PyTorch Core documentation may result in a nice optimization research tutorial. The following tracking issue lists all the necessary algorithms and links to the originally published papers: https://github.com/pytorch/pytorch/issues/63236.

In this PR we add a description of the Nesterov Adam algorithm to the documentation. For more details, we refer to the paper https://openreview.net/forum?id=OM0jvwB8jIp57ZJjtNEZ

<img width="439" alt="NAdam" src="https://user-images.githubusercontent.com/73658284/131185124-e81b2edf-33d9-4a9d-a7bf-f7e5eea47d7c.png">
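
As a rough companion to the image, here is a scalar sketch of one common formulation of NAdam (Adam with a Nesterov-style look-ahead on the momentum term). The momentum schedule constants (`0.96`, `momentum_decay=0.004`) and all other hyperparameters are assumptions for illustration and should be checked against the paper before being relied on.

```python
import math


def nadam_minimize(grad_fn, x0, steps=500, lr=0.1, beta1=0.9, beta2=0.999,
                   eps=1e-8, momentum_decay=0.004):
    """Minimize a scalar function with a NAdam-style update (sketch)."""
    x, m, v = x0, 0.0, 0.0
    mu_product = 1.0  # running product mu_1 * ... * mu_t
    for t in range(1, steps + 1):
        g = grad_fn(x)
        # Assumed momentum schedule for the current and the next step.
        mu_t = beta1 * (1.0 - 0.5 * 0.96 ** (t * momentum_decay))
        mu_next = beta1 * (1.0 - 0.5 * 0.96 ** ((t + 1) * momentum_decay))
        mu_product *= mu_t
        # Adam-style first and second moment estimates.
        m = beta1 * m + (1.0 - beta1) * g
        v = beta2 * v + (1.0 - beta2) * g * g
        # Nesterov look-ahead: blend next-step momentum with the raw gradient.
        m_hat = (mu_next * m / (1.0 - mu_product * mu_next)
                 + (1.0 - mu_t) * g / (1.0 - mu_product))
        v_hat = v / (1.0 - beta2 ** t)
        x -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return x


x_final = nadam_minimize(lambda x: 2.0 * x, x0=5.0)  # gradient of x**2
print(x_final)
```

Compared with plain Adam, the only change in this sketch is how `m_hat` is formed: it mixes the bias-corrected momentum for the next step with the current gradient.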

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63793

Reviewed By: NivekT

Differential Revision:

D30617057

Pulled By: iramazanli

fbshipit-source-id:

cd2054b0d9b6883878be74576e86e307f32f1435

Mike Iovine [Sat, 28 Aug 2021 00:37:05 +0000 (17:37 -0700)]

[Static Runtime] Optimize memory planner initialization (#64101)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64101

Checking `getOutOfPlaceOperation(n)` is a very expensive operation, especially in multithreaded environments, due to a lock acquisition when the NNC cache is queried. This slows down the memory planner initialization time, and by extension, the latency for the first static runtime inference.

There are two optimizations in this diff:

* Cache the result of `p_node->has_out_variant()` to avoid the call to `getOutOfPlaceOperation`. This speeds up calls to `canReuseInputOutputs`, which in turn speeds up `isOptimizableContainerType`

* Precompute all `isOptimizableContainerType` during static runtime initialization to avoid a pass over all of each node's inputs.
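
The first optimization is ordinary memoization of an expensive query. A minimal sketch of the idea in Python follows; `FakeNode`, `slow_lookup`, and the registry contents are hypothetical stand-ins, not the Static Runtime classes.

```python
class FakeNode:
    """Hypothetical stand-in for a graph node; not the Static Runtime class."""

    def __init__(self, op_name, registry_lookup):
        self.op_name = op_name
        self._registry_lookup = registry_lookup  # expensive, e.g. takes a lock
        self._has_out_variant = None  # filled in on the first query

    def has_out_variant(self):
        # Pay for the expensive lookup at most once per node.
        if self._has_out_variant is None:
            self._has_out_variant = self._registry_lookup(self.op_name) is not None
        return self._has_out_variant


lookup_calls = []


def slow_lookup(op_name):
    """Hypothetical registry query that records how often it is called."""
    lookup_calls.append(op_name)
    return {"aten::fmod": "fmod_out"}.get(op_name)


node = FakeNode("aten::fmod", slow_lookup)
results = [node.has_out_variant() for _ in range(3)]
print(results, len(lookup_calls))
```

Three queries trigger a single registry lookup, which is the saving the commit describes for repeated `canReuseInputOutputs` checks.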

Test Plan: All unit tests pass: `buck test caffe2/benchmarks/static_runtime/...`

Reviewed By: movefast1990

Differential Revision:

D30595579

fbshipit-source-id:

70aaa7af9589c739c672788bf662f711731864f2

Mikhail Zolotukhin [Fri, 27 Aug 2021 23:15:55 +0000 (16:15 -0700)]

[TensorExpr] Update tutorial. (#64109)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/64109

Test Plan: Imported from OSS

Reviewed By: bertmaher

Differential Revision:

D30614050

Pulled By: ZolotukhinM

fbshipit-source-id:

e8f9bd9ef2483e6eafbc0bd5394d311cd694c7b2

Eli Uriegas [Fri, 27 Aug 2021 23:02:49 +0000 (16:02 -0700)]

.github: Add cpp_docs job to current gcc5 workflow (#64044)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64044

Adds the cpp_docs job to the current workflow, also modifies the scripts

surrounding building docs so that they can be powered through

environment variables with sane defaults rather than having to have

passed arguments.

Ideally should not break current jobs running in circleci but those

should eventually be turned off anyways.

Coincides with work from:

* https://github.com/seemethere/upload-artifact-s3/pull/1

* https://github.com/seemethere/upload-artifact-s3/pull/2

Signed-off-by: Eli Uriegas <eliuriegas@fb.com>

cc ezyang seemethere malfet walterddr lg20987 pytorch/pytorch-dev-infra

Test Plan: Imported from OSS

Reviewed By: malfet

Differential Revision:

D30610010

Pulled By: seemethere

fbshipit-source-id:

f67adeb1bd422bb9e24e0f1ec0098cf9c648f283

soulitzer [Fri, 27 Aug 2021 21:59:08 +0000 (14:59 -0700)]

Update codegen to use boxed kernel (#63459)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63459

- Replaces the usual registration basically when "requires_derivative" is True (as in we still need a grad_fn), but `fn.info` is `None` (TODO maybe make sure differentiable inputs > 0 also to match requires_derivative).

- Adds some (temporary?) fixes to some sparse functions. See: https://github.com/pytorch/pytorch/issues/63549

- To remove the codegen that generates NotImplemented node (though that should only be one line), because there are some ops listed under `RESET_GRAD_ACCUMULATOR` that have an extra function call. We would need to make this list of ops available to c++, but this would either mean we'd have to codegen a list of strings, or move the RESET_GRAD_ACCUMULATOR to cpp land. We could do this in a future PR if necessary.

Test Plan: Imported from OSS

Reviewed By: heitorschueroff

Differential Revision:

D30518571

Pulled By: soulitzer

fbshipit-source-id:

99a35cbced46292d1b4e51594ae4d534c2caf8b6

soulitzer [Fri, 27 Aug 2021 21:59:08 +0000 (14:59 -0700)]

Add autograd not implemented boxed fallback (#63458)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63458

See description and discussion from https://github.com/pytorch/pytorch/pull/62450

Test Plan: Imported from OSS

Reviewed By: heitorschueroff

Differential Revision:

D30518572

Pulled By: soulitzer

fbshipit-source-id:

3b1504d49abb84560ae17077f0dec335749c9882

Jessica Choi [Fri, 27 Aug 2021 21:46:31 +0000 (14:46 -0700)]

Removing references to ProcessGroupAgent in comments (#64051)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64051

cc pietern mrshenli pritamdamania87 zhaojuanmao satgera rohan-varma gqchen aazzolini osalpekar jiayisuse agolynski SciPioneer H-Huang mrzzd cbalioglu gcramer23

Test Plan: Imported from OSS

Reviewed By: mrshenli

Differential Revision:

D30587076

Pulled By: jaceyca

fbshipit-source-id:

414cb95faad0b4da0eaf2956c0668af057f93574

Erjia Guan [Fri, 27 Aug 2021 21:15:23 +0000 (14:15 -0700)]

Add README to datapipes (#63982)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63982

Add a README to `datapipes` for developers. This can be a replacement for https://github.com/pytorch/pytorch/blob/master/torch/utils/data/datapipes_tutorial_dev_loaders.ipynb

After this PR is landed, the README.md will be added to PyTorch Wiki

Test Plan: Imported from OSS

Reviewed By: soulitzer

Differential Revision:

D30554198

Pulled By: ejguan

fbshipit-source-id:

6091aae8ef915c7c1f00fbf45619c86c9558d308

Vincent Phan [Fri, 27 Aug 2021 20:51:38 +0000 (13:51 -0700)]

Implement leaky relu op

Summary: Implemented leaky relu op as per: https://www.internalfb.com/tasks/?t=97492679

Test Plan:

buck build -c ndk.custom_libcxx=false -c pt.enable_qpl=0 //xplat/caffe2:pt_vulkan_api_test_binAndroid\#android-arm64 --show-output

adb push buck-out/gen/xplat/caffe2/pt_vulkan_api_test_binAndroid\#android-arm64 /data/local/tmp/vulkan_api_test

adb shell "/data/local/tmp/vulkan_api_test"

all tests pass, including new ones

Reviewed By: SS-JIA

Differential Revision:

D30186225

fbshipit-source-id:

fdb1f8f7b3a28b5504581822185c0475dcd53a3e

Patrick Hu [Fri, 27 Aug 2021 20:37:38 +0000 (13:37 -0700)]

[FX] Validate data type of target on Node Construction (#64050)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/64050

Test Plan: Imported from OSS

Reviewed By: jamesr66a

Differential Revision:

D30585535

Pulled By: yqhu

fbshipit-source-id:

96778a87e75f510b4ef42f0e5cf76b35b7b2f331

Ivan Yashchuk [Fri, 27 Aug 2021 20:21:04 +0000 (13:21 -0700)]

Sparse CUDA: rename files *.cu -> *.cpp (#63894)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63894

This PR introduces a few code structure changes. There is no need to use

.cu extension for pure c++ code without cuda. Moved

`s_addmm_out_csr_sparse_dense_cuda_worker` to a separate cpp file from

cu file.

cc nikitaved pearu cpuhrsch IvanYashchuk ngimel

Test Plan: Imported from OSS

Reviewed By: malfet

Differential Revision:

D30548771

Pulled By: cpuhrsch

fbshipit-source-id:

6f12d36e7e506d2fdbd57ef33eb73192177cd904

Scott Wolchok [Fri, 27 Aug 2021 19:55:26 +0000 (12:55 -0700)]

[PyTorch] Reduce code size of register_prim_ops.cpp (#61494)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61494

Creating a constexpr array and then looping over it is much cheaper than emitting a function call per item.

ghstack-source-id:

136639302

Test Plan:

fitsships

Buildsizebot some mobile apps to check size impact.

Reviewed By: dhruvbird, iseeyuan

Differential Revision:

D29646977

fbshipit-source-id:

6144999f6acfc4e5dcd659845859702051344d88

Marjan Fariborz [Fri, 27 Aug 2021 19:45:01 +0000 (12:45 -0700)]

Adding alltoall_single collective to collective quantization API (#63154)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63154

The collective quantization API now supports alltoall, alltoall_single, and allscatter. The test is also included.

ghstack-source-id:

136856877

Test Plan: buck test mode/dev-nosan //caffe2/test/distributed/algorithms/quantization:DistQuantizationTests_nccl -- test_all_to_all_single_bfp16

Reviewed By: wanchaol

Differential Revision:

D30255251

fbshipit-source-id:

856f4fa12de104689a03a0c8dc9e3ecfd41cad29

albanD [Fri, 27 Aug 2021 18:53:27 +0000 (11:53 -0700)]

New TLS to disable forward mode AD (#63117)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/63117

Test Plan: Imported from OSS

Reviewed By: ngimel

Differential Revision:

D30388097

Pulled By: albanD

fbshipit-source-id:

f1bc777064645db1ff848bdd64af95bffb530984

Karen Zhou [Fri, 27 Aug 2021 18:51:09 +0000 (11:51 -0700)]

[pruner] add README to repo (#64099)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64099

adding readme to pruner in OSS

ghstack-source-id:

136867516

Test Plan: should not affect behavior

Reviewed By: z-a-f

Differential Revision:

D30608045

fbshipit-source-id:

3e9899a853395b2e91e8a69a5d2ca5f3c2acc646

mrshenli [Fri, 27 Aug 2021 18:28:31 +0000 (11:28 -0700)]

Improve `distributed.get_rank()` API docstring (#63296)

Summary:

See discussion in https://pytorch.slack.com/archives/CBHSWPNM7/p1628792389008600

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63296

Reviewed By: cbalioglu

Differential Revision:

D30332042

Pulled By: mrshenli

fbshipit-source-id:

3a642fda2e106fd35b67709ed2adb60e408854c2

Joel Schlosser [Fri, 27 Aug 2021 18:28:03 +0000 (11:28 -0700)]

Modules note v2 (#63963)

Summary:

This PR expands the [note on modules](https://pytorch.org/docs/stable/notes/modules.html) with additional info for 1.10.

It adds the following:

* Examples of using hooks

* Examples of using apply()

* Examples for ParameterList / ParameterDict

* register_parameter() / register_buffer() usage

* Discussion of train() / eval() modes

* Distributed training overview / links

* TorchScript overview / links

* Quantization overview / links

* FX overview / links

* Parametrization overview / link to tutorial

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63963

Reviewed By: albanD

Differential Revision:

D30606604

Pulled By: jbschlosser

fbshipit-source-id:

c1030b19162bcb5fe7364bcdc981a2eb6d6e89b4

Tugsbayasgalan (Tugsuu) Manlaibaatar [Fri, 27 Aug 2021 18:18:52 +0000 (11:18 -0700)]

Detect out argument in the schema (#62755)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62755

After this change, out argument can be checked by calling is_out()

Test Plan: Imported from OSS

Reviewed By: mruberry

Differential Revision:

D30415256

Pulled By: tugsbayasgalan

fbshipit-source-id:

b2e1fa46bab7c813aaede1f44149081ef2df566d

Don Jang [Fri, 27 Aug 2021 17:42:50 +0000 (10:42 -0700)]

[Static Runtime] Add out variant of quantized::embedding_bag_byte_prepack (#64081)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64081

This change adds an out variant of `quantized::embedding_bag_byte_prepack`.

Test Plan:

- Added `ShapeInferenceTest.QEmbeddingBagByteUnpack`.

- Observed

```

V0824 13:38:49.723708 1322143 impl.cpp:1394] Switch to out variant for node: %2 : Tensor = quantized::embedding_bag_byte_prepack(%input)

```

Reviewed By: hlu1

Differential Revision:

D30504216

fbshipit-source-id:

1d9d428e77a15bcc7da373d65e7ffabaf9c6caf2

BBuf [Fri, 27 Aug 2021 17:42:24 +0000 (10:42 -0700)]

fix resize bug (#61166)

Summary:

I think the original intention here is to only take effect in the case of align_corners (because output_size = 1 and the divisor will be 0), but it affects non-align_corners too. For example:

```python

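import numpy as np

import torch

# float32 input: bilinear interpolation expects a floating-point tensor

input = torch.tensor(

    np.arange(1, 5, dtype=np.float32).reshape((1, 1, 2, 2)))

m = torch.nn.Upsample(scale_factor=0.5, mode="bilinear")

of_out = m(input)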

```

The result we expect should be [[[[2.5]]]],

but PyTorch returns [[[[1.0]]]], which differs from OpenCV and PIL. This PR tries to fix it.
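
The expected value can be checked by hand with a small pure-Python bilinear resize. This sketch assumes the half-pixel-center convention for the non-align_corners case (source coordinate `(dst + 0.5) * scale - 0.5`); it is an illustration of the arithmetic, not PyTorch's kernel, and the function name is made up.

```python
def bilinear_resize(image, out_h, out_w):
    """Bilinear resize of a 2D list using half-pixel centers."""
    in_h, in_w = len(image), len(image[0])
    scale_h, scale_w = in_h / out_h, in_w / out_w
    out = []
    for oy in range(out_h):
        # Map the output pixel center back into input coordinates.
        sy = max((oy + 0.5) * scale_h - 0.5, 0.0)
        y0 = min(int(sy), in_h - 1)
        y1 = min(y0 + 1, in_h - 1)
        wy = sy - y0
        row = []
        for ox in range(out_w):
            sx = max((ox + 0.5) * scale_w - 0.5, 0.0)
            x0 = min(int(sx), in_w - 1)
            x1 = min(x0 + 1, in_w - 1)
            wx = sx - x0
            # Interpolate along x on both rows, then along y.
            top = image[y0][x0] * (1 - wx) + image[y0][x1] * wx
            bottom = image[y1][x0] * (1 - wx) + image[y1][x1] * wx
            row.append(top * (1 - wy) + bottom * wy)
        out.append(row)
    return out


result = bilinear_resize([[1.0, 2.0], [3.0, 4.0]], 1, 1)
print(result)
```

With a 2x2 input and a 1x1 output the source coordinate is 0.5 in both directions, so the single output pixel is the mean of the four inputs.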

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61166

Reviewed By: malfet

Differential Revision:

D30543178

Pulled By: heitorschueroff

fbshipit-source-id:

21a4035483981986b0ae4a401ef0efbc565ccaf1

Pierluigi Taddei [Fri, 27 Aug 2021 17:36:08 +0000 (10:36 -0700)]

[caffe2] fixes to allow stricter compilation flag (#64016)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64016

In order to increase the strictness of the compilation for some targets depending on caffe2, we need to fix some errors uncovered when raising such flags.

This change introduces the required override tokens for virtual destructors

Test Plan: CI. Moreover targets depending on caffe2 using clang strict warnings now compile

Reviewed By: kalman5

Differential Revision:

D30541714

fbshipit-source-id:

564af31b4a9df3536d7d6f43ad29e1d0c7040551

Heitor Schueroff [Fri, 27 Aug 2021 17:16:02 +0000 (10:16 -0700)]

Added reference tests to ReductionOpInfo (#62900)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/62900

Test Plan: Imported from OSS

Reviewed By: mruberry

Differential Revision:

D30408815

Pulled By: heitorschueroff

fbshipit-source-id:

6a1f82ac281920ff7405a42f46ccd796e60af9d6

Mike Iovine [Fri, 27 Aug 2021 17:10:48 +0000 (10:10 -0700)]

[JIT] Add aten::slice optimization (#63049)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63049

Given a graph produced from a function like this:

```

def foo():

    li = [1, 2, 3, 4, 5, 6]

    return li[0:2]

```

This pass produces a graph like this:

```

def foo():

    li = [1, 2]

    return li

```
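
The rewrite above is a constant-folding peephole. A toy version over a tuple-based expression tree might look like the following; the node encoding (`"slice"`, `"const_list"`, `"var"`) is invented for this sketch and is unrelated to the JIT's actual IR.

```python
def fold_constant_slices(node):
    """Replace ('slice', ('const_list', xs), start, end) with a shorter const_list."""
    if not isinstance(node, tuple):
        return node  # leaves (names, ints, Python lists) are left alone
    # Fold children first so nested slices are simplified bottom-up.
    node = tuple(fold_constant_slices(child) for child in node)
    is_const_slice = (
        len(node) == 4
        and node[0] == "slice"
        and isinstance(node[1], tuple)
        and len(node[1]) == 2
        and node[1][0] == "const_list"
        and isinstance(node[2], int)
        and isinstance(node[3], int)
    )
    if is_const_slice:
        return ("const_list", node[1][1][node[2]:node[3]])
    return node


before = ("return", ("slice", ("const_list", [1, 2, 3, 4, 5, 6]), 0, 2))
after = fold_constant_slices(before)
print(after)
```

A real pass also has to prove that the list is not mutated or aliased between its construction and the slice; this toy tree has no mutation, so that check is omitted.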

These changes are mostly adapted from https://github.com/pytorch/pytorch/pull/62297/

Test Plan: `buck test //caffe2/test:jit -- TestPeephole`

Reviewed By: eellison

Differential Revision:

D30231044

fbshipit-source-id:

d12ee39f68289a574f533041a5adb38b2f000dd5

Jonathan Chang [Fri, 27 Aug 2021 16:49:39 +0000 (09:49 -0700)]

Add doc for nn.MultiMarginLoss (shape, example) (#63760)

Summary:

Fixes https://github.com/pytorch/pytorch/issues/63747

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63760

Reviewed By: malfet

Differential Revision:

D30541581

Pulled By: jbschlosser

fbshipit-source-id:

99560641e614296645eb0e51999513f57dfcfa98

Peter Bell [Fri, 27 Aug 2021 16:37:10 +0000 (09:37 -0700)]

Refactor structured set_output in Register{DispatchKey}.cpp (#62188)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62188

These parts of the `set_output` code are identical for all operators in the

kernel registration files. So, this moves them from being copied into every

class to two helper functions at the top of the file.

Test Plan: Imported from OSS

Reviewed By: soulitzer

Differential Revision:

D29962045

Pulled By: albanD

fbshipit-source-id:

753b8aac755f3c91b77ffa2c30a89ac91a84b7c4

Sergei Vorobev [Fri, 27 Aug 2021 16:31:36 +0000 (09:31 -0700)]

[bazel] GPU-support: add @local_config_cuda and @cuda (#63604)

Summary:

## Context

We take the first step toward GPU support in bazel by adding the bazel external workspaces `local_config_cuda` and `cuda`. The first one has some hardcoded values and lists of files, and the second one provides a nicer, high-level wrapper that maps onto the bazel targets pytorch already expects, which are guarded with the `if_cuda` macro.

The prefix `local_config_` signifies the fact that we are breaking the bazel hermeticity philosophy by explicitly relying on the CUDA installation that is present on the machine.

## Testing

Notice an important scenario that is unlocked by this change: compilation of cpp code that depends on cuda libraries (i.e. cuda.h and so on).

Before:

```

sergei.vorobev@cs-sv7xn77uoy-gpu-1628706590:~/src/pytorch4$ bazelisk build --define=cuda=true //:c10

ERROR: /home/sergei.vorobev/src/pytorch4/tools/config/BUILD:12:1: no such package 'tools/toolchain': BUILD file not found in any of the following directories. Add a BUILD file to a directory to mark it as a package.

- /home/sergei.vorobev/src/pytorch4/tools/toolchain and referenced by '//tools/config:cuda_enabled_and_capable'

ERROR: While resolving configuration keys for //:c10: Analysis failed

ERROR: Analysis of target '//:c10' failed; build aborted: Analysis failed

INFO: Elapsed time: 0.259s

INFO: 0 processes.

FAILED: Build did NOT complete successfully (2 packages loaded, 2 targets configured)

```

After:

```

sergei.vorobev@cs-sv7xn77uoy-gpu-1628706590:~/src/pytorch4$ bazelisk build --define=cuda=true //:c10

INFO: Analyzed target //:c10 (6 packages loaded, 246 targets configured).

INFO: Found 1 target...

Target //:c10 up-to-date:

bazel-bin/libc10.lo

bazel-bin/libc10.so

INFO: Elapsed time: 0.617s, Critical Path: 0.04s

INFO: 0 processes.

INFO: Build completed successfully, 1 total action

```

The `//:c10` target is a good one for testing this, because it has cases where the [glob is different](https://github.com/pytorch/pytorch/blob/075024b9a34904ec3ecdab3704c3bcaa329bdfea/BUILD.bazel#L76-L81) depending on whether we compile for CUDA or not.

## What is out of scope of this PR

This PR is the first in a series providing comprehensive GPU bazel build support. Namely, we don't tackle the [cu_library](https://github.com/pytorch/pytorch/blob/11a40ad915d4d3d8551588e303204810887fcf8d/tools/rules/cu.bzl#L2) implementation here. This would be a separate large chunk of work.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63604

Reviewed By: soulitzer

Differential Revision:

D30442083

Pulled By: malfet

fbshipit-source-id:

b2a8e4f7e5a25a69b960a82d9e36ba568eb64595

Hanton Yang [Fri, 27 Aug 2021 16:23:45 +0000 (09:23 -0700)]

[OSS] Enable Metal in PyTorch MacOS nightly builds (#63718)

Summary:

Build on https://github.com/pytorch/pytorch/pull/63825

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63718

Test Plan:

1. Add the `ci/binaries` label to the PR, so the CI will build those nightly builds

2. Make sure the following CI jobs build with the `USE_PYTORCH_METAL_EXPORT` option set to `ON`:

```

ci/circleci: binary_macos_arm64_conda_3_8_cpu_nightly_build

ci/circleci: binary_macos_arm64_conda_3_9_cpu_nightly_build

ci/circleci: binary_macos_arm64_wheel_3_8_cpu_nightly_build

ci/circleci: binary_macos_arm64_wheel_3_9_cpu_nightly_build

ci/circleci: binary_macos_conda_3_6_cpu_nightly_build

ci/circleci: binary_macos_conda_3_7_cpu_nightly_build

ci/circleci: binary_macos_conda_3_8_cpu_nightly_build

ci/circleci: binary_macos_conda_3_9_cpu_nightly_build

ci/circleci: binary_macos_libtorch_3_7_cpu_nightly_build

ci/circleci: binary_macos_wheel_3_6_cpu_nightly_build

ci/circleci: binary_macos_wheel_3_7_cpu_nightly_build

ci/circleci: binary_macos_wheel_3_8_cpu_nightly_build

ci/circleci: binary_macos_wheel_3_9_cpu_nightly_build

```

3. Test `conda` and `wheel` builds locally on [HelloWorld-Metal](https://github.com/pytorch/ios-demo-app/tree/master/HelloWorld-Metal) demo with [(Prototype) Use iOS GPU in PyTorch](https://pytorch.org/tutorials/prototype/ios_gpu_workflow.html)

(1) conda

```

conda install https://15667941-65600975-gh.circle-artifacts.com/0/Users/distiller/project/final_pkgs/pytorch-1.10.0.dev20210826-py3.8_0.tar.bz2

```

(2) wheel

```

pip3 install https://15598647-65600975-gh.circle-artifacts.com/0/Users/distiller/project/final_pkgs/torch-1.10.0.dev20210824-cp38-none-macosx_10_9_x86_64.whl

```

Reviewed By: xta0

Differential Revision:

D30593167

Pulled By: hanton

fbshipit-source-id:

471da204e94b29c11301c857c50501307a5f0785

Aswin Murali [Fri, 27 Aug 2021 16:02:22 +0000 (09:02 -0700)]

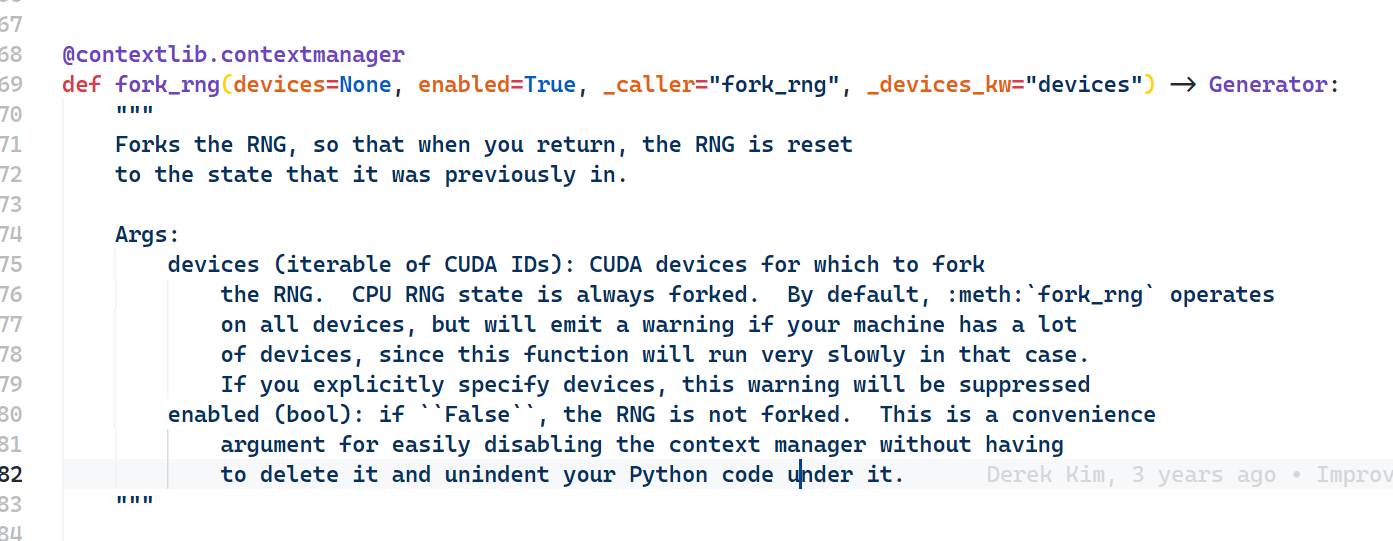

Adds return type annotation for fork_rng function (#63724)

Summary:

Fixes https://github.com/pytorch/pytorch/issues/63723

Since it's a generator function the type annotation shall be `Generator`.
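
For illustration, this is how such an annotation looks on a generator-based context manager. The sketch uses the standard library `random` module rather than torch, and `fork_random_state` is a made-up name, so it only mirrors the shape of `fork_rng`, not its implementation.

```python
import contextlib
import random
from typing import Generator


@contextlib.contextmanager
def fork_random_state() -> Generator[None, None, None]:
    """Run a block with the RNG state saved beforehand and restored afterwards."""
    saved = random.getstate()
    try:
        yield  # the body of the `with` block runs here
    finally:
        random.setstate(saved)


random.seed(0)
with fork_random_state():
    inside = random.random()
outside = random.random()
print(inside == outside)
```

The three type parameters of `Generator` are the yield, send, and return types; a context manager that yields nothing uses `None` for all three.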

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63724

Reviewed By: iramazanli

Differential Revision:

D30543098

Pulled By: heitorschueroff

fbshipit-source-id:

ebdd34749defe1e26c899146786a0357ab4b4b9b

gmagogsfm [Fri, 27 Aug 2021 15:49:54 +0000 (08:49 -0700)]

More robust check of whether a class is defined in torch (#64083)

Summary:

This would prevent bugs for classes that

1) are defined in a module whose name happens to start with `torch`, say `torchvision`

2) are defined in torch but with an import alias like `import torch as th`
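
One way to make such a check robust is to compare against the class's `__module__` with an exact package boundary instead of a bare prefix test. The sketch below is an assumption about the general technique, not the code from this PR; `is_defined_in_package` and `make_class` are hypothetical helpers.

```python
def is_defined_in_package(cls: type, package: str = "torch") -> bool:
    """True if cls lives in `package` itself or in one of its submodules."""
    module_name = getattr(cls, "__module__", "") or ""
    # Require an exact match or a "package." prefix, so that "torchvision"
    # is not mistaken for "torch".
    return module_name == package or module_name.startswith(package + ".")


def make_class(module_name: str) -> type:
    """Build a throwaway class that claims to come from module_name."""
    cls = type("Example", (), {})
    cls.__module__ = module_name
    return cls


print(is_defined_in_package(make_class("torch.nn.modules.linear")))
print(is_defined_in_package(make_class("torchvision.models")))
```

Because `__module__` records where the class was defined, it is unaffected by how the caller imported the package, which also covers the `import torch as th` case.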

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64083

Reviewed By: soulitzer

Differential Revision:

D30598369

Pulled By: gmagogsfm

fbshipit-source-id:

9d3a7135737b2339c9bd32195e4e69a9c07549d4

Harut Movsisyan [Fri, 27 Aug 2021 10:03:32 +0000 (03:03 -0700)]

[Static Runtime] Out version for fmod (#64046)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/64046

Test Plan:

Confirm out variant is used:

```

> //caffe2/benchmarks/static_runtime:static_runtime_cpptest -- --v=1

V0826 23:31:30.321382 193428 impl.cpp:1395] Switch to out variant for node: %4 : Tensor = aten::fmod(%a.1, %b.1)

```

Reviewed By: mikeiovine

Differential Revision:

D30581228

fbshipit-source-id:

dfab9a16ff8afd40b29338037769f938f154bf74

Don Jang [Fri, 27 Aug 2021 09:43:22 +0000 (02:43 -0700)]

[Static Runtime] Manage temporary Tensors for aten::layer_norm (#64078)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64078

This change converts `aten::layer_norm -> output Tensor` to `static_runtime::layer_norm -> (output Tensor, tmp1 Tensor, tmp2 Tensor)` so that the static runtime can manage the `tmp1` and `tmp2` Tensors.

Currently the out-variant of `aten::layer_norm` creates two temporary Tensors inside it:

```

at::Tensor mean = create_empty_from({M}, *X);

at::Tensor rstd = create_empty_from({M}, *X);

```

that the static runtime misses an opportunity to manage.

This change puts them into (unused) output Tensors of a new placeholder op `static_runtime::layer_norm` so that the static runtime can manage them, since the static runtime currently chooses to manage only output tensors.
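
To make the shape of the new op concrete, here is a plain-Python sketch of a layer norm that returns its two temporaries (per-row mean and reciprocal standard deviation) alongside the output. It omits the affine weight and bias, and the function name and `eps` default are assumptions for illustration.

```python
import math


def layer_norm_with_stats(rows, eps=1e-5):
    """Normalize each row and also return the per-row mean and rstd."""
    outputs, means, rstds = [], [], []
    for row in rows:
        mean = sum(row) / len(row)
        var = sum((x - mean) ** 2 for x in row) / len(row)
        rstd = 1.0 / math.sqrt(var + eps)  # reciprocal standard deviation
        outputs.append([(x - mean) * rstd for x in row])
        means.append(mean)
        rstds.append(rstd)
    return outputs, means, rstds


out, mean, rstd = layer_norm_with_stats([[1.0, 2.0, 3.0], [4.0, 4.0, 4.0]])
print(out, mean, rstd)
```

Exposing `mean` and `rstd` as outputs means a memory planner that only tracks outputs can own their storage and reuse it across runs instead of allocating them on every call.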

Test Plan:

- Enhanced `StaticRuntime.LayerNorm` to ensure that `static_runtime::layer_norm` gets activated.

- Confirmed that the new op gets activated during testing:

```

V0825 12:51:50.017890 2265227 impl.cpp:1396] Switch to out variant for node: %8 : Tensor, %9 : Tensor, %10 : Tensor = static_runtime::layer_norm(%input.1, %normalized_shape.1, %4, %4, %5, %3)

```

Reviewed By: hlu1

Differential Revision:

D30486475

fbshipit-source-id:

5121c44ab58c2d8a954aa0bbd9dfeb7468347a2d

Hao Lu [Fri, 27 Aug 2021 08:39:14 +0000 (01:39 -0700)]

[Static Runtime] Use F14FastMap/F14FastSet (#63999)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63999

Use folly::F14FastMap/F14FastSet instead of std::unordered_map/unordered_set in the Static Runtime code base. folly::F14FastMap/F14FastSet implement the same APIs as std::unordered_map/unordered_set but are faster. For details see https://github.com/facebook/folly/blob/master/folly/container/F14.md

Reviewed By: d1jang

Differential Revision:

D30566149

fbshipit-source-id:

20a7fa2519e4dde96fb3fc61ef6c92bf6d759383

Ansha Yu [Fri, 27 Aug 2021 06:17:42 +0000 (23:17 -0700)]

[static runtime] port c2 argmin kernel (#63632)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63632

Local benchmarking with 1 input repeated for 10k iterations on the 290331537_4 local net. Reduces argmin runtime by about 80% and local net execution by about 0.71-0.77ms.

Before:

```

I0826 17:25:53.972786 1104614 PyTorchPredictorBenchLib.cpp:313] PyTorch run finished. Milliseconds per iter: 7.37599. Iters per second: 135.57

```

```

Static runtime ms per iter: 8.22086. Iters per second: 121.642

Time per node type:

4.13527 ms. 50.9157%. fb::sigrid_transforms_torch_bind (1 nodes, out variant)

0.868506 ms. 10.6935%. aten::argmin (1 nodes, out variant)

...

```

After:

```

I0826 17:17:54.165174 1064079 PyTorchPredictorBenchLib.cpp:313] PyTorch run finished. Milliseconds per iter: 6.66724. Iters per second: 149.987

```

```

Static runtime ms per iter: 7.68172. Iters per second: 130.179

Time per node type:

4.1452 ms. 54.0612%. fb::sigrid_transforms_torch_bind (1 nodes, out variant)

0.656778 ms. 8.56562%. fb::quantized_linear (8 nodes)

0.488229 ms. 6.36741%. static_runtime::to_copy (827 nodes, out variant)

0.372678 ms. 4.86042%. aten::argmin (1 nodes, out variant)

...

Time per node type:

3.39387 ms. 53.5467%. fb::sigrid_transforms_torch_bind (1 nodes, out variant)

0.636216 ms. 10.0379%. fb::quantized_linear (8 nodes, out variant)

0.410535 ms. 6.47721%. fb::clip_ranges_to_gather_to_offsets (304 nodes, out variant)

0.212721 ms. 3.3562%. fb::clip_ranges_gather_sigrid_hash_precompute_v3 (157 nodes, out variant)

0.173736 ms. 2.74111%. aten::matmul (1 nodes, out variant)

0.150514 ms. 2.37474%. aten::argmin (1 nodes, out variant)

```

P447422384

Test Plan:

Test with local replayer sending traffic to `ansha_perf_test_0819.test`, and compare outputs to jit interpreter.

Start compute tier:

```

RUN_UUID=ansha_perf_test_0819.test.storage JOB_EXPIRE_TIME=864000 MODEL_ID=290331537_4 PREDICTOR_TAG= PREDICTOR_VERSION=405 PREDICTOR_TYPE=CPU ADDITIONAL_FLAGS="--enable_disagg_file_split=true --enable_adx=false --load_remote_file_locally=true --pytorch_predictor_static_runtime_whitelist_by_id=290331537" GFLAGS_CONFIG_PATH=sigrid/predictor/gflags/predictor_gflags_ads_perf_cpu_pyper SMC_TIER_NAME=sigrid.predictor.perf.ansha_per_test_0819.test.storage CLUSTER=tsp_rva ENTITLEMENT_NAME=ads_ranking_infra_test_t6 PREDICTOR_LOCAL_DIRECTORY= ICET_CONFIG_PATH= NNPI_COMPILATION_CONFIG_FILE= NUM_TASKS=1 NNPI_NUM_WORKERS=0 tw job start /data/users/ansha/fbsource/fbcode/tupperware/config/admarket/sigrid/predictor/predictor_perf_canary.tw

```

Start nnpi tier:

```

RUN_UUID=ansha_perf_test_0819.test JOB_EXPIRE_TIME=247200 MODEL_ID=290331537_4 PREDICTOR_TAG= PREDICTOR_VERSION=343 PREDICTOR_TYPE=NNPI_TWSHARED ADDITIONAL_FLAGS="--torch_glow_min_fusion_group_size=30 --pytorch_storage_tier_replayer_sr_connection_options=overall_timeout:1000000,processing_timeout:1000000 --predictor_storage_smc_tier=sigrid.predictor.perf.ansha_perf_test_0819.test.storage --pytorch_predictor_static_runtime_whitelist_by_id=290331537" GFLAGS_CONFIG_PATH=sigrid/predictor/gflags/predictor_gflags_ads_perf_glow_nnpi_pyper_v1 SMC_TIER_NAME=sigrid.predictor.perf.ansha_perf_test_0819.test CLUSTER=tsp_rva ENTITLEMENT_NAME=ads_ranking_infra_test_t17 PREDICTOR_LOCAL_DIRECTORY= ICET_CONFIG_PATH= NNPI_COMPILATION_CONFIG_FILE= NUM_TASKS=1 NNPI_NUM_WORKERS=0 tw job start /data/users/ansha/fbsource/fbcode/tupperware/config/admarket/sigrid/predictor/predictor_perf_canary.tw

```

```buck test caffe2/benchmarks/static_runtime:static_runtime_cpptest -- StaticRuntime.IndividualOps_Argmin --print-passing-details```

Compared outputs to jit interpreter to check for no differences greater than 1e-3 (with nnc on) https://www.internalfb.com/intern/diff/view-version/136824794/

Reviewed By: hlu1

Differential Revision:

D30445635

fbshipit-source-id:

048de8867ac72f764132295d1ebfa843cde2fa27

Supriya Rao [Fri, 27 Aug 2021 04:05:56 +0000 (21:05 -0700)]

[quant] Add support for linear_relu fusion for FP16 dynamic quant (#63826)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63826

Support the conversion of the intrinsic linearRelu module to the quantized dynamic LinearReLU module

Verify the support works for both linear module and functional linear fusion

Test Plan:

python test/test_quantization.py test_dynamic_with_fusion

Imported from OSS

Reviewed By: iramazanli

Differential Revision:

D30503513

fbshipit-source-id:

70446797e9670dfef7341cba2047183d6f88b70f

Supriya Rao [Fri, 27 Aug 2021 04:05:56 +0000 (21:05 -0700)]

[quant] Add op support for linear_relu_dynamic_fp16 (#63824)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63824

Add a fused operator implementation that will work with the quantization fusion APIs.

Once FBGEMM FP16 kernel supports relu fusion natively we can remove the addition from the PT operator.

Test Plan:

python test/test_quantization.py

Imported from OSS

Reviewed By: heitorschueroff

Differential Revision:

D30503514

fbshipit-source-id:

6bf3bd53f47ffaa3f1d178eaad8cc980a7f5258a

Supriya Rao [Fri, 27 Aug 2021 04:05:56 +0000 (21:05 -0700)]

[quant] support linear_relu_dynamic for qnnpack backend (#63820)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63820

Adds support in the operator directly to call relu operator if relu fusion is enabled.

Once QNNPACK natively supports relu fusion in the linear_dynamic this can be removed

Test Plan:

python test/test_quantization.py TestDynamicQuantizedLinear.test_qlinear

Imported from OSS

Reviewed By: vkuzo

Differential Revision:

D30502813

fbshipit-source-id:

3352ee5f73e482b6d1941f389d720a461b84ba23

Supriya Rao [Fri, 27 Aug 2021 04:05:56 +0000 (21:05 -0700)]

[quant][fx] Add support for dynamic linear + relu fusion (INT8) (#63799)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63799

Add a new module that can be used for module swap with the nni.LinearReLU module in convert function.

Supports INT8 currently (since FP16 op doesn't have relu fusion yet).

Fixes #55393

Test Plan:

python test/test_quantization.py test_dynamic_fusion

Imported from OSS

Reviewed By: heitorschueroff

Differential Revision:

D30502812

fbshipit-source-id:

3668e4f001a0626d469e17ac323acf582ee28a51

Michael Suo [Fri, 27 Aug 2021 03:54:54 +0000 (20:54 -0700)]

[torch/deploy] add torch.distributed to build (#63918)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63918

Previously we were building with `USE_DISTRIBUTED` off, because c10d was built as a separate library for historical reasons. Since then, lw has merged the c10d build into libtorch, so this is fairly easy to turn on.

Differential Revision:

D30492442

**NOTE FOR REVIEWERS**: This PR has internal Facebook specific changes or comments, please review them on [Phabricator](https://our.intern.facebook.com/intern/diff/D30492442/)!

Test Plan: added a unit test

Reviewed By: wconstab

Pulled By: suo

fbshipit-source-id:

843b8fcf349a72a7f6fcbd1fcc8961268690fb8c

Can Balioglu [Fri, 27 Aug 2021 03:16:10 +0000 (20:16 -0700)]

Introduce the torchrun entrypoint (#64049)

Summary:

This PR introduces a new `torchrun` entrypoint that simply "points" to `python -m torch.distributed.run`. It is shorter and less error-prone to type and gives a nicer syntax than a rather cryptic `python -m ...` command line. Along with the new entrypoint, the documentation is also updated, and places where `torch.distributed.run` is mentioned are replaced with `torchrun`.

cc pietern mrshenli pritamdamania87 zhaojuanmao satgera rohan-varma gqchen aazzolini osalpekar jiayisuse agolynski SciPioneer H-Huang mrzzd cbalioglu gcramer23

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64049

Reviewed By: cbalioglu

Differential Revision:

D30584041

Pulled By: kiukchung

fbshipit-source-id:

d99db3b5d12e7bf9676bab70e680d4b88031ae2d

nikithamalgi [Fri, 27 Aug 2021 01:54:51 +0000 (18:54 -0700)]

Merge script and _script_pdt API (#62420)

Summary:

Merge the `torch.jit.script` and `torch.jit._script_pdt` APIs. This PR merges profile directed typing with the script API.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62420

Reviewed By: iramazanli

Differential Revision:

D30579015

Pulled By: nikithamalgifb

fbshipit-source-id:

99ba6839d235d61b2dd0144b466b2063a53ccece

Maksim Levental [Fri, 27 Aug 2021 00:59:59 +0000 (17:59 -0700)]

port glu to use structured kernel approach (#61800)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/61800

resubmitting because the [last one](https://github.com/pytorch/pytorch/pull/61433) was unrecoverable due to making changes incorrectly in the stack

Test Plan: Imported from OSS

Reviewed By: iramazanli

Differential Revision:

D29812492

Pulled By: makslevental

fbshipit-source-id:

c3dfeacd1e00a526e24fbaab02dad48069d690ef

Jane Xu [Fri, 27 Aug 2021 00:36:56 +0000 (17:36 -0700)]

Run through failures on trunk (#64063)

Summary:

This PR runs all the tests on trunk instead of stopping on first failure.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64063

Reviewed By: malfet, seemethere

Differential Revision:

D30592020

Pulled By: janeyx99

fbshipit-source-id:

318b225cdf918a98f73e752d1cc0227d9227f36c

Paul Johnson [Fri, 27 Aug 2021 00:28:35 +0000 (17:28 -0700)]

[pytorch] add per_sample_weights support for embedding_bag_4bit_rowwise_offsets (#63605)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/63605

Reviewed By: houseroad

Differential Revision:

D30434664

fbshipit-source-id:

eb4cbae3c705f9dec5c073a56f0f23daee353bc1

Michael Dagitses [Fri, 27 Aug 2021 00:26:52 +0000 (17:26 -0700)]

document that `torch.triangular_solve` has optional out= parameter (#63253)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63253

Fixes #57955

Test Plan: Imported from OSS

Reviewed By: malfet

Differential Revision:

D30312134

Pulled By: dagitses

fbshipit-source-id:

4f484620f5754f4324a99bbac1ff783c64cee6b8

Jiewen Tan [Thu, 26 Aug 2021 23:49:13 +0000 (16:49 -0700)]

Enable test_api IMethodTest in OSS (#63345)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63345

This diff did the following few things to enable the tests:

1. Exposed IMethod as TORCH_API.

2. Linked torch_deploy to test_api if USE_DEPLOY == 1.

3. Generated torch::deploy examples when building torch_deploy library.

Test Plan: ./build/bin/test_api --gtest_filter=IMethodTest.*

Reviewed By: ngimel

Differential Revision:

D30346257

Pulled By: alanwaketan

fbshipit-source-id:

932ae7d45790dfb6e00c51893933a054a0fad86d

Don Jang [Thu, 26 Aug 2021 23:28:35 +0000 (16:28 -0700)]

[Static Runtime] Remove unnecessary fb::equally_split nodes (#64022)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/64022

Test Plan: - Added unittest `StaticRuntime.RemoveEquallySplitListUnpack`.

Reviewed By: hlu1

Differential Revision:

D30472189

fbshipit-source-id:

36040b0146f4be9d0d0fda293f7205f43aad0b87

Shijun Kong [Thu, 26 Aug 2021 23:06:17 +0000 (16:06 -0700)]

[pytorch][quant][oss] Support 2-bit embedding_bag op "embedding_bag_2bit_rowwise_offsets" (#63658)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63658

Support 2-bit embedding_bag op "embedding_bag_2bit_rowwise_offsets"

Reviewed By: jingsh, supriyar

Differential Revision:

D30454994

fbshipit-source-id:

7aa7bfe405c2ffff639d5658a35181036e162dc9

soulitzer [Thu, 26 Aug 2021 23:00:21 +0000 (16:00 -0700)]

Move variabletype functions around (#63330)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63330

- This is in preparation for templated/boxed autograd-not-implemented fallback

- Make sure VariableTypeUtils does not depend on generated code

- Lift `isFwGradDefined` into `autograd/functions/utils.cpp` so it's available to mobile builds

- Removes `using namespace at` from VariableTypeUtils. Previously we needed this for the templated version; now it is not strictly necessary, but removing it is still a good change to avoid name conflicts if this header is included elsewhere in the future.

Test Plan: Imported from OSS

Reviewed By: heitorschueroff

Differential Revision:

D30518573

Pulled By: soulitzer

fbshipit-source-id:

a0fb904baafc9713de609fffec4b813f6cfcc000

Bo Wang [Thu, 26 Aug 2021 23:00:16 +0000 (16:00 -0700)]

More sharded_tensor creation ops: sharded_tensor.zeros, sharded_tensor.full, sharded_tensor.rand (#63732)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/63732

Test Plan:

$ python test/distributed/_sharded_tensor/test_sharded_tensor.py --v

$ python test/distributed/_sharded_tensor/test_sharded_tensor.py TestCreateTensorFromParams --v

$ python test/distributed/_sharded_tensor/test_sharded_tensor.py TestShardedTensorChunked --v

Imported from OSS

Differential Revision:

D30472621

Reviewed By: pritamdamania87

Pulled By: bowangbj

fbshipit-source-id:

fd8ebf9b815fdc292ad1aad521f9f4f454163d0e

Jane Xu [Thu, 26 Aug 2021 22:42:00 +0000 (15:42 -0700)]

Add shard number to print_test_stats.py upload name (#64055)

Summary:

Now that the render test results job is gone, each shard on GHA is uploading a JSON test stats report. To ensure differentiation, this PR includes the shard number in the report name.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64055

Reviewed By: iramazanli

Differential Revision:

D30586869

Pulled By: janeyx99

fbshipit-source-id:

fd19f347131deec51486bb0795e4e13ac19bc71a

MengeTM [Thu, 26 Aug 2021 22:32:06 +0000 (15:32 -0700)]

Derivatives of relu (#63027) (#63089)

Summary:

Optimization of relu and leaky_relu derivatives for reduction of VRAM needed for the backward-passes

Fixes https://github.com/pytorch/pytorch/issues/63027

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63089

Reviewed By: iramazanli

Differential Revision:

D30582049

Pulled By: albanD

fbshipit-source-id:

a9481fe8c10cbfe2db485e28ce80cabfef501eb8

Facebook Community Bot [Thu, 26 Aug 2021 22:18:37 +0000 (15:18 -0700)]

Automated submodule update: FBGEMM (#62879)

Summary:

This is an automated pull request to update the first-party submodule for [pytorch/FBGEMM](https://github.com/pytorch/FBGEMM).

New submodule commit: https://github.com/pytorch/FBGEMM/commit/ce5470385723b0262b47250d6af05f1b734e4509

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62879

Test Plan: Ensure that CI jobs succeed on GitHub before landing.

Reviewed By: jspark1105

Differential Revision:

D30154801

fbshipit-source-id:

b2ce185da6f6cadf5128f82b15097d9e13e9e6a0

Mike Iovine [Thu, 26 Aug 2021 21:09:10 +0000 (14:09 -0700)]

[JIT] UseVariadicOp takes list_idx parameter (#63915)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63915

Previously, this function only worked for variadic op substitutions of the form `op(list, args) -> variadic_op(list_1, ..., list_n, args)`. This change allows for transformations of the form `op(args_0, list, args_1) -> variadic_op(args_0, list_1, ..., list_n, args_1)`.
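The generalized rewrite can be illustrated with a small Python sketch. This is not the JIT pass itself: `to_variadic_args` and its plain-list representation of op arguments are hypothetical, chosen only to show how `list_idx` selects which argument gets expanded in place.

```python
from typing import Any, List


def to_variadic_args(args: List[Any], list_idx: int = 0) -> List[Any]:
    """Expand the list argument at position list_idx into individual arguments.

    Hypothetical helper for illustration only; the real pass rewrites graph nodes.
    """
    list_arg = args[list_idx]
    if not isinstance(list_arg, (list, tuple)):
        raise TypeError(f"argument {list_idx} is not a list")
    # Keep everything before and after the list, splice its elements in between.
    return args[:list_idx] + list(list_arg) + args[list_idx + 1:]


# Old form: op(list, args) -> variadic_op(list_1, ..., list_n, args)
print(to_variadic_args([["a", "b", "c"], 0]))
# New form: op(args_0, list, args_1) -> variadic_op(args_0, list_1, ..., list_n, args_1)
print(to_variadic_args(["x", ["a", "b"], 1], list_idx=1))
```

With `list_idx=0` the sketch reproduces the previously supported form, so the old behavior is a special case of the new one.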

Test Plan:

`buck test caffe2/test/cpp/jit:jit -- Stack Concat`

(tests exercising `list_idx != 0` will be added further up in this diff stack)

Reviewed By: navahgar

Differential Revision:

D30529729

fbshipit-source-id:

568080679c3b40bdaedee56bef2e8a5ce7985d2f

Can Balioglu [Thu, 26 Aug 2021 20:55:08 +0000 (13:55 -0700)]

[torch/elastic] Pretty print the failure message captured by @record (#64036)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64036

This PR slightly revises the implementation of the internal `_format_failure()` method in order to pretty print the error message captured in a subprocess by the `record` annotation.

With this PR a failure log is formatted as below:

```

Root Cause:

[0]:

time: 2021-08-26_17:12:07

rank: 0 (local_rank: 0)

exitcode: 1 (pid: 8045)

error_file: /tmp/torchelastic_6cj9eppm/6d9d844a-6ce4-4838-93ed-1639a9525b00_rec9kuv3/attempt_0/0/error.json

msg:

{

"message": "ValueError: Test",

"extraInfo": {

"py_callstack": [

" File \"/data/home/balioglu/fail.py\", line 7, in <module>\n main()\n",

" File \"/fsx/users/balioglu/repos/pytorch/torch/distributed/elastic/multiprocessing/errors/__init__.py\", line 373, in wrapper\n error_handler.record_exception(e)\n",

" File \"/fsx/users/balioglu/repos/pytorch/torch/distributed/elastic/multiprocessing/errors/error_handler.py\", line 86, in record_exception\n _write_error(e, self._get_error_file_path())\n",

" File \"/fsx/users/balioglu/repos/pytorch/torch/distributed/elastic/multiprocessing/errors/error_handler.py\", line 26, in _write_error\n \"py_callstack\": traceback.format_stack(),\n"

],

"timestamp": "1629997927"

}

}

```

in contrast to the old formatting:

```

Root Cause:

[0]:

time: 2021-08-26_17:15:50

rank: 0 (local_rank: 0)

exitcode: 1 (pid: 9417)

error_file: /tmp/torchelastic_22pwarnq/19f22638-848c-4b8f-8379-677f34fc44e7_u43o9vs7/attempt_0/0/error.json

msg: "{'message': 'ValueError: Test', 'extraInfo': {'py_callstack': 'Traceback (most recent call last):\n File "/fsx/users/balioglu/repos/pytorch/torch/distributed/elastic/multiprocessing/errors/__init__.py", line 351, in wrapper\n return f(*args, **kwargs)\n File "/data/home/balioglu/fail.py", line 5, in main\n raise ValueError("BALIOGLU")\nValueError: BALIOGLU\n', 'timestamp': '1629998150'}}"

```
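The difference between the two formats comes down to re-serializing the captured message with indentation. A minimal sketch of that idea, assuming the message is a JSON string; `format_error_message` is a hypothetical name and not the actual `_format_failure()` code:

```python
import json


def format_error_message(raw_msg: str) -> str:
    """Return raw_msg re-indented if it is valid JSON, otherwise unchanged."""
    try:
        parsed = json.loads(raw_msg)
    except ValueError:
        # Not JSON: keep the message exactly as it was captured.
        return raw_msg
    return json.dumps(parsed, indent=2)


raw = '{"message": "ValueError: Test", "extraInfo": {"timestamp": "1629997927"}}'
print(format_error_message(raw))
```

Falling back to the raw string keeps non-JSON messages readable instead of failing while reporting a failure.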

ghstack-source-id:

136761768

Test Plan: Run the existing unit tests.

Reviewed By: kiukchung

Differential Revision:

D30579025

fbshipit-source-id:

37df0b7c7ec9b620355766122986c2c77e8495ae

Ilqar Ramazanli [Thu, 26 Aug 2021 20:29:03 +0000 (13:29 -0700)]

To add Chained Scheduler to the list of PyTorch schedulers. (#63491)

Summary:

In this PR we are introducing ChainedScheduler, which was initially proposed in the discussion https://github.com/pytorch/pytorch/pull/26423#discussion_r329976246 .

The idea is to provide a user-friendly chaining method for schedulers, especially for cases where many of them are involved and we want a clean, easy-to-read interface. This method will be even more crucial once CompositeSchedulers and schedulers for different types of parameters are involved.

The immediate application of ChainedScheduler is expected to be in the TorchVision library, to combine WarmUpLR and MultiStepLR https://github.com/pytorch/vision/blob/master/references/video_classification/scheduler.py#L5 . However, the method is expected to apply to many other use cases as well.

### Example

The usage is as simple as below:

```python

sched=ChainedScheduler([ExponentialLR(self.opt, gamma=0.9),

WarmUpLR(self.opt, warmup_factor=0.2, warmup_iters=4, warmup_method="constant"),

StepLR(self.opt, gamma=0.1, step_size=3)])

```

Then calling

```python

sched.step()

```

would trigger the step function of all three schedulers consecutively.
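The chaining behavior can be sketched without PyTorch. The classes below are hypothetical stand-ins (`ChainedSchedulerSketch`, `ScaleLR`) that only model "call `step()` on each scheduler in order"; they are not the implementation added by this PR.

```python
class ChainedSchedulerSketch:
    """Calls step() on each wrapped scheduler, in the order given."""

    def __init__(self, schedulers):
        self._schedulers = list(schedulers)

    def step(self):
        for scheduler in self._schedulers:
            scheduler.step()


class ScaleLR:
    """Toy scheduler: multiplies a shared learning rate by a fixed factor."""

    def __init__(self, state, factor):
        self.state = state
        self.factor = factor

    def step(self):
        self.state["lr"] *= self.factor


state = {"lr": 1.0}
sched = ChainedSchedulerSketch([ScaleLR(state, 0.5), ScaleLR(state, 0.1)])
sched.step()  # applies both factors, one after the other
print(state["lr"])
```

Because every scheduler acts on the same state, a single `step()` on the chain composes their effects.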

Partially resolves https://github.com/pytorch/vision/issues/4281

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63491

Reviewed By: datumbox, mruberry

Differential Revision:

D30576180

Pulled By: iramazanli

fbshipit-source-id:

b43f0749f55faab25079641b7d91c21a891a87e4

Shiyan Deng [Thu, 26 Aug 2021 20:06:46 +0000 (13:06 -0700)]

[fx_acc] [fx2trt] add acc op mapper for argmin and converter for topk (#63823)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63823

Add mapper for `torch.argmin` which maps it to `acc_ops.flatten` (optional) + `acc_ops.topk` + `acc_ops.getitem` + `acc_ops.squeeze` (optional). This diff doesn't allow mapping if `dim=None && keepdim=True` in `torch.argmin`.
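The decomposition can be pictured with plain Python lists. This is only a sketch of the flatten + topk + getitem + squeeze sequence; `topk_smallest` and `argmin_via_topk` are hypothetical helpers, not `acc_ops` functions, and the sketch handles only 2-D input.

```python
from typing import List, Tuple


def topk_smallest(values: List[float], k: int) -> Tuple[List[float], List[int]]:
    """Return the k smallest values and their indices (stand-in for a smallest-k topk)."""
    order = sorted(range(len(values)), key=lambda i: values[i])[:k]
    return [values[i] for i in order], order


def argmin_via_topk(rows: List[List[float]], dim=None, keepdim: bool = False):
    if dim is None:
        if keepdim:
            # Mirrors the unsupported case called out above.
            raise ValueError("dim=None with keepdim=True is not mapped")
        flat = [v for row in rows for v in row]  # flatten (optional step)
        _, indices = topk_smallest(flat, 1)      # topk, then getitem on the indices
        return indices[0]                        # squeeze
    if dim != 1:
        raise NotImplementedError("sketch only handles the last dim of 2-D input")
    result = [topk_smallest(row, 1)[1] for row in rows]       # shape [n, 1]
    return result if keepdim else [idx[0] for idx in result]  # squeeze (optional)


data = [[3.0, 1.0], [0.5, 2.0]]
print(argmin_via_topk(data))
print(argmin_via_topk(data, dim=1))
```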

Add fx2trt converter for `acc_ops.topk`.

Test Plan:

buck test mode/opt glow/fb/fx/oss_acc_tracer:test_acc_tracer -- test_argmin

buck run mode/opt caffe2/torch/fb/fx2trt:test_topk

Reviewed By: jfix71

Differential Revision:

D30501771

fbshipit-source-id:

0babc45e69bac5e61ff0b9b4dfb98940398e3e57

Don Jang [Thu, 26 Aug 2021 19:58:05 +0000 (12:58 -0700)]

[Static Runtime] Add native op for aten::expand_as (#64024)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64024

`aten::expand_as` creates a view of the input tensor. This change adds its native op implementation for the static runtime.

Test Plan: - Added `StaticRuntime.IndividualOps_ExpandAs`

Reviewed By: hlu1

Differential Revision:

D30546851

fbshipit-source-id:

e53483048af890bc41b6192a1ab0c5ba0ee2bdc0

Meghan Lele [Thu, 26 Aug 2021 19:48:01 +0000 (12:48 -0700)]

Back out "[ONNX] Fix an issue that optimizations might adjust graph inputs unexpectedly. (#61280)" (#64004)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64004

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63904

Fixes T98808160

Test Plan: T98808160

Reviewed By: msaroufim

Differential Revision:

D30527450

fbshipit-source-id:

6262901a78ca929cecda1cf740893139aa26f1b4

Ansley Ussery [Thu, 26 Aug 2021 19:14:32 +0000 (12:14 -0700)]

Allow uncompiled strings as input to `checkScriptRaisesRegex` (#63901)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63901

cc gmagogsfm

Test Plan: Imported from OSS

Reviewed By: gmagogsfm

Differential Revision:

D30579472

Pulled By: ansley

fbshipit-source-id:

59ee09c1f25278d4f6e51f626588251bd095c6ea

Luca Wehrstedt [Thu, 26 Aug 2021 19:08:00 +0000 (12:08 -0700)]

Leverage TensorPipe's automatic SHM address selection (#63028)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63028

TensorPipe until now required PyTorch to come up and provide a unique identifier to use as address for the UNIX domain socket used in the SHM transport. However the Linux kernel can automatically assign an available address (like it does with IP ports), and TensorPipe now supports it, so we can remove that useless PyTorch logic.

Test Plan: CI

Reviewed By: mrshenli

Differential Revision:

D30220352

fbshipit-source-id:

78e8a6ef5916b2a72df26cdc9cd367b9d083e821

Erjia Guan [Thu, 26 Aug 2021 17:21:48 +0000 (10:21 -0700)]

Rename IterableAsDataPipe to IterableWrapper (#63981)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63981

Rename `IterableAsDataPipe` to `IterableWrapper` based on our naming convention `Op-er`

Test Plan: Imported from OSS

Reviewed By: VitalyFedyunin

Differential Revision:

D30554197

Pulled By: ejguan

fbshipit-source-id:

c2eacb20df5645d83ca165d6a1591f7e4791990f

Cheng Chang [Thu, 26 Aug 2021 16:52:42 +0000 (09:52 -0700)]

[NNC] Add C++ codegen backend to NNC (#62869)

Summary:

Adds a C++ codegen backend to NNC to generate C++ for CPU instead of generating LLVM IR.

Tensors are represented as blobs of float. Vector operations are devectorized/unrolled.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62869

Test Plan:

https://github.com/pytorch/pytorch/tree/mvz-nnc-aot-prototype makes it able to AOT compile the whole MobileNetV3 model into binary code through LLVM codegen in NNC.

I forked that branch to https://github.com/cheng-chang/pytorch/tree/cc-aot-cpp, merged this PR into it, and modified `fancy_compile` to compile MobileNetV3 into C++ through

```

import torch

m = torch.jit.load('mobnet.pt')

m.eval()

f = torch.jit.freeze(m)

torch._C._fancy_compile(f.graph, [1, 3, 224, 224])

```

The generated C++ file `mobnet.cc` can be found at https://gist.github.com/cheng-chang/e2830cc6920b39204ebf368035b2bcec.

I manually compiled the generated C++ through `g++ -o mobnet -std=c++14 -L./build/lib -ltorch_cpu -ltorch mobnet.cc`, and it succeeded.

Reviewed By: ZolotukhinM

Differential Revision:

D30149482

Pulled By: cheng-chang

fbshipit-source-id:

e77b189f0353e37cd309423a48a513e668d07675

Raghavan Raman [Thu, 26 Aug 2021 16:49:44 +0000 (09:49 -0700)]

[nnc] Sanitized the names of constants in the input graph. (#63990)

Summary:

Fixes https://github.com/pytorch/pytorch/issues/63923

The input graph can contain constants whose names contain special characters. So, all names of constants in the input graph need to be sanitized.
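A minimal sketch of what such sanitization might look like. The replacement rule here (every character outside `[A-Za-z0-9_]` becomes `_`) is an assumption for illustration, and `sanitize_name` is a hypothetical helper, not the function used in NNC:

```python
import re


def sanitize_name(name: str) -> str:
    """Replace every character outside [A-Za-z0-9_] with '_' (assumed rule)."""
    sanitized = re.sub(r"[^A-Za-z0-9_]", "_", name)
    # Identifiers cannot start with a digit, so prefix an underscore if needed.
    if not sanitized or sanitized[0].isdigit():
        sanitized = "_" + sanitized
    return sanitized


print(sanitize_name("self.conv1.weight"))
print(sanitize_name("1x"))
```

Note that a rule like this can map two distinct names to the same result, so a real implementation may also need to keep the sanitized names unique.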

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63990

Reviewed By: ZolotukhinM

Differential Revision:

D30558432

Pulled By: navahgar

fbshipit-source-id:

de5b0c23d50ee8997f40f2c0fc605dda3719186f

Bert Maher [Thu, 26 Aug 2021 16:41:58 +0000 (09:41 -0700)]

[nnc] Fix dtype promotion involving scalars (#64002)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/64002

Fixes https://github.com/pytorch/vision/issues/4315

Test Plan: Imported from OSS

Reviewed By: navahgar

Differential Revision:

D30566979

Pulled By: bertmaher

fbshipit-source-id:

eaa98b9534a926be7fcd337d46c5a0acb3243179

Jane Xu [Thu, 26 Aug 2021 16:27:47 +0000 (09:27 -0700)]

run_test.py: add option to run only core tests (#63976)

Summary:

This is in response to a feature request from some folks in the core team to have a local command that would only run relevant "core" tests. The idea is to have a local smoke test option for developers to run locally before making a PR in order to verify their changes did not break core functionality. These smoke tests are not targeted to be short but rather relevant.

This PR enables that by allowing developers to run `python test/run_test.py --core` or `python test/run_test.py -core` in order to run the CORE_TEST_LIST, which is currently test_nn.py, test_torch.py, and test_ops.py.
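The flag handling can be sketched with `argparse`. This is a simplified illustration rather than the code in `run_test.py`: `select_tests` is a hypothetical function, and the list contents are taken from the description above.

```python
import argparse

# Taken from the description above; the real list lives in run_test.py.
CORE_TEST_LIST = ["test_nn", "test_ops", "test_torch"]


def select_tests(argv, all_tests):
    parser = argparse.ArgumentParser()
    # Register both spellings mentioned above as one boolean flag.
    parser.add_argument("-core", "--core", action="store_true",
                        help="run only the core tests")
    options = parser.parse_args(argv)
    if options.core:
        return [t for t in all_tests if t in CORE_TEST_LIST]
    return list(all_tests)


all_tests = ["test_autograd", "test_nn", "test_ops", "test_torch"]
print(select_tests(["--core"], all_tests))
```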

I am not the best person to judge what should be considered "core", so please comment which tests should be included and/or excluded from the CORE_TEST_LIST!

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63976

Test Plan:

```

(pytorch) janeyx@janeyx-mbp test % python run_test.py --core -v

Selected tests: test_nn, test_ops, test_torch

Running test_nn ... [2021-08-25 14:48:28.865078]

Executing ['/Users/janeyx/miniconda3/envs/pytorch/bin/python', 'test_nn.py', '-v'] ... [2021-08-25 14:48:28.865123]

test_to (__main__.PackedSequenceTest) ... ok

test_to_memory_format (__main__.PackedSequenceTest) ... ok

```

Reviewed By: walterddr

Differential Revision:

D30575560

Pulled By: janeyx99

fbshipit-source-id:

3f151982c1e315e50e60cb0d818adaea34556a04

Don Jang [Thu, 26 Aug 2021 15:08:53 +0000 (08:08 -0700)]

[Static Runtime] Disable out variant of aten::clone (#63980)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63980

The out variant implementation of `aten::clone` causes a crash, which needs further investigation. This change disables it until the problem gets fixed.

Note that `inline_cvr` doesn't use `aten::clone` as of now, so no perf implication: https://www.internalfb.com/phabricator/paste/view/P446858755?lines=121

Test Plan: N/A

Reviewed By: hlu1

Differential Revision:

D30544149

fbshipit-source-id:

facb334d67473f622b36862fbdb2633358556fdf

Rong Rong (AI Infra) [Thu, 26 Aug 2021 15:00:48 +0000 (08:00 -0700)]

[CI] move distributed test into its own CI job (#62896)

Summary:

Moving distributed to its own job.

- [x] ensure there should be a distributed test job for every default test job matrix (on GHA)

- [x] ensure that circleci jobs work for distributed as well

- [x] waiting for test distributed to have its own run_test.py launch options, see https://github.com/pytorch/pytorch/issues/63147

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62896

Reviewed By: seemethere

Differential Revision:

D30230856

Pulled By: walterddr

fbshipit-source-id:

0cad620f6cd9e56c727c105458d76539a5ae976f

albanD [Thu, 26 Aug 2021 14:48:20 +0000 (07:48 -0700)]

remove special grad_mode tls handling (#63116)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63116

This PR removes the special flag to disable grad mode tracking on the ThreadLocalState and replaces it with an explicit setter that users can use.

This reduces the complexity of ThreadLocalState.

Test Plan: Imported from OSS

Reviewed By: ngimel

Differential Revision:

D30388098

Pulled By: albanD

fbshipit-source-id:

85641b3d711179fb78ff6a41ed077548dc821a2f

Heitor Schueroff [Thu, 26 Aug 2021 14:17:24 +0000 (07:17 -0700)]

Added API tests to ReductionOpInfo and ported amax/amin/nansum tests (#62899)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/62899

Test Plan: Imported from OSS

Reviewed By: mruberry

Differential Revision:

D30408816

Pulled By: heitorschueroff

fbshipit-source-id:

6cb0aa7fa7edba93549ef873baa2fb8a003bd91d

Edward Yang [Thu, 26 Aug 2021 13:58:12 +0000 (06:58 -0700)]

Deify opmath_t into its own header, align with accscalar_t (#63986)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63986

Fixes #63985

Signed-off-by: Edward Z. Yang <ezyang@fb.com>

Test Plan: Imported from OSS

Reviewed By: malfet

Differential Revision:

D30555996

Pulled By: ezyang

fbshipit-source-id:

b6e4d56a5658ed028ffc105cc4b479faa6882b65

Heitor Schueroff [Thu, 26 Aug 2021 13:05:28 +0000 (06:05 -0700)]

[OpInfo] Added ReductionOpInfo subclass of OpInfo and ported sum test (#62737)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62737

ReductionOpInfo is a specialization of OpInfo for reduction operators. For now, it is designed to work with reductions that return a single tensor and that reduce all elements along one or more dimensions to a single value. In particular this excludes operators such as `max` and `min` that return multiple tensors and `quantile` that can return multiple values.

fixes https://github.com/pytorch/pytorch/issues/49746

Test Plan: Imported from OSS

Reviewed By: ejguan

Differential Revision:

D30406568

Pulled By: heitorschueroff

fbshipit-source-id:

218b1da1902f67bcf4c3681e2a0f0029a25d51f1

Luca Wehrstedt [Thu, 26 Aug 2021 12:43:05 +0000 (05:43 -0700)]

Update TensorPipe submodule

Summary: The bot failed to do it.

Test Plan:

D30542677

Reviewed By: beauby

Differential Revision:

D30573500

fbshipit-source-id:

50abd6fc415cead0a6b6d9290fa0e5f97d0e4989

Michael Dagitses [Thu, 26 Aug 2021 11:42:36 +0000 (04:42 -0700)]

use `const auto&` as type for grad alias (#63949)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63949

This is an extension of the discussion in

https://github.com/pytorch/pytorch/pull/63040#discussion_r687793027.

Test Plan: Imported from OSS

Reviewed By: albanD

Differential Revision:

D30546789

Pulled By: dagitses

fbshipit-source-id:

3046aff4f129d5492d73dfb67717a824e16ffee8

Kefei Lu [Thu, 26 Aug 2021 07:51:53 +0000 (00:51 -0700)]

Add logging for _MinimizerBase

Summary: Add logging so we know which nodes are currently being visited

Test Plan: lint & SC tests

Reviewed By:

842974287

Differential Revision:

D30509865

fbshipit-source-id:

09e77e44c97c825242e0b24f90463b50f3ca19c6

Rohan Varma [Thu, 26 Aug 2021 06:48:58 +0000 (23:48 -0700)]

Fix issue re: DDP and create_graph=True (#63831)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63831

Closes https://github.com/pytorch/pytorch/issues/63812

`at::mul_out` is not supported when `grad` itself requires grad, which is useful for computing higher order derivatives.

In this case, fall back to a mul + copy instead of mul_out.

ghstack-source-id:

136614644

Test Plan: UT

Reviewed By: SciPioneer

Differential Revision:

D30505573

fbshipit-source-id:

83532b6207b3d80116fcc4dff0e5520d73b3454f

Marjan Fariborz [Thu, 26 Aug 2021 06:40:09 +0000 (23:40 -0700)]

Adding BFP16 quantization/dequantization support to OSS (#63059)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63059

Adds support for the BFP16 quantization method to OSS. Currently only CPU is supported.

ghstack-source-id:

136639528

Test Plan: Imported from OSS

Reviewed By: wanchaol

Differential Revision:

D30194538

fbshipit-source-id:

ac248567ad8028457c2a91b77ef2ce81709fce53

Kiuk Chung [Thu, 26 Aug 2021 05:56:33 +0000 (22:56 -0700)]

(torch.distributed) Add torch.distributed.is_torchelastic_launched() util method + make init_method=tcp:// compatible with torchelastic (#63910)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63910

Addresses the current issue that `init_method=tcp://` is not compatible with `torch.distributed.run` and `torch.distributed.launch`. When running with a training script that initializes the process group with `init_method=tcp://localhost:$port` as such:

```

$ python -u -m torch.distributed.run --max_restarts 0 --nproc_per_node 1 --nnodes 1 --master_addr $(hostname) --master_port 6000 ~/tmp/test.py

```

An `Address in use` error is raised since the training script tries to create a TCPStore on port 6000, which is already taken since the elastic agent is already running a TCPStore on that port.

For details see: https://github.com/pytorch/pytorch/issues/63874.

This change does a couple of things:

1. Adds `is_torchelastic_launched()` check function that users can use in the training scripts to see whether the script is launched via torchelastic.

1. Update the `torch.distributed` docs page to include the new `is_torchelastic_launched()` function.

1. Makes `init_method=tcp://` torchelastic compatible by modifying `_tcp_rendezvous_handler` in `torch.distributed.rendezvous` (this is NOT the elastic rendezvous, it is the old rendezvous module which is slated for deprecation in future releases) to check `is_torchelastic_launched()` AND `torchelastic_use_agent_store()` and if so, only create TCPStore clients (no daemons, not even for rank 0).

1. Adds a bunch of unittests to cover the different code paths

NOTE: the issue mentions that we should fail-fast with an assertion on `init_method!=env://` when `is_torchelastic_launched()` is `True`. There are three registered init_methods in pytorch: env://, tcp://, file://. Since this diff makes tcp:// compatible with torchelastic and I've validated that file:// is compatible with torchelastic, there is no need to add assertions. I did update the docs to point out that env:// is the RECOMMENDED init_method. We should probably deprecate the other init_methods in the future but this is out of scope for this issue.
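The decision described in the list above can be sketched as follows. This is an illustration under stated assumptions, not the code from this diff: the environment variable names `TORCHELASTIC_RUN_ID` and `TORCHELASTIC_USE_AGENT_STORE` are assumed, and both helper functions are hypothetical.

```python
import os


def launched_by_torchelastic(env=None) -> bool:
    """Assumption: the elastic agent exports TORCHELASTIC_RUN_ID to its workers."""
    env = os.environ if env is None else env
    return env.get("TORCHELASTIC_RUN_ID") is not None


def tcp_store_role(rank: int, env=None) -> str:
    """Decide whether this process would host the store or only connect to it."""
    env = os.environ if env is None else env
    # Assumption: the value "True" signals that the agent already hosts the store.
    use_agent_store = env.get("TORCHELASTIC_USE_AGENT_STORE") == "True"
    if launched_by_torchelastic(env) and use_agent_store:
        return "client"  # no daemon, not even for rank 0
    return "server" if rank == 0 else "client"


elastic_env = {"TORCHELASTIC_RUN_ID": "demo", "TORCHELASTIC_USE_AGENT_STORE": "True"}
print(tcp_store_role(0, {}))           # plain launch: rank 0 hosts the store
print(tcp_store_role(0, elastic_env))  # elastic launch: rank 0 only connects
```

Making rank 0 a client under the agent is what avoids the `Address in use` error, since the port is then bound only once.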

Test Plan: Unittests.

Reviewed By: cbalioglu

Differential Revision:

D30529984

fbshipit-source-id:

267aea6d4dad73eb14a2680ac921f210ff547cc5

Joseph Spisak [Thu, 26 Aug 2021 05:49:22 +0000 (22:49 -0700)]

Update persons_of_interest.rst (#63907)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63907

Reviewed By: jspisak

Differential Revision:

D30534972

Pulled By: dzhulgakov

fbshipit-source-id:

ba726fc53e292a362c387cc8b5f7776ca2a2544c

Philip Meier [Thu, 26 Aug 2021 05:04:44 +0000 (22:04 -0700)]

enable equal_nan for complex values in isclose (#63571)

Summary: Pull Request resolved: https://github.com/pytorch/pytorch/pull/63571

Test Plan: Imported from OSS

Reviewed By: malfet, ngimel

Differential Revision:

D30560127

Pulled By: mruberry

fbshipit-source-id:

8958121ca24e7c139d869607903aebbe87bc0740

nikithamalgi [Thu, 26 Aug 2021 04:47:50 +0000 (21:47 -0700)]

Clean up related to type refinements (#62444)

Summary:

Creates a helper function in the MonkeyType config that refines types into a TorchScript-compatible format for profile-directed typing.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62444

Reviewed By: malfet

Differential Revision:

D30548159

Pulled By: nikithamalgifb

fbshipit-source-id:

7c09ce5f5e043d069313b87112837d7e226ade1f

Zeina Migeed [Thu, 26 Aug 2021 03:42:14 +0000 (20:42 -0700)]

inference for algebraic expressions (#63822)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63822

Infer algebraic expressions and add them to our symbolic inferencer. This works for conv2D and can be extended to other operations.
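For reference, the kind of algebraic relation involved for conv2D is the standard output-size formula, applied independently to each spatial dimension. The sketch below evaluates it numerically in plain Python; the function name is hypothetical and this is not the inferencer's code, which presumably works with symbolic dimensions rather than concrete integers.

```python
def conv_output_size(size, kernel, stride=1, padding=0, dilation=1):
    """Output length of one spatial dimension of a convolution.

    Uses the standard formula
        floor((size + 2*padding - dilation*(kernel - 1) - 1) / stride) + 1
    """
    return (size + 2 * padding - dilation * (kernel - 1) - 1) // stride + 1
```

For example, a 224-wide input with a 7-wide kernel, stride 2, and padding 3 gives an output width of 112.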

Test Plan: Imported from OSS

Reviewed By: jamesr66a

Differential Revision:

D30518469

Pulled By: migeed-z

fbshipit-source-id:

b92dfa40b2d834a535177da42b851701b8f7178c

Zafar Takhirov [Thu, 26 Aug 2021 03:37:56 +0000 (20:37 -0700)]

[quant] Fixing the conversion of the quantizable RNN (#63879)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63879

Quantizable RNN had a bug: `from_observed` was an instance method instead of a class method, which caused `tq.convert` to fail. This fixes the issue by making `from_observed` a classmethod.

The tests were passing before because the unit tests did not exercise the custom module path; they used the conventional `from_float`, which is also supported.
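A minimal, PyTorch-free illustration of the failure mode follows. The class names and the `convert` helper are hypothetical; only the `from_observed` name comes from the description above. A converter that calls `from_observed` on the class, as is assumed here, fails when it is a plain instance method and succeeds once it is a classmethod.

```python
class Observed:
    """Stand-in for an observed (calibrated) module."""
    scale = 0.5


class BrokenQuantized:
    # Bug: a plain method, so calling it on the class binds `observed`
    # to `self` and leaves `other` unfilled.
    def from_observed(self, other):
        return self


class FixedQuantized:
    def __init__(self, scale):
        self.scale = scale

    @classmethod
    def from_observed(cls, other):
        # `cls` is supplied automatically, so the class-level call works.
        return cls(other.scale)


def convert(quantized_cls, observed):
    """Hypothetical converter that calls from_observed on the class."""
    return quantized_cls.from_observed(observed)
```

Calling `convert(BrokenQuantized, Observed())` raises a `TypeError` for the missing positional argument, while `convert(FixedQuantized, Observed())` returns a new `FixedQuantized` instance.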

Test Plan:

`buck test mode/dev //caffe2/test:quantization -- test_custom_module_lstm`

```

buck test mode/dev //caffe2/test:quantization -- test_custom_module_lstm

Parsing buck files: finished in 0.5 sec

Downloaded 0/2 artifacts, 0.00 bytes, 100.0% cache miss (for updated rules)

Building: finished in 9.2 sec (100%) 12622/12622 jobs, 2/12622 updated

Total time: 9.7 sec

More details at https://www.internalfb.com/intern/buck/build/0d87b987-649f-4d06-b0e2-97b5077

Tpx test run coordinator for Facebook. See https://fburl.com/tpx for details.

Running with tpx session id: cb99305f-65c9-438b-a99f-a0a2a3089778

Trace available for this run at /tmp/tpx-20210824-115652.540356/trace.log

Started reporting to test run: https://www.internalfb.com/intern/testinfra/testrun/5066549645030046

✓ ListingSuccess: caffe2/test:quantization - main (12.550)

✓ Pass: caffe2/test:quantization - test_custom_module_lstm (quantization.core.test_quantized_op.TestQuantizedOps) (174.867)

Summary

Pass: 1

ListingSuccess: 1

If you need help understanding your runs, please follow the wiki: https://fburl.com/posting_in_tpx_users

Finished test run: https://www.internalfb.com/intern/testinfra/testrun/5066549645030046

```

Reviewed By: jerryzh168, mtl67

Differential Revision:

D30520473

fbshipit-source-id:

bc5d0b5bb079fd146e2614dd42526fc7d4d4f3c6

Zhengxu Chen [Thu, 26 Aug 2021 03:09:12 +0000 (20:09 -0700)]

Make frozen symbol name customizable in torch deploy. (#63817)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63817

ghstack-source-id:

136699671

Test Plan: eyes

Reviewed By: wconstab

Differential Revision:

D29571559

fbshipit-source-id:

8e3caa4932ef8d7c8559f264f0e9bb5474ad2237

Natalia Gimelshein [Thu, 26 Aug 2021 01:17:10 +0000 (18:17 -0700)]

Compute cuda reduction buffer size in elements (#63969)

Summary:

Resubmit of https://github.com/pytorch/pytorch/issues/63885

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63969

Reviewed By: mruberry

Differential Revision:

D30549423

Pulled By: ngimel

fbshipit-source-id:

b16d25030d44ced789c125a333d72b02a8f45067

Jerry Zhang [Thu, 26 Aug 2021 00:50:48 +0000 (17:50 -0700)]

Back out "Revert D30384746: [fx2trt] Add a test for quantized resnet18" (#63973)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63973

Original commit changeset:

b93235323e22

Test Plan: buck run mode/opt -c python.package_style=inplace caffe2:fx2trt_quantized_resnet_test

Reviewed By: 842974287

Differential Revision:

D30546036

fbshipit-source-id:

2c8302456f072d04da00cf9ad97aa8304bc5e43e

Philip Meier [Wed, 25 Aug 2021 23:42:14 +0000 (16:42 -0700)]

replace `self.assertTrue(torch.allclose(..))` with `self.assertEqual(…)` (#63637)

Summary:

Fixes https://github.com/pytorch/pytorch/issues/63565

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63637

Reviewed By: malfet

Differential Revision:

D30541266

Pulled By: mruberry

fbshipit-source-id:

ab461949782c6908a589ea098fcfcf5c3e081ee6

David Riazati [Wed, 25 Aug 2021 22:54:31 +0000 (15:54 -0700)]

Remove render_test_results job (#63877)

Summary:

This removes the `render_test_results` job, which confused developers when it failed and is no longer necessary now that test results are rendered on the PR HUD.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63877

Reviewed By: walterddr, janeyx99

Differential Revision:

D30546705

Pulled By: driazati

fbshipit-source-id:

55fdafdb6f80924d941ffc15ee10787cb54f34a1

John Clow [Wed, 25 Aug 2021 22:27:37 +0000 (15:27 -0700)]

[EASY] Update the clang-tidy error message (#63370)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/63370

As shown by this CI run, what is actually incorrect is the prompt: https://github.com/pytorch/pytorch/actions/runs/1137298261

The CI runs the command below instead of the original command. The original command errors out when importing another file on line 1, and changing the code to work with the original command causes the CI to error out.

We should instead ask the user to run

`python3 -m tools.linter.install.clang_tidy`

Test Plan: Imported from OSS

Reviewed By: janeyx99, heitorschueroff

Differential Revision:

D30530216

Pulled By: Gamrix

fbshipit-source-id:

2a2b8d539dcc2839e4000c13e82c207fa89bfc9f

Peter Bell [Wed, 25 Aug 2021 22:05:14 +0000 (15:05 -0700)]

Shard python_torch_functions.cpp (#62187)

Summary:

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62187

This file can take 3 minutes to compile on its own and, after python_functions.cpp, is the second limiting factor for the compile time of `libtorch_python` on a 32-core Threadripper. This splits it into 3 files that take around 1 minute each to compile.
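The general idea of sharding generated code can be sketched as below: each function name is assigned to one of a fixed number of output files by a stable hash, so that a given function always lands in the same file across builds. This is an illustration only; the use of `zlib.crc32`, the helper name, and any sample function names are assumptions, not the scheme the code generator actually uses.

```python
import zlib


def shard_names(names, num_shards=3):
    """Group names into num_shards buckets using a stable hash."""
    shards = [[] for _ in range(num_shards)]
    for name in sorted(names):
        # crc32 is deterministic across runs, unlike the built-in hash()
        # for strings, which is salted per process.
        index = zlib.crc32(name.encode("utf-8")) % num_shards
        shards[index].append(name)
    return shards
```

Because the assignment depends only on the name, adding or removing one function leaves every other function in its existing shard, which keeps incremental rebuilds small.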

Test Plan: Imported from OSS

Reviewed By: H-Huang

Differential Revision:

D29962048

Pulled By: albanD

fbshipit-source-id:

99016d75912bff483fe21b130cef43a6882f8c0e

Jithun Nair [Wed, 25 Aug 2021 22:00:47 +0000 (15:00 -0700)]

Add note on ifdefing based on CUDA_VERSION for ROCm path (#62850)

Summary:

CUDA_VERSION and HIP_VERSION follow unrelated versioning schemes, so it does not make sense to use CUDA_VERSION to determine the ROCm path. This note states that explicitly.

Pull Request resolved: https://github.com/pytorch/pytorch/pull/62850

Reviewed By: mruberry

Differential Revision:

D30547562

Pulled By: malfet

fbshipit-source-id:

02990fa66a88466c2330ab85f446b25b78545150